Past Projects

From Immersive Visualization Lab Wiki

(→GreenLight Blackbox (Mabel Zhang, Andrew Prudhomme, Seth Rotkin, Philip Weber, Grant van Horn, Connor Worley, Quan Le, Hesler Rodriguez, 2008-)) |

|||

| (21 intermediate revisions by one user not shown) | |||

| Line 1: | Line 1: | ||

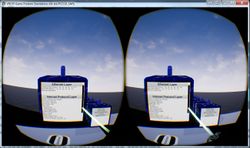

| − | ===[[TelePresence]] (Seth Rotkin, Mabel Zhang, 2010-)=== | + | ===[[Soft Robotic Glove for Haptic Feedback in Virtual Reality]] (Saurabh Jadhav, 2017)=== |

| + | |||

| + | <table> | ||

| + | <tr> | ||

| + | <td>[[File:Cover1.jpg]]</td> | ||

| + | <td>User interacting with the haptic glove in Virtual Reality</td> | ||

| + | </tr> | ||

| + | </table> | ||

| + | <hr> | ||

| + | |||

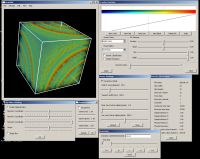

VOX and Virvo (Jurgen Schulze, 1999-2012)

Development of real-time volume rendering algorithms for interactive display at the desktop (DeskVOX) and in virtual environments (CaveVOX). Virvo is the name of the GUI-independent, OpenGL-based volume rendering library that both DeskVOX and CaveVOX use.

| + | ===[[Fringe Physics]] (Robert Maloney, 2014-2015)=== | ||

| + | <table> | ||

| + | <tr> | ||

| + | <td>[[Image:PhysicsLab_FinalScene.png|250px]]</td> | ||

| + | <td></td> | ||

| + | </tr> | ||

| + | </table> | ||

| + | <hr> | ||

| + | |||

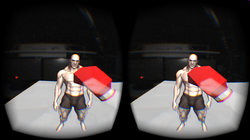

Boxing Simulator (Russell Larson, 2014)

Parallel Raytracing (Rex West, 2014)

Altered Reality (Jonathan Shamblen, Cody Waite, Zach Lee, Larry Huynh, Liz Cai, 2013)

| + | ===[[Focal Stacks]] (Jurgen Schulze, 2013)=== | ||

| + | <table> | ||

| + | <tr> | ||

| + | <td>[[Image:Coral-thumbnail.png|250px]]</td> | ||

| + | <td>SIO will soon have a new microscope which can generate focal stacks faster than before. We are working on algorithms to visualize and analyze these focal stacks.</td> | ||

| + | </tr> | ||

| + | </table> | ||

| + | <hr> | ||

| + | |||

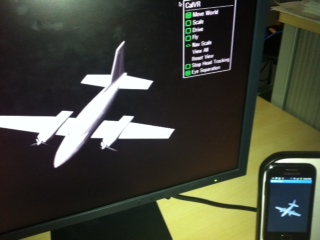

Android Head Tracking (Ken Dang, 2013)

The goal of this project is to create an Android app that uses face detection algorithms to enable head tracking on mobile devices.

Automatic Stereo Switcher for the ZSpace (Matt Kubasak, Thomas Gray, 2013-2014)

We created an Arduino-based solution to a problem under Linux: the ZSpace's left and right views are initially displayed in random order. The Arduino, together with custom software, senses which eye's view is being displayed at which time, so that CalVR can swap the eyes if necessary and show a correct stereo image.

ZSculpt - 3D Sculpting with the Leap (Thinh Nguyen, 2013)

The goal of this project is to explore the use of the Leap Motion device for 3D sculpting.

Magic Lens (Tony Chan, Michael Chao, 2013)

The goal of this project is to research the use of smartphones in a virtual reality environment.

Pose Estimation for a Mobile Device (Kuen-Han Lin, 2013)

The goal of this project is to develop an algorithm that runs on a PC and estimates the pose of a mobile Android device connected to it via Wi-Fi.

Multi-User Graphics with Interactive Control (MUGIC) (Shahrokh Yadegari, Philip Weber, Andy Muehlhausen, 2012-)

This project gives users simplified and versatile access to CalVR systems through network rendering commands. Users can create computer graphics in their own environments and easily display the output on any CalVR wall or system. See the project in action (http://www.youtube.com/watch?v=7-q8cl9EUD4&noredirect=1) and a condensed lecture on the mechanisms (http://www.youtube.com/watch?v=8bS0Borb-f8&noredirect=1).

PanoView360 (Andrew Prudhomme, Dan Sandin, 2010-)

Researchers at UIC/EVL and UCSD/Calit2 have developed a method to acquire very high-resolution, surround stereo panorama images using dual SLR cameras. This VR application displays these images, each approximately a gigabyte in size, in real time and supports real-time changes of viewing direction and zoom.

VR Deep Packet Inspector (Marlon West, 2017)

Explore network communications in virtual reality.

MoveInVR (Lachlan Smith, 2016)

If you want to use the Sony PlayStation Move as a 3D controller for the PC, see the Playstation Move UDP API page.

Rendezvous for Glass (Qiwen Gao, 2014)

GoPro Synchronization (Thomas Gray, 2013)

The goal of this project is to create a multi-camera video capture system.

AndroidAR (Kristian Hansen, Mads Pedersen, 2012)

Tracked Android phones are used to view 3D models and to draw in 3D.

| + | ===[[ArtifactVis with Android Device]] (Sahar Aseeri, 2012)=== | ||

| + | <table> | ||

| + | <tr> | ||

| + | <td>[[Image:b.jpg]]</td> | ||

| + | <td>In this project, an Android device is used to control the ArtifactVis application and to view images of the artifacts on the mobile device.</td> | ||

| + | </tr> | ||

| + | </table> | ||

| + | <hr> | ||

| + | |||

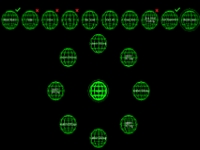

| + | ===[[Bubble Menu]] (Cathy Hughes, 2012)=== | ||

| + | <table> | ||

| + | <tr> | ||

| + | <td>[[Image:BubbleMenuSmall.jpg]]</td> | ||

| + | <td>The purpose of this project is to create a new 3D user interface designed to be controlled using a Microsoft Kinect. The new menu system is based on wireframe spheres designed to be selected using gestures, and it features animated menu transitions, customizable menus, and sound effects.</td> | ||

| + | </tr> | ||

| + | </table> | ||

| + | <hr> | ||

| + | |||

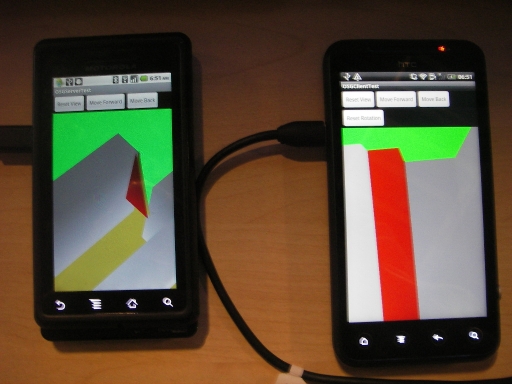

Multi-user virtual reality on mobile phones (James Lue, 2012-2013)

This project is based on Android phones. Users can navigate a virtual world on their phones and see the other users in it. They can interact with objects in the scene (such as opening doors), and the other users see these actions. The phones are connected over a wireless network. Currently, only two phones can be connected to each other.

| + | ===[[Mobile Old Town Osaka Viewer]] (Sumin Wang, 2012)=== | ||

| + | <table> | ||

| + | <tr> | ||

| + | <td>[[Image:image-missing.jpg]]</td> | ||

| + | <td>This project aims at creating an Android-based viewer similar to Google Streetview, which allows the user to view a 3D model of old town Osaka, Japan. We collaborate with the Cybermedia Institute of Osaka University on this project.</td> | ||

| + | </tr> | ||

| + | </table> | ||

| + | <hr> | ||

| + | |||

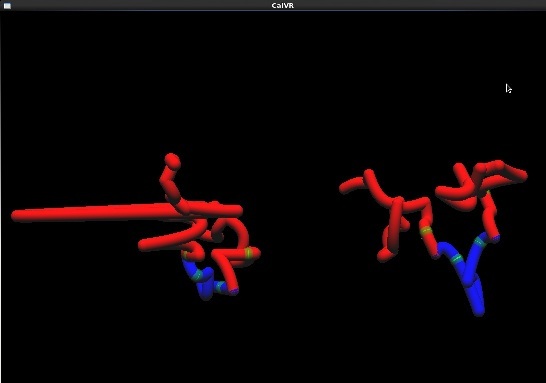

3D Chromosome Viewer (Yixin Zhu, 2012)

The purpose of this project is to create a three-dimensional chromosome viewer to facilitate the visualization of intra-chromosomal interactions, genomic features and their relationships, and the discovery of new genes.

CameraFlight (William Seo, 2012)

This project's goal is to create automatic camera flights from one place to another in osgEarth.

AppGlobe Infrastructure (Chris McFarland, Philip Weber, 2012)

This project's goal is to create an application switcher for osgEarth-based CalVR plugins.

GUI Sketching Tool (Cathy Hughes, Andrew Prudhomme, 2012)

This project's goal is to develop a 3D sketching tool for a VR GUI.

San Diego Wildfires (Philip Weber, Jessica Block, 2011)

The Cedar Fire was a human-caused wildfire that destroyed a large number of buildings and much infrastructure in San Diego County in October 2003. This application shows high-resolution aerial data of the areas affected by the fires. The data resolution is very high: 0.5 meters for the imagery and 2 meters for the elevation data. Our demonstration embeds this data in an osgEarth-based visualization framework, which allows such data to be added at any place on the planet and viewed in a way similar to Google Earth, but with full support for high-end visualization systems.
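To put these resolutions in perspective, the short calculation below counts how many samples cover one square kilometer at each resolution. It is plain arithmetic on the 0.5 m and 2 m figures above. The 1 km tile size and the function name `samples_per_tile` are illustrative choices, not part of the project.

```python
def samples_per_tile(tile_size_m: float, resolution_m: float) -> int:
    """Grid samples covering a square tile of side tile_size_m when sampled
    every resolution_m meters (tile side assumed to be a multiple of the spacing)."""
    per_side = round(tile_size_m / resolution_m)
    return per_side * per_side


TILE_M = 1000.0  # 1 km x 1 km tile, chosen only for illustration

imagery = samples_per_tile(TILE_M, 0.5)    # aerial imagery: 0.5 m per pixel
elevation = samples_per_tile(TILE_M, 2.0)  # elevation grid: 2 m per sample

print(f"imagery:   {imagery:,} pixels per square kilometer")
print(f"elevation: {elevation:,} samples per square kilometer")
print(f"imagery has {imagery // elevation}x as many samples as elevation")
```

At these resolutions, one square kilometer holds 4,000,000 imagery pixels but only 250,000 elevation samples, a 16x difference. This is why the two layers are usually stored and streamed as separate grids.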

ArtifactVis (Kyle Knabb, Jurgen Schulze, Connor DeFanti, 2008-2012)

For the past ten years, a joint research team from the University of California, San Diego and the Department of Antiquities of Jordan, led by Professor Tom Levy and Dr. Mohammad Najjar, has been investigating the role of mining and metallurgy in social evolution from the Neolithic period (ca. 7500 BC) to medieval Islamic times (ca. 12th century AD). Kyle Knabb has been working with the IVL as a master's student under Professor Thomas Levy of the archaeology department. He created a 3D visualization for the StarCAVE that displays several excavation sites in Jordan, along with the artifacts found there and radiocarbon dating sites. The data resides in a PostgreSQL database with the PostGIS extension, from which the VR application retrieves it at run time.

| + | ===[[OpenAL Audio Server]] (Shreenidhi Chowkwale, Summer 2011)=== | ||

| + | <table> | ||

| + | <tr> | ||

| + | <td>[[Image:image-missing.jpg]]</td> | ||

| + | <td>A Linux-based audio server that uses the OpenAL API to deliver surround sound to virtual visualization environments.</td> | ||

| + | </tr> | ||

| + | </table> | ||

| + | <hr> | ||

| + | |||

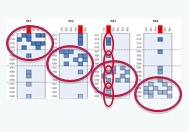

GreenLight BlackBox 2.0 (John Mangan, Alfred Tarng, 2011-2012)

The SUN Mobile Data Center at UCSD has been equipped with a myriad of sensors as part of the NSF-funded GreenLight project. This virtual reality application conveys the sensor information to the user while retaining the spatial information inherent in the arrangement of the hardware in the data center. The application connects online to a database that collects all sensor information as it becomes available and stores its complete history. The VR application can show the current energy consumption and temperatures of the hardware in the container, and it can also be used to query arbitrary time windows in the past.
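Querying an arbitrary past time window over a stored sensor history comes down to a range search on timestamps. The sketch below shows that idea under a hypothetical assumption: one sensor's readings are kept in memory, sorted by timestamp, and searched with Python's `bisect` module. The description does not give the real application's database schema, and the class `SensorHistory` is invented for illustration.

```python
from bisect import bisect_left, bisect_right


class SensorHistory:
    """Hypothetical append-only history of one sensor, kept sorted by timestamp."""

    def __init__(self) -> None:
        self.timestamps: list[int] = []
        self.values: list[float] = []

    def append(self, timestamp: int, value: float) -> None:
        # Readings arrive in time order, so appending keeps both lists sorted.
        if self.timestamps and timestamp < self.timestamps[-1]:
            raise ValueError("readings must be appended in time order")
        self.timestamps.append(timestamp)
        self.values.append(value)

    def latest(self) -> tuple[int, float]:
        """Most recent reading, i.e. the 'current' value."""
        return self.timestamps[-1], self.values[-1]

    def window(self, start: int, end: int) -> list[tuple[int, float]]:
        """All readings with start <= timestamp <= end, located by binary search."""
        lo = bisect_left(self.timestamps, start)
        hi = bisect_right(self.timestamps, end)
        return list(zip(self.timestamps[lo:hi], self.values[lo:hi]))


# Made-up temperature readings (degrees C), one per minute.
history = SensorHistory()
for t, temp in [(0, 21.0), (60, 22.0), (120, 24.0), (180, 23.0), (240, 25.0)]:
    history.append(t, temp)

print(history.latest())         # current value
print(history.window(60, 180))  # a past time window
```

Here `latest()` returns `(240, 25.0)`, and `window(60, 180)` returns the three readings from 60 s to 180 s. Because both bounds are located by binary search, the cost of a query grows with the size of the window rather than with the length of the whole history.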

| + | ===[[ScreenMultiViewer]] (John Mangan 2011)=== | ||

| + | <table> | ||

| + | <tr> | ||

| + | <td>[[Image:image-missing.jpg]]</td> | ||

| + | <td>A display mode within CalVR that allows two users to simultaneously use head trackers within either the StarCAVE or Nexcave, with minimal immersion loss.</td> | ||

| + | </tr> | ||

| + | </table> | ||

| + | <hr> | ||

| + | |||

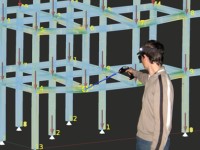

CaveCAD (Lelin Zhang, 2009-2011)

Calit2 researcher Lelin Zhang provides architectural designers with a fully immersive 3D experience in the virtual reality environment of the StarCAVE.

Neuroscience and Architecture (Daniel Rohrlick, Michael Bajorek, Mabel Zhang, Lelin Zhang, 2007-2011)

This project started as a Calit2 seed-funded pilot study with the Swartz Center for Neuroscience. In the study, a human subject has to find their way to specific locations in the Calit2 building while their brain waves are recorded by a high-resolution EEG. Michael was responsible for the interface between the StarCAVE and the EEG system, which transfers tracker data and other application parameters so that EEG data can be correlated with VR parameters. Daniel created the 3D model of the New Media Arts wing of the building using 3ds Max. Mabel refined the Calit2 building geometry. This project has been receiving funding from HMC.

Android Navigator (Brooklyn Schlamp, Summer 2011)

This project implements an Android-phone-based navigation tool for the CalVR environment.

Ground Penetrating Radar (Philip Weber, Albert Lin, 2011)

Calit2's Albert Yu-Min Lin has been named to the 2010 class of National Geographic Emerging Explorers. His current research goal is to find the tomb of Genghis Khan in Mongolia without physically turning a single rock. Instead, he analyzes a variety of data modalities such as satellite imagery, aerial photography, and sub-surface radar. This demonstration shows three scanning modalities in one visualization application: magnetic, electromagnetic, and ground penetrating radar. Ground penetrating radar reaches deepest into the ground, so we chose to focus on it for visualizing depth data. The data is visualized as spheres with a depth-based color scheme. The user can interactively select how deep underground to go, and then fly around to examine the data and put it in perspective with the other two modalities.
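The depth selection and depth-based sphere coloring described above can be sketched as follows. The sample format `RadarSample`, the linear red-to-blue color ramp, the 10 m maximum depth, and the mapping of depth to a negative z coordinate are all assumptions for illustration. The description does not specify the application's actual color scheme or data layout.

```python
from dataclasses import dataclass


@dataclass
class RadarSample:
    """Hypothetical GPR sample: horizontal position (m) and depth below ground (m)."""
    x: float
    y: float
    depth: float


def depth_to_rgb(depth: float, max_depth: float) -> tuple[float, float, float]:
    """Linear ramp from red at the surface to blue at max_depth (clamped)."""
    t = min(max(depth / max_depth, 0.0), 1.0)
    return (1.0 - t, 0.0, t)


def spheres_for_display(samples: list[RadarSample], max_visible_depth: float,
                        max_depth: float = 10.0):
    """Return one (position, color) pair per sphere, hiding samples deeper than
    the depth the user selected. Depth is mapped to a negative z coordinate."""
    return [
        ((s.x, s.y, -s.depth), depth_to_rgb(s.depth, max_depth))
        for s in samples
        if s.depth <= max_visible_depth
    ]


samples = [RadarSample(0.0, 0.0, 0.0), RadarSample(1.0, 0.0, 5.0), RadarSample(2.0, 0.0, 10.0)]
spheres = spheres_for_display(samples, max_visible_depth=5.0)
for position, color in spheres:
    print(position, color)
```

With the user's depth limit set to 5 m, the 10 m sample is hidden, and the 5 m sample is drawn halfway along the color ramp.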

3D Reconstruction of Photographs (Matthew Religioso, 2011)

This project reconstructs static objects from photographs into 3D models using the Bundler algorithm and the texturing algorithm developed by prior students. Its goal is to optimize the texturing for photorealism and efficiency, and to run the resulting application in the StarCAVE.

Real-Time Geometry Scanning System (Daniel Tenedorio, 2011)

This interactive system constructs a 3D model of the environment as a user moves an infrared geometry camera around a room. We display the intermediate representation of the scene in real time on virtual reality displays ranging from a single computer monitor to immersive, stereoscopic projection systems like the StarCAVE.

Real-Time Meshing of Dynamic Point Clouds (Robert Pardridge, James Lue, 2011)

This project involves generating a triangle mesh over a point cloud that grows dynamically. The goal is to implement a meshing algorithm that is fast enough to keep up with the streaming input from a scanning device. We are using a CUDA implementation of the Marching Cubes algorithm to triangulate, in real time, a point cloud obtained from the Kinect depth camera.
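The core per-cell step of Marching Cubes (Lorensen and Cline) classifies each cube of a scalar grid by which of its eight corners lie inside the surface. The resulting 8-bit index selects one of 256 triangle configurations from a lookup table. The sketch below shows only this classification step, in plain Python with NumPy on the CPU. It uses the common convention that a corner is inside when its value is below the iso-level. The 256-entry triangle table, edge interpolation, and the CUDA parallelization used in the project are omitted. How the project turned Kinect points into a scalar field is not described, so the grid here is a made-up example.

```python
import numpy as np

# Corner offsets (dx, dy, dz); corner i sets bit i of the case index.
# Bottom face is corners 0-3, top face 4-7. A triangle lookup table used with
# these indices must assume the same corner numbering.
CORNER_OFFSETS = [
    (0, 0, 0), (1, 0, 0), (1, 1, 0), (0, 1, 0),
    (0, 0, 1), (1, 0, 1), (1, 1, 1), (0, 1, 1),
]


def cube_index(field: np.ndarray, x: int, y: int, z: int, iso: float) -> int:
    """8-bit case index of the cell whose lowest corner is grid point (x, y, z)."""
    index = 0
    for bit, (dx, dy, dz) in enumerate(CORNER_OFFSETS):
        if field[x + dx, y + dy, z + dz] < iso:  # this corner is inside the surface
            index |= 1 << bit
    return index


def surface_cells(field: np.ndarray, iso: float) -> list[tuple[int, int, int, int]]:
    """(x, y, z, case_index) for every cell the iso-surface passes through.

    Index 0 (all corners outside) and 255 (all inside) produce no triangles.
    """
    nx, ny, nz = field.shape
    cells = []
    for x in range(nx - 1):
        for y in range(ny - 1):
            for z in range(nz - 1):
                idx = cube_index(field, x, y, z, iso)
                if idx not in (0, 255):
                    cells.append((x, y, z, idx))
    return cells


# Made-up example: distance from the center of a 5x5x5 grid; iso = 1.5 is a sphere.
coords = np.arange(5) - 2.0
X, Y, Z = np.meshgrid(coords, coords, coords, indexing="ij")
field = np.sqrt(X**2 + Y**2 + Z**2)
cells = surface_cells(field, iso=1.5)
print(len(cells), "of", 4 ** 3, "cells intersect the surface")
```

Each cell is classified independently of the others, which is what makes the algorithm a good fit for a GPU implementation such as the CUDA version mentioned above.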

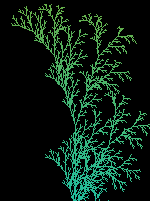

LSystems (Sarah Larsen, 2011)

This project creates an L-system and displays it with either line or cylinder connections.
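An L-system grows a string by rewriting every symbol in parallel according to production rules. The resulting string is then interpreted as drawing commands, for example with turtle graphics, and each drawn step becomes a line or a cylinder. The sketch below shows the rewriting step and a simple 2D turtle interpretation that produces line segments. The plant grammar (a classic textbook example), the 25-degree angle, and the function names are illustrative assumptions, not the project's code.

```python
import math


def expand(axiom: str, rules: dict[str, str], iterations: int) -> str:
    """Rewrite every symbol in parallel using `rules`, `iterations` times.
    Symbols without a rule are copied unchanged."""
    s = axiom
    for _ in range(iterations):
        s = "".join(rules.get(ch, ch) for ch in s)
    return s


def to_segments(commands: str, step: float = 1.0, angle_deg: float = 25.0):
    """Interpret an L-system string as 2D turtle commands.

    F draws a segment forward, + and - turn left and right by angle_deg,
    [ and ] save and restore the turtle state. Each returned segment could be
    rendered either as a line or as a cylinder between its two endpoints.
    """
    x, y, heading = 0.0, 0.0, 90.0  # start at the origin, pointing up
    stack = []
    segments = []
    for ch in commands:
        if ch == "F":
            nx = x + step * math.cos(math.radians(heading))
            ny = y + step * math.sin(math.radians(heading))
            segments.append(((x, y), (nx, ny)))
            x, y = nx, ny
        elif ch == "+":
            heading += angle_deg
        elif ch == "-":
            heading -= angle_deg
        elif ch == "[":
            stack.append((x, y, heading))
        elif ch == "]":
            x, y, heading = stack.pop()
    return segments


# A classic "fractal plant" grammar; X only steers growth and draws nothing.
PLANT_RULES = {"X": "F+[[X]-X]-F[-FX]+X", "F": "FF"}
plant = expand("X", PLANT_RULES, 4)
print(len(plant), "symbols,", len(to_segments(plant)), "segments")
```

Keeping rewriting and interpretation separate means the same expanded string can be drawn with either connection style simply by changing how each segment is rendered.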

Android Controller (Jeanne Wang, 2011)

An Android-based controller for a visualization system such as the StarCAVE or a multiscreen grid.

| + | ===[[Object-Oriented Interaction with Large High Resolution Displays]] (Lynn Nguyen 2011)=== | ||

| + | <table> | ||

| + | <tr> | ||

| + | <td>[[Image:Lynn_selected.jpg]]</td> | ||

| + | <td>Investigate the practicality of using smartphones to interact with large high resolution displays. To accomplish such a | ||

| + | task, it is not necessary to find the spatial location of the phone relative to the display, rather we can identify the object a user wants to interact with through image recognition. The interaction with the object itself can be done by using the smart-phone as the medium. The feasibility of this concept is investigated by implementing a prototype.</td> | ||

| + | </tr> | ||

| + | </table> | ||

| + | <hr> | ||

| + | |||

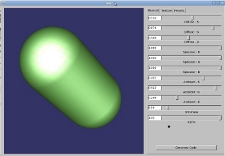

MatEdit (Khanh Luc, 2011)

A graphical user interface for programmers to adjust and preview material properties on an object. Once programmers have determined the proper parameters for the look of a material, they can generate code that achieves that look.

Kinect UI for 3D Pacman (Tony Lu, 2011)

An experiment with the Kinect to implement a device-free, gesture-controlled user interface in the StarCAVE for a 3D Pacman game.

TelePresence (Seth Rotkin, Mabel Zhang, 2010-2011)

Latest revision as of 00:31, 15 December 2020

Soft Robotic Glove for Haptic Feedback in Virtual Reality (Saurabh Jadhav, 2017)

|

User interacting with the haptic glove in Virtual Reality |

VOX and Virvo (Jurgen Schulze, 1999-2012)

Fringe Physics (Robert Maloney, 2014-2015)

|

Boxing Simulator (Russell Larson, 2014)

|

Parallel Raytracing (Rex West, 2014)

|

Altered Reality (Jonathan Shamblen, Cody Waite, Zach Lee, Larry Huynh, Liz Cai 2013)

|

Focal Stacks (Jurgen Schulze, 2013)

|

SIO will soon have a new microscope which can generate focal stacks faster than before. We are working on algorithms to visualize and analyze these focal stacks. |

Android Head Tracking (Ken Dang, 2013)

| The Project Goal is to create a Android App that would use face detection algorithms to allows head tracking on mobile devices |

Automatic Stereo Switcher for the ZSpace (Matt Kubasak, Thomas Gray, 2013-2014)

ZSculpt - 3D Sculpting with the Leap (Thinh Nguyen, 2013)

|

The goal of this project is to explore the use of the Leap Motion device for 3D sculpting. |

Magic Lens (Tony Chan, Michael Chao, 2013)

|

The goal of this project is to research the use of smart phones in a virtual reality environment. |

Pose Estimation for a Mobile Device (Kuen-Han Lin, 2013)

|

The goal of this project is to develop an algorithm which runs on a PC to estimate the pose of a mobile Android device, linked via wifi. |

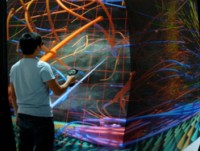

Multi-User Graphics with Interactive Control (MUGIC) (Shahrokh Yadegari, Philip Weber, Andy Muehlhausen, 2012-)

|

Allow users simplified and versatile access to CalVR systems via network rendering commands. Users can create computer graphics in their own environments and easily display the output on any CalVR wall or system. See the project in action, and a condensed lecture on the mechanisms. |

PanoView360 (Andrew Prudhomme, Dan Sandin, 2010-)

VR Deep Packet Inspector (Marlon West, 2017)

| Explore the network communications in Virtual Reality |

MoveInVR (Lachlan Smith, 2016)

|

If you want to use the Sony Move as a 3D controller for the PC look here. |

Rendezvous for Glass (Qiwen Gao, 2014)

|

GoPro Synchronization (Thomas Gray, 2013)

|

The goal of this project is to create a multi-camera video capture system. |

AndroidAR (Kristian Hansen, Mads Pedersen, 2012)

|

Tracked Android phones are used to view 3D models and to draw in 3D. |

ArtifactVis with Android Device (Sahar Aseeri, 2012)

|

In this project, an Android device is used to control the ArtifactVis application and to view images of the artifacts on the mobile device. |

Bubble Menu (Cathy Hughes, 2012)

Multi-user virtual reality on mobile phones (James Lue, 2012-2013)

Mobile Old Town Osaka Viewer (Sumin Wang, 2012)

3D Chromosome Viewer (Yixin Zhu, 2012)

CameraFlight (William Seo, 2012)

|

This project's goal is to create automatic camera flights from one place to another in osgEarth. |

AppGlobe Infrastructure (Chris McFarland, Philip Weber, 2012)

|

This project's goal is to create an application switcher for osgEarth-based CalVR plugins. |

GUI Sketching Tool (Cathy Hughes, Andrew Prudhomme, 2012)

|

This project's goal is to develop a 3D sketching tool for a VR GUI. |

San Diego Wildfires (Philip Weber, Jessica Block, 2011)

ArtifactVis (Kyle Knabb, Jurgen Schulze, Connor DeFanti, 2008-2012)

OpenAL Audio Server (Shreenidhi Chowkwale, Summer 2011)

|

A Linux-based audio server that uses the OpenAL API to deliver surround sound to virtual visualization environments. |

GreenLight BlackBox 2.0 (John Mangan, Alfred Tarng, 2011-2012)

ScreenMultiViewer (John Mangan 2011)

|

A display mode within CalVR that allows two users to simultaneously use head trackers within either the StarCAVE or Nexcave, with minimal immersion loss. |

CaveCAD (Lelin Zhang 2009-2011)

|

Calit2 researcher Lelin ZHANG provides architect designers with pure immersive 3D experience in virtual reality environment of StarCAVE. |

Neuroscience and Architecture (Daniel Rohrlick, Michael Bajorek, Mabel Zhang, Lelin Zhang 2007-2011)

|

This project implements an Android phone based navigaton tool for the CalVR environment. |

Ground Penetrating Radar (Philip Weber, Albert Lin, 2011)

3D Reconstruction of Photographs (Matthew Religioso, 2011)

Real-Time Geometry Scanning System (Daniel Tenedorio, 2011)

Real-Time Meshing of Dynamic Point Clouds (Robert Pardridge, James Lue, 2011)

LSystems (Sarah Larsen 2011)

|

Creates an LSystem and displays it with either line or cylinder connections |

Android Controller (Jeanne Wang 2011)

|

An Android based controller for a visualization system such as StarCave or a multiscreen grid. |

Object-Oriented Interaction with Large High Resolution Displays (Lynn Nguyen 2011)

MatEdit (Khanh Luc, 2011)

Kinect UI for 3D Pacman (Tony Lu, 2011)

|

An experimentation with the Kinect to implement a device free, gesture controlled user interface in the StarCAVE to run a 3D Pacman game. |

TelePresence (Seth Rotkin, Mabel Zhang, 2010-2011)

GreenLight Blackbox (Mabel Zhang, Andrew Prudhomme, Seth Rotkin, Philip Weber, Grant van Horn, Connor Worley, Quan Le, Hesler Rodriguez, 2008-2011)

We created a 3D model of the SUN Mobile Data Center, which is a core component of the instrument procured by the GreenLight project. We added an online connection to the physical container to display the output of its power modules. The project was demonstrated at SIGGRAPH, ISC, and Supercomputing.

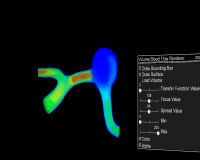

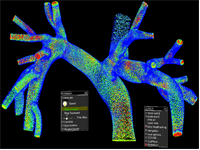

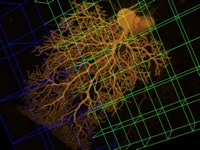

Volumetric Blood Flow Rendering (Yuri Bazilevs, Jurgen Schulze, Alison Marsden, Greg Long, Han Kim, 2011)

Meshing and Texturing Point Clouds (Robert Pardridge, Vikash Nandkeshwar, James Lue, 2011)

These students are using data from the previous PhotosynthVR project to create 3D geometry and textures.

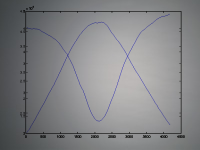

BloodFlow (Yuri Bazilevs, Jurgen Schulze, Alison Marsden, Ming-Chen Hsu, Kenneth Benner, Sasha Koruga; 2009-2010)

In this project, we are working on visualizing the blood flow in an artery, as simulated by Professor Bazilevs at UCSD. Read the Blood Flow Manual for usage instructions. Videos and pictures of the visualizations in 2D can be found here, and the corresponding iPhone versions of the videos can be downloaded here.

PanoView360 (Andrew Prudhomme, 2008-2010)

In collaboration with Professor Dan Sandin from EVL, Andrew created a COVISE plugin to display photographer Richard Ainsworth's panoramic stereo images in the StarCAVE and the Varrier.

PhotosynthVR (Sasha Koruga, Haili Wang, Phi Nguyen, Velu Ganapathy; 2009)

Sasha Koruga (UCSD) has created a Photosynth-like system that displays a number of photographs in the StarCAVE. The images appear and disappear as the user moves around the photographed object. Read the PhotosynthVR Manual for usage instructions.

Multi-Volume Rendering (Han Kim, 2009)

The goal of multi-volume rendering is to visualize multiple volume data sets. Each volume has three or more channels.

How Much Information (Andrew Prudhomme, 2008-2009)

In this project, we visualize data from various collaborating companies that provide us with data stored on hard disks or transferred over networks. In the first stage, Andrew created an application that displays the directory structures of 70,000 hard disk drives of Microsoft employees, sampled over the course of five years. The visualization uses an interactive hyperbolic 3D graph to display the directory trees and to compare different users' trees. It also uses novel data display methods, such as wheel graphs, to show file sizes. More information about this project can be found at [1].

Hotspot Mitigation (Jordan Rhee, 2008-2009)

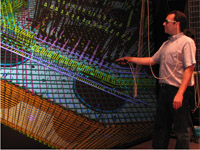

ATLAS in Parallel (Ruth West, Daniel Tenedorio, Todd Margolis, 2008-2009)

Animated Point Clouds (Daniel Tenedorio, Rachel Chu, Sasha Koruga, 2008)

6DOF Tracking with Wii Remotes (Sage Browning, Philip Weber, 2008)

Spatialized Sound (Toshiro Yamada, Suketu Kamdar, 2008)

Video in Virtual Environments (Han Kim, 2008-2010)

LOOKING/ORION (Philip Weber, 2007-2009)

OssimPlanet (Philip Weber, Jurgen Schulze, 2007)

In this project, we ported the open-source OssimPlanet library to COVISE, so that it can run in our VR environments, including the Varrier tiled display wall and the StarCAVE.

CineGrid (Leo Liu, 2007)

Virtual Calit2 Building (Daniel Rohrlick, Mabel Zhang, 2006-2009)

Interaction with Multi-Spectral Images (Philip Weber, Praveen Subramani, Andrew Prudhomme, 2006-2009)

Finite Elements Simulation (Fabian Gerold, 2008-2009)

Palazzo Vecchio (Philip Weber, 2008)

Virtual Architectural Walkthroughs (Edward Kezeli, 2008)

NASA (Andrew Prudhomme, 2008)

Digital Lightbox (Philip Weber, 2007-2008)

Research Intelligence Portal (Alex Zavodny, Andrew Prudhomme, 2007-2008)

New San Francisco Bay Bridge (Andre Barbosa, 2007-2008)

Birch Aquarium (Daniel Rohrlick, 2007-2008)

CAMERA Meta-Data Visualization (Sara Richardson, Andrew Prudhomme, 2007-2008)

Depth of Field (Karen Lin, 2007)

HD Camera Array (Alex Zavodny, Andrew Prudhomme, 2007)

Atlas in Silico for Varrier (Ruth West, Iman Mostafavi, Todd Margolis, 2007)

Screen (Noah Wardrip-Fruin, 2007)

Under the guidance of Noah Wardrip-Fruin and Jurgen Schulze, Ava Pierce, David Coughlan, Jeffrey Kuramoto, and Stephen Boyd are adapting the multimedia art installation Screen from the four-wall cave system at Brown University to the StarCAVE. This piece was displayed at SIGGRAPH 2007 and was the first virtual reality application to be demoed in the StarCAVE. It was also displayed at the Beall Center at UC Irvine in the fall of 2007. For this purpose, it was ported to a single stereo wall display.

Children's Hospital (Jurgen Schulze, 2007)

From our collaboration with Dr. Peter Newton of San Diego's Children's Hospital, we have a few computed tomography (CT) data sets of children's upper bodies showing irregularities of their spines.

Super Browser (Vinh Huynh, Andrew Prudhomme, 2006)

Cell Structures (Iman Mostafavi, 2006)

Terashake Volume Visualization (Jurgen Schulze, 2006)

As part of the NSF-funded Optiputer project, Jurgen visualized part of the 4.5-terabyte TeraShake earthquake data set on the 100-megapixel LambdaVision display at Calit2. For this project, he integrated his volume visualization tool VOX into EVL's SAGE.

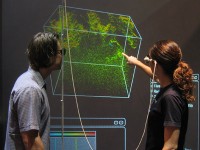

Protein Visualization (Philip Weber, Andrew Prudhomme, Krishna Subramanian, Sendhil Panchadsaram, 2005-2009)

A VR application to view protein structures from UCSD Professor Philip Bourne's Protein Data Bank (PDB). The popular molecular biology toolkit PyMol is used to create the 3D models of the PDB files. Our application also supports protein alignment, an amino acid sequence viewer, integration of TOPSAN annotations, and a variety of visualization modes. Users of this application include UC Riverside (Peter Atkinson), UCSD Pharmacy (Zoran Radic), and the Scripps Research Institute (James Fee/Jon Huntoon).

Earthquake Visualization (Jurgen Schulze, 2005)

Along with Debi Kilb from the Scripps Institution of Oceanography (SIO), we visualized 3D earthquake locations on a world-wide scale.