Fringe Physics


Project Overview

The concept of this project was to create a room with a physics-enabled ballpit in its center. The user would be able to interact with the balls by picking them up and moving them to various stations. These stations could be anything from antigravity fields and seesaws to trampolines and infinitely dense miniplanets, limited only by the designer's creativity. The next iteration of the project involved designing the mechanics of a Rube Goldberg machine puzzle, in which the user rearranges pieces in order to get spheres into a bucket.

Project Goals

- Integrate the Bullet Engine with OpenSceneGraph by linking the two worlds

- Encapsulate a system of object creation and physics handling

- Enable user interactions using OSG intersection testing

- Integrate system with zSpace for enhanced immersion

- Allow for puzzle solving elements

- Integrate custom geometry into the Bullet simulation

Developers

Software Developer

- Robert Maloney

Project Adviser

- Jurgen Schulze

Technologies

- CalVR

- OpenSceneGraph v3.0.1

- Bullet Engine v2.8.1

- ZSpace

First Iteration

Basic Scene

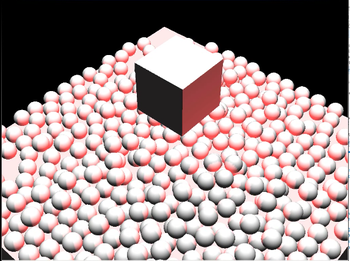

The first step was to create a scene with primitive objects for initial testing: a small box to serve as the manipulated object, and a larger box, shifted down by its half-length so that its top face sits at ground level, to serve as the ground. Once this scene was in place, the physics engine had to be integrated.

The Bullet Engine is entirely separate from OpenSceneGraph, but the two frameworks are similar enough that connecting them is straightforward. By creating a static physics box matching the ground box, and a kinematic box matching the smaller rendered box, we can simulate physics by synchronizing the matrices of the two worlds. I also allowed the physics box to be moved with the arrow keys and spacebar, which makes the rendered box slide and hop.
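As a rough, library-free illustration of the sync loop described above, the sketch below steps a toy physics box under gravity, resolves contact with a ground plane, and copies the result into an OSG-style 4x4 matrix each frame. Everything here (`Body`, `step`, `hop`, `slide`, `toRenderMatrix`, a z-up world, the gravity value) is a hypothetical stand-in, not Bullet's or the project's actual API; in the real project Bullet does the stepping and the matrices come from the Bullet and OSG objects.

```cpp
#include <array>

// Hypothetical stand-ins: a physics body and an OSG-style 4x4 matrix.
struct Vec3 { double x, y, z; };

struct Body {
    Vec3 pos;           // box center in physics space (z is up)
    Vec3 vel;
    double halfHeight;  // half the box's height
};

// Row-major, translation stored in the last row, matching OSG's layout.
using Matrix4 = std::array<double, 16>;

// One fixed step: apply gravity, integrate, then resolve contact with a
// static ground whose top face lies at z = 0.
void step(Body& b, double dt, double gravity = -9.8) {
    b.vel.z += gravity * dt;
    b.pos.x += b.vel.x * dt;
    b.pos.y += b.vel.y * dt;
    b.pos.z += b.vel.z * dt;
    if (b.pos.z < b.halfHeight) {  // sank into the ground: rest on top of it
        b.pos.z = b.halfHeight;
        b.vel.z = 0.0;
    }
}

// "Spacebar": an upward velocity change, allowed only while resting on the ground.
void hop(Body& b, double speed) {
    if (b.pos.z <= b.halfHeight) b.vel.z = speed;
}

// "Arrow keys": set the horizontal sliding velocity.
void slide(Body& b, double vx, double vy) {
    b.vel.x = vx;
    b.vel.y = vy;
}

// Per-frame sync: copy the physics transform into the rendered node's matrix
// (translation only, since this toy body never rotates).
Matrix4 toRenderMatrix(const Body& b) {
    return {1, 0, 0, 0,
            0, 1, 0, 0,
            0, 0, 1, 0,
            b.pos.x, b.pos.y, b.pos.z, 1};
}
```

The key design point is one-way flow: the physics side owns the state, and the rendered side only ever receives a copied matrix.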

Once this basic simulation worked, the next step was to build a system for generating objects from a few key parameters. This system completely handles the rendering and updating of its objects by linking the rendered and physical worlds, and simply returns Nodes to add to the scene. It is split into an ObjectFactory and a BulletHandler: the ObjectFactory creates the rendered object according to the user's parameters, then signals the BulletHandler to create its physical counterpart. Before every frame, the rendered world's matrices are synced with the physical world.
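One way to picture the ObjectFactory/BulletHandler split is the sketch below. The class names follow the text, but their members (`addBox`, `add`, `stepSimulation`, `syncToScene`) and the toy `RenderNode`/`PhysicsBody` types are hypothetical; the real classes wrap OSG nodes and Bullet rigid bodies, and the drift-only "simulation" is a placeholder for Bullet's step.

```cpp
#include <cstddef>
#include <memory>
#include <utility>
#include <vector>

struct Vec3 { double x, y, z; };

// Rendered side: what the factory hands back for insertion into the scene graph.
struct RenderNode {
    Vec3 translation{0, 0, 0};
};

// Physics side: the counterpart owned by the handler.
struct PhysicsBody {
    Vec3 position;
    Vec3 velocity;
    bool dynamic;
};

class BulletHandler {
public:
    // Registers a physics counterpart and remembers which node mirrors it.
    void add(const PhysicsBody& body, std::shared_ptr<RenderNode> node) {
        bodies_.push_back(body);
        nodes_.push_back(std::move(node));
    }
    // Placeholder for the physics step: dynamic bodies simply drift.
    void stepSimulation(double dt) {
        for (auto& b : bodies_) {
            if (!b.dynamic) continue;
            b.position.x += b.velocity.x * dt;
            b.position.y += b.velocity.y * dt;
            b.position.z += b.velocity.z * dt;
        }
    }
    // Called before every frame: copy physics transforms onto the rendered nodes.
    void syncToScene() {
        for (std::size_t i = 0; i < bodies_.size(); ++i)
            nodes_[i]->translation = bodies_[i].position;
    }
private:
    std::vector<PhysicsBody> bodies_;
    std::vector<std::shared_ptr<RenderNode>> nodes_;
};

class ObjectFactory {
public:
    explicit ObjectFactory(BulletHandler& h) : handler_(h) {}
    // Creates the rendered object, asks the handler for its physical twin,
    // and returns only the node, so callers never touch the physics world.
    std::shared_ptr<RenderNode> addBox(Vec3 pos, Vec3 vel, bool dynamic) {
        auto node = std::make_shared<RenderNode>();
        node->translation = pos;
        handler_.add(PhysicsBody{pos, vel, dynamic}, node);
        return node;
    }
private:
    BulletHandler& handler_;
};
```

A typical frame would call `stepSimulation` and then `syncToScene`, while scene-building code only ever sees the returned nodes.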

To test what the Bullet Engine could handle and to prototype the ballpit, I simulated a volume of 400 spheres. Once the simulation begins, the spheres disperse and fill the low end of the scene. An invisible wall contains the objects within the scene.
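A minimal sketch of the containment idea, assuming the invisible wall is an axis-aligned box: each sphere that crosses a wall is pushed back inside and has its velocity along that axis reflected and damped. The function names, the restitution value of 0.6, and the random spawn ranges are illustrative assumptions; sphere-to-sphere contacts, which Bullet handles in the real scene, are deliberately left out.

```cpp
#include <random>
#include <vector>

struct Sphere { double p[3]; double v[3]; };

// Invisible walls as an axis-aligned box [lo, hi]: a sphere that crosses a wall
// is pushed back inside and its velocity along that axis is reflected and damped.
void containInBox(Sphere& s, const double lo[3], const double hi[3],
                  double radius, double restitution) {
    for (int a = 0; a < 3; ++a) {
        if (s.p[a] - radius < lo[a]) { s.p[a] = lo[a] + radius; s.v[a] = -restitution * s.v[a]; }
        if (s.p[a] + radius > hi[a]) { s.p[a] = hi[a] - radius; s.v[a] = -restitution * s.v[a]; }
    }
}

// Spawn a clump of spheres with random positions and velocities.
std::vector<Sphere> spawnSpheres(int count, unsigned seed) {
    std::mt19937 rng(seed);
    std::uniform_real_distribution<double> pos(-1.0, 1.0), vel(-3.0, 3.0);
    std::vector<Sphere> out(count);
    for (auto& s : out)
        for (int a = 0; a < 3; ++a) { s.p[a] = pos(rng); s.v[a] = vel(rng); }
    return out;
}

// One step: gravity along -z, integrate, then enforce the walls.
// Sphere-sphere contacts are ignored in this sketch.
void stepAll(std::vector<Sphere>& spheres, double dt, const double lo[3],
             const double hi[3], double radius) {
    for (auto& s : spheres) {
        s.v[2] -= 9.8 * dt;
        for (int a = 0; a < 3; ++a) s.p[a] += s.v[a] * dt;
        containInBox(s, lo, hi, radius, 0.6);
    }
}
```

With damping on every wall hit, the spheres lose vertical energy on each bounce and end up collected near the floor, which is the "filling the low end" behavior in miniature.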

Antigravity Field

The next step of the project involved creating the stations. My first station was the antigravity field, because the first thing I wanted to do with a physics engine was defy it. The Antigravity Field revolves around a special object in the Bullet Engine, the btGhostObject. Unlike the spheres, it does not apply impulses to objects that collide with it, but it still tracks which objects overlap its axis-aligned bounding box (AABB).

By attaching a Vec3 gravity vector to the btGhostObject, I was able to create a basic Antigravity Field (AGF). On every frame, each AGF checks for overlapping objects and applies its gravity vector to every dynamic object inside its AABB. To create the illusion of ordinary gravity outside these fields, there is actually an AGF encompassing all the others. Coupled with a hierarchical ordering of the fields, this gives a deterministic way of deciding which gravity applies to each object. In future iterations, I plan to change the AGFs to add to gravity instead of overwriting it.
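The hierarchical rule can be sketched as follows, under the assumption that "hierarchical" means fields are visited from the outermost (world-sized) field inward, so the deepest field containing an object has the final say. The `depth` member, `sortByDepth`, and the `additive` flag are hypothetical; the flag shows the "add instead of write" variant mentioned as future work. Containment here is a plain AABB test rather than Bullet's ghost-object overlap list.

```cpp
#include <algorithm>
#include <vector>

struct Vec3 { double x, y, z; };

struct AABB {
    Vec3 min, max;
    bool contains(const Vec3& p) const {
        return p.x >= min.x && p.x <= max.x &&
               p.y >= min.y && p.y <= max.y &&
               p.z >= min.z && p.z <= max.z;
    }
};

// A field pairs a volume with a gravity vector; depth is its nesting level
// (0 = the world-sized field that encloses all others).
struct AntigravityField {
    AABB volume;
    Vec3 gravity;
    int depth;
};

// Outer fields first, so inner fields are visited later and can override them.
void sortByDepth(std::vector<AntigravityField>& fields) {
    std::stable_sort(fields.begin(), fields.end(),
        [](const AntigravityField& a, const AntigravityField& b) { return a.depth < b.depth; });
}

// "Write" mode: the deepest containing field replaces gravity.
// "Add" mode: the gravity vectors of all containing fields are summed.
Vec3 gravityFor(const Vec3& p, const std::vector<AntigravityField>& fields, bool additive) {
    Vec3 g{0, 0, 0};
    for (const auto& f : fields) {
        if (!f.volume.contains(p)) continue;
        if (additive) { g.x += f.gravity.x; g.y += f.gravity.y; g.z += f.gravity.z; }
        else g = f.gravity;
    }
    return g;
}
```

Sorting once by depth is what makes the result deterministic: the outcome no longer depends on the order in which fields happened to be created.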

Seesaw

The second station I created was a seesaw, which is quite simple with the Bullet Engine. The engine lets us define a hinge constraint that limits the object's rotation to a single axis. To avoid defining the hinge axis by hand for each object, the ObjectFactory derives it from the object's dimensions, placing the hinge along whichever of the x and y dimensions is smaller.
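A small sketch of that automatic hinge choice, assuming z is up, the pivot is the object's center, and the axis runs along the shorter of the x and y extents so the plank tips along its long dimension. `Hinge` and `seesawHinge` are hypothetical names; the resulting pivot and axis are the kind of local-frame values Bullet's `btHingeConstraint(rbA, pivotInA, axisInA)` constructor takes.

```cpp
struct Vec3 { double x, y, z; };

struct Hinge {
    Vec3 pivot;  // in the object's local frame
    Vec3 axis;   // unit rotation axis in the object's local frame
};

// Hypothetical rule: pivot at the center, axis along the shorter of the
// x and y extents, so the long dimension is the one that tips up and down.
Hinge seesawHinge(const Vec3& halfExtents) {
    Hinge h{{0, 0, 0}, {0, 0, 0}};
    if (halfExtents.x <= halfExtents.y) h.axis.x = 1.0;
    else h.axis.y = 1.0;
    return h;
}
```

For a plank that is long in x and narrow in y, this yields a y-axis hinge, which is the usual seesaw motion.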

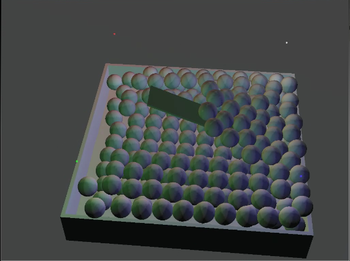

ZSpace and Interaction

The final step was to integrate the scene into zSpace, with interaction through the stylus. I originally attempted to solve this problem with the same btGhostObject I used for the AGFs, but that proved unsuccessful because of how the Bullet Engine handles collisions. I had happened to build the Antigravity Fields as AABBs, so the Bullet Engine handled them correctly as long as they were not rotated. However, the Bullet Engine relies on broadphase collision detection, which uses axis-aligned bounding volumes to quickly rule out object pairs that cannot collide. It also has a narrowphase for more accurate collisions, but ghost objects do not use it. Because the stylus is long, thin, and usually held at an angle, its bounding box was huge, so the algorithm picked up spheres that were merely inside that box, such as those straight down from the stylus origin.
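The problem with a bounding-box-only test is easy to reproduce numerically. The sketch below computes the axis-aligned box around a thin, angled stylus segment and shows that a point directly below the stylus origin lies inside that box even though it is far from the segment itself. The function names and the specific coordinates are illustrative assumptions, not Bullet code.

```cpp
#include <algorithm>
#include <cmath>

struct Vec3 { double x, y, z; };
struct AABB { Vec3 min, max; };

// Axis-aligned box enclosing a thin stylus segment a->b of the given radius.
// A test based only on bounding boxes sees this box, not the segment.
AABB boundsOfSegment(const Vec3& a, const Vec3& b, double radius) {
    return {{std::min(a.x, b.x) - radius, std::min(a.y, b.y) - radius, std::min(a.z, b.z) - radius},
            {std::max(a.x, b.x) + radius, std::max(a.y, b.y) + radius, std::max(a.z, b.z) + radius}};
}

bool contains(const AABB& box, const Vec3& p) {
    return p.x >= box.min.x && p.x <= box.max.x &&
           p.y >= box.min.y && p.y <= box.max.y &&
           p.z >= box.min.z && p.z <= box.max.z;
}

// Exact distance from p to segment a->b: the kind of test a narrowphase performs.
double distanceToSegment(const Vec3& p, const Vec3& a, const Vec3& b) {
    Vec3 ab{b.x - a.x, b.y - a.y, b.z - a.z};
    Vec3 ap{p.x - a.x, p.y - a.y, p.z - a.z};
    double len2 = ab.x * ab.x + ab.y * ab.y + ab.z * ab.z;
    double t = len2 > 0.0 ? (ap.x * ab.x + ap.y * ab.y + ap.z * ab.z) / len2 : 0.0;
    t = std::max(0.0, std::min(1.0, t));  // clamp to the segment
    Vec3 q{a.x + t * ab.x - p.x, a.y + t * ab.y - p.y, a.z + t * ab.z - p.z};
    return std::sqrt(q.x * q.x + q.y * q.y + q.z * q.z);
}
```

For a stylus from (0, 0, 0) to (1, 1, -1), the point (0, 0, -1) is inside the bounding box yet about 0.82 units from the segment, so an AABB-only overlap would wrongly count it as touching.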

To solve this, I switched to an OSG implementation, the IntersectVisitor. It lets me define a line segment along the center of the stylus, send that segment through the entire scene graph, and get back all nodes that intersect it. Combining this with a closest-object search allowed me to select the object I wanted to grab. To preserve the object's location relative to the stylus, I saved the offset in a vector and reapplied it every time the stylus's position and rotation were updated. This gives the user a realistic feel of picking up objects, rather than having them teleport to a predefined point on the stylus. To help distinguish the grabbed object from the rest, the selected object turns black for the duration of the grab.
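The segment test plus closest-object search can be sketched without OSG by intersecting the stylus segment with analytic spheres and keeping the hit nearest the tip. This is a stand-in for what osgUtil's intersection visitor returns, not its API; `SphereObj`, `intersectSegment`, and `pickClosest` are hypothetical names, and real scene nodes would be tested against their geometry rather than perfect spheres.

```cpp
#include <cmath>
#include <vector>

struct Vec3 { double x, y, z; };
struct SphereObj { Vec3 center; double radius; };

double dot(const Vec3& a, const Vec3& b) { return a.x * b.x + a.y * b.y + a.z * b.z; }
Vec3 sub(const Vec3& a, const Vec3& b) { return {a.x - b.x, a.y - b.y, a.z - b.z}; }

// Parameter t in [0, 1] where segment a->b first touches the sphere, or -1 on a miss.
double intersectSegment(const Vec3& a, const Vec3& b, const SphereObj& s) {
    Vec3 d = sub(b, a), m = sub(a, s.center);
    double A = dot(d, d), B = 2.0 * dot(m, d), C = dot(m, m) - s.radius * s.radius;
    if (C <= 0.0) return 0.0;              // segment starts inside the sphere
    double disc = B * B - 4.0 * A * C;
    if (A == 0.0 || disc < 0.0) return -1.0;
    double t = (-B - std::sqrt(disc)) / (2.0 * A);  // nearer root of the quadratic
    return (t >= 0.0 && t <= 1.0) ? t : -1.0;
}

// Closest-object search: among every object the segment hits, keep the one
// nearest the stylus tip (smallest t). Returns -1 if nothing is hit.
int pickClosest(const Vec3& a, const Vec3& b, const std::vector<SphereObj>& objects) {
    int best = -1;
    double bestT = 2.0;
    for (int i = 0; i < static_cast<int>(objects.size()); ++i) {
        double t = intersectSegment(a, b, objects[i]);
        if (t >= 0.0 && t < bestT) { bestT = t; best = i; }
    }
    return best;
}
```

Because every intersected node is returned, the explicit "smallest t" pass is what prevents grabbing an object hidden behind the one the user is pointing at.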

Second Iteration

The Puzzle Solver

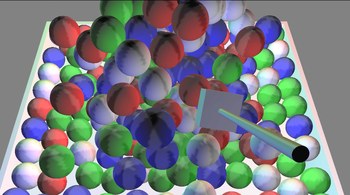

Our next objective was to use this new functionality to implement a minigame: the user moves ramps and other pieces into a formation that guides spheres into a bucket at the end of the map.

Refactoring

My first step was to refactor some of the naive code from the first iteration. In particular, I was repeating the exact same block of code whenever I created a new physics object; encapsulating that functionality cut the BulletHandler by 50 lines. I also rebuilt some of the composite objects, such as the hollow box and the ball pit, from a series of boxes instead of constructing their triangles from scratch. This also helped when OSG was updated, because the update introduced new compilation errors from deprecated functions.
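Building a composite from boxes mostly comes down to computing each piece's center and half-extents. The sketch below does this for an open-topped hollow box (a floor plus four walls), assuming z is up and the walls along y fit between the walls along x so nothing overlaps. `Box` and `hollowBox` are hypothetical; in the project each piece would become a box shape in both OSG and Bullet.

```cpp
#include <vector>

struct Vec3 { double x, y, z; };
struct Box { Vec3 center; Vec3 halfExtents; };

// Open-topped hollow box built from five solid boxes, given the outer
// center c, outer half-extents h, and wall thickness t (z is up).
std::vector<Box> hollowBox(const Vec3& c, const Vec3& h, double t) {
    double ht = t / 2.0;
    double wallH = h.z - ht;  // walls span from the top of the floor to the rim
    double wallZ = c.z + ht;  // center height of each wall
    return {
        {{c.x, c.y, c.z - h.z + ht}, {h.x, h.y, ht}},          // floor
        {{c.x - h.x + ht, c.y, wallZ}, {ht, h.y, wallH}},      // -x wall
        {{c.x + h.x - ht, c.y, wallZ}, {ht, h.y, wallH}},      // +x wall
        {{c.x, c.y - h.y + ht, wallZ}, {h.x - t, ht, wallH}},  // -y wall, between the x walls
        {{c.x, c.y + h.y - ht, wallZ}, {h.x - t, ht, wallH}},  // +y wall, between the x walls
    };
}
```

A quick sanity check is that the pieces' total volume equals the outer volume minus the open cavity, which only holds when no two pieces overlap.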

Collision Filtering

One of the first new features of this iteration was collision filtering. To make the puzzle easier to solve, I decided to restrict the game-winning spheres to a volume with a small depth. This also prevents users from simply grabbing spheres and dropping them into the win bucket, and lets them focus on an approximate plane of movement rather than a whole volume. To implement this, I used the collision filtering system in the Bullet Engine: when a rigid body is created, I specify its collision group and the mask of groups it will collide with. Using this, I was able to make invisible walls that restrict the movement of the spheres while allowing other objects, like ramps, to move freely within the space.
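The group/mask rule is a two-way bitwise check: two bodies interact only if each one's group appears in the other's mask. In Bullet the values are passed when a body is added to the world (`btDiscreteDynamicsWorld::addRigidBody(body, group, mask)`), and the default broadphase filter performs the same test. The sketch reproduces that test on its own; the specific group names and bit assignments are hypothetical choices for this puzzle.

```cpp
// Hypothetical group bits for the puzzle (one bit per kind of object).
enum CollisionGroup : int {
    COL_SPHERE = 1 << 0,  // game-winning spheres
    COL_WALL   = 1 << 1,  // invisible walls that keep spheres in a thin slab
    COL_PIECE  = 1 << 2,  // ramps and other movable pieces
    COL_GROUND = 1 << 3,
};

struct Filter {
    int group;  // the group this body belongs to
    int mask;   // the groups it is willing to collide with
};

// Two bodies interact only if each one's group is in the other's mask.
bool shouldCollide(const Filter& a, const Filter& b) {
    return (a.group & b.mask) != 0 && (b.group & a.mask) != 0;
}

// Walls only stop spheres; ramps and other pieces pass straight through them.
const Filter kSphere{COL_SPHERE, COL_SPHERE | COL_WALL | COL_PIECE | COL_GROUND};
const Filter kWall{COL_WALL, COL_SPHERE};
const Filter kPiece{COL_PIECE, COL_SPHERE | COL_PIECE | COL_GROUND};
```

Because the check is symmetric, leaving `COL_PIECE` out of the wall's mask is enough to let ramps pass through, even though the pieces themselves do not exclude walls explicitly.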

Custom Geometry

To make the game more interesting to look at, and more fun, I decided to create a system for importing custom geometry into the scene. This was the most intricate problem of this iteration, because the ReadNode method in OSG does not give direct access to the triangle data. The solution was a NodeVisitor that traverses the node tree of a parsed file. Each drawable is passed into a TriangleFunctor, which reads its triangles, converts them to world space, and pushes them into a vector of triangles. The physics handler can then request this vector and construct a triangle mesh from the data.
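The traversal can be sketched without OSG: walk a node tree, accumulate each node's local matrix onto its parent's, and transform every triangle into world space before appending it to one flat vector. This is a library-free stand-in for the `osg::NodeVisitor` plus `osg::TriangleFunctor` combination; `Node`, `collectTriangles`, and the matrix helpers are hypothetical, and the matrices use OSG's row-vector convention (world = local * parent).

```cpp
#include <array>
#include <vector>

struct Vec3 { double x, y, z; };
using Mat4 = std::array<double, 16>;  // row-major, row-vector convention (p' = p * M), like OSG

Mat4 identity() { return {1, 0, 0, 0, 0, 1, 0, 0, 0, 0, 1, 0, 0, 0, 0, 1}; }

Mat4 translation(double x, double y, double z) {
    Mat4 m = identity();
    m[12] = x; m[13] = y; m[14] = z;
    return m;
}

Mat4 multiply(const Mat4& a, const Mat4& b) {  // apply a, then b
    Mat4 r{};
    for (int i = 0; i < 4; ++i)
        for (int j = 0; j < 4; ++j)
            for (int k = 0; k < 4; ++k) r[i * 4 + j] += a[i * 4 + k] * b[k * 4 + j];
    return r;
}

Vec3 transformPoint(const Vec3& p, const Mat4& m) {
    return {p.x * m[0] + p.y * m[4] + p.z * m[8] + m[12],
            p.x * m[1] + p.y * m[5] + p.z * m[9] + m[13],
            p.x * m[2] + p.y * m[6] + p.z * m[10] + m[14]};
}

struct Triangle { Vec3 v[3]; };

// Hypothetical stand-in for a loaded scene-graph node.
struct Node {
    Mat4 local = identity();
    std::vector<Triangle> triangles;  // local-space geometry (the "drawables")
    std::vector<Node> children;
};

// Visitor-style traversal: accumulate transforms down the tree and push every
// triangle, converted to world space, into one flat vector.
void collectTriangles(const Node& n, const Mat4& parentToWorld, std::vector<Triangle>& out) {
    Mat4 toWorld = multiply(n.local, parentToWorld);
    for (const auto& t : n.triangles)
        out.push_back({{transformPoint(t.v[0], toWorld),
                        transformPoint(t.v[1], toWorld),
                        transformPoint(t.v[2], toWorld)}});
    for (const auto& c : n.children) collectTriangles(c, toWorld, out);
}
```

The flat, world-space vector is exactly the form a physics mesh builder wants, since it no longer depends on the scene graph's structure.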

There are two classes in the Bullet Engine for building a collision shape from triangle data: btBvhTriangleMeshShape and btConvexTriangleMeshShape. btBvhTriangleMeshShape organizes the triangles in a bounding volume hierarchy, which allows efficient narrowphase collision detection against the full mesh, but it is meant for static, non-moving geometry, so the mesh cannot rotate locally. Another benefit was its sister class, btScaledBvhTriangleMeshShape, which made it much easier to scale the physics mesh alongside the rendered one. btConvexTriangleMeshShape allowed local rotation, but Bullet treats it as the convex hull of its vertices, so concave detail is lost and its collisions behaved little better than AABB detection. I ended up implementing a hybrid system based on my existing code: because I already passed a boolean specifying whether an object should be affected by gravity and other impulses, I used that boolean to choose between the two classes. In practice, accurate narrowphase collision detection matters more than local rotation, and it outweighs the loss of immersion when static custom objects simply float in the air.
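btConvexTriangleMeshShape is a convex shape, so Bullet collides against the convex hull of its vertices. The 2D sketch below shows what that costs for a bucket-like object: the hull of a U-shaped cross-section is a solid rectangle, so a point in the bucket's cavity counts as "inside" the collision shape. It uses Andrew's monotone chain hull on a simplified cross-section; the names and coordinates are illustrative assumptions, not Bullet code.

```cpp
#include <algorithm>
#include <cstddef>
#include <vector>

struct P2 { double x, z; };  // 2D cross-section: x horizontal, z up

double cross(const P2& o, const P2& a, const P2& b) {
    return (a.x - o.x) * (b.z - o.z) - (a.z - o.z) * (b.x - o.x);
}

// Andrew's monotone chain: convex hull in counter-clockwise order,
// with collinear points removed.
std::vector<P2> convexHull(std::vector<P2> pts) {
    std::sort(pts.begin(), pts.end(), [](const P2& a, const P2& b) {
        return a.x < b.x || (a.x == b.x && a.z < b.z);
    });
    if (pts.size() < 3) return pts;
    std::vector<P2> h(2 * pts.size());
    std::size_t k = 0;
    for (std::size_t i = 0; i < pts.size(); ++i) {              // lower hull
        while (k >= 2 && cross(h[k - 2], h[k - 1], pts[i]) <= 0) --k;
        h[k++] = pts[i];
    }
    for (std::size_t i = pts.size() - 1, t = k + 1; i-- > 0;) {  // upper hull
        while (k >= t && cross(h[k - 2], h[k - 1], pts[i]) <= 0) --k;
        h[k++] = pts[i];
    }
    h.resize(k - 1);  // last point repeats the first
    return h;
}

// Point-in-convex-polygon test for a counter-clockwise hull.
bool insideConvex(const std::vector<P2>& hull, const P2& p) {
    for (std::size_t i = 0; i < hull.size(); ++i)
        if (cross(hull[i], hull[(i + 1) % hull.size()], p) < 0) return false;
    return true;
}
```

With a U-shaped outline from (-1, 0) to (1, 2) and walls 0.1 thick, the hull collapses to the four outer corners, and the cavity point (0, 1) tests as inside, which is why a sphere could never settle inside such a bucket.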

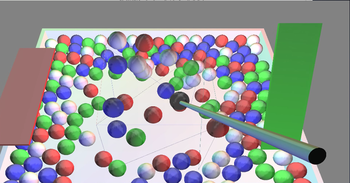

Grabbing Objects

The code I originally wrote to grab objects made a few assumptions that held only because I had complete control over the primitives. The first assumption was that the only matrix transformations came from the camera and from the individual objects. After importing custom geometry, however, it became apparent that there was a longer chain of matrices between the intersection hit data and the object's matrix. This caused problems both when I derived the offset between the intersection point and the object's origin and when I looked up the corresponding physics object. The second assumption was that every hit object had unit scale. This was fine in the first iteration, because I could generate shapes of any size algorithmically, but custom geometry required scaling. The hit data returned an intersection at unit scale, which caused objects to jerk to a point inside their volume when grabbed. The final assumption, again related to custom geometry, was that the method only needed to grab ShapeDrawables; it would not even attempt to pick up Geometry nodes.

Ultimately, all of the code after the initial hit had to be reworked, but the new implementation is much more robust. On a grab, it takes the object's matrix in world space, multiplies it by the inverse of the hand matrix, and stores the result in a single matrix called grabbedMatrixOffset. This is a "delta" matrix: the transform of the object relative to the stylus. Whenever the hand is updated, this matrix is multiplied by the new hand matrix, which yields the object's new matrix in world space. Finally, this matrix is transformed into camera space.
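The delta-matrix idea can be checked with plain 4x4 arithmetic in OSG's row-vector convention: offset = objectWorld * inverse(hand), then objectWorld' = offset * newHand. The sketch assumes the hand matrix is rigid (rotation plus translation), so its inverse is a transpose plus a translation fix-up. The final `worldToCamera` factor is a hypothetical parameter standing in for whatever inverse transform takes world space into the space the objects live in.

```cpp
#include <array>
#include <cmath>

using Mat4 = std::array<double, 16>;  // row-major, row-vector convention (p' = p * M), as in OSG

Mat4 multiply(const Mat4& a, const Mat4& b) {  // apply a, then b
    Mat4 r{};
    for (int i = 0; i < 4; ++i)
        for (int j = 0; j < 4; ++j)
            for (int k = 0; k < 4; ++k) r[i * 4 + j] += a[i * 4 + k] * b[k * 4 + j];
    return r;
}

Mat4 translation(double x, double y, double z) {
    return {1, 0, 0, 0, 0, 1, 0, 0, 0, 0, 1, 0, x, y, z, 1};
}

Mat4 rotationZ(double rad) {
    double c = std::cos(rad), s = std::sin(rad);
    return {c, s, 0, 0, -s, c, 0, 0, 0, 0, 1, 0, 0, 0, 0, 1};
}

// Inverse of a rigid transform [R 0; t 1]: [R^T 0; -t R^T 1].
Mat4 rigidInverse(const Mat4& m) {
    Mat4 r{};
    for (int i = 0; i < 3; ++i)
        for (int j = 0; j < 3; ++j) r[i * 4 + j] = m[j * 4 + i];  // transpose rotation
    for (int j = 0; j < 3; ++j)
        r[12 + j] = -(m[12] * r[j] + m[13] * r[4 + j] + m[14] * r[8 + j]);
    r[15] = 1.0;
    return r;
}

// On grab: the object's pose relative to the hand (the "delta" matrix).
Mat4 grabOffset(const Mat4& objectWorld, const Mat4& handWorld) {
    return multiply(objectWorld, rigidInverse(handWorld));
}

// On every hand update: re-apply the offset, then move into camera space.
Mat4 followHand(const Mat4& offset, const Mat4& newHandWorld, const Mat4& worldToCamera) {
    return multiply(multiply(offset, newHandWorld), worldToCamera);
}
```

Because the offset is a full matrix rather than a position vector, rotating the stylus swings the object around the grab point instead of only translating it, and any scale in the object's own matrix is carried through unchanged.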