From Immersive Visualization Lab Wiki

Revision as of 23:02, 20 September 2012

Contents

- 1 Past Projects

- 2 Active Projects

- 2.1 AndroidAR (Kristian Hansen, Mads Pedersen, 2012)

- 2.2 ArtifactVis with Android Device (Sahar Aseeri, 2012)

- 2.3 Bubble Menu (Cathy Hughes, 2012-)

- 2.4 Multi-User Graphics with Interactive Control (MUGIC) (Nadia Zeng, 2012-)

- 2.5 Multi-user virtual reality on mobile phones (James Lue, 2012-)

- 2.6 Mobile Old Town Osaka Viewer (Sumin Wang, 2012-)

- 2.7 3D Chromosome Viewer (Yixin Zhu, 2012-)

- 2.8 CameraFlight (William Seo, 2012-)

- 2.9 AppGlobe Infrastructure (Chris McFarland, Philip Weber, 2012)

- 2.10 GUI Sketching Tool (Cathy Hughes, Andrew Prudhomme, 2012)

- 2.11 San Diego Wildfires (Philip Weber, Jessica Block, 2011)

- 2.12 PanoView360 (Andrew Prudhomme, Dan Sandin, 2010-)

- 2.13 ArtifactVis (Kyle Knabb, Jurgen Schulze, Connor DeFanti, 2008-)

- 2.14 OpenAL Audio Server (Shreenidhi Chowkwale, Summer 2011)

- 2.15 GreenLight BlackBox 2.0 (John Mangan, Alfred Tarng, 2011-)

- 2.16 VOX and Virvo (Jurgen Schulze, 1999-)


Active Projects

AndroidAR (Kristian Hansen, Mads Pedersen, 2012)

|

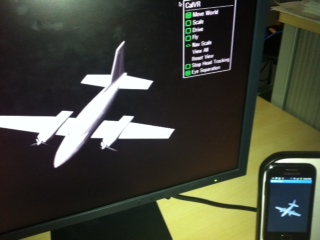

Tracked Android phones are used to view 3D models and to draw in 3D. |

ArtifactVis with Android Device (Sahar Aseeri, 2012)

In this project, an Android device is used to control the ArtifactVis application and to view images of the artifacts on the mobile device.

|

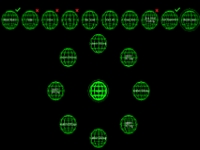

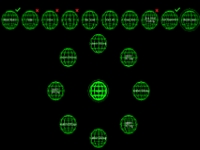

The purpose of this project is to create a new 3D user interface designed to be controlled using a Microsoft Kinect. The new menu system is based on wireframe spheres designed to be selected using gestures, and it features animated menu transitions, customizable menus, and sound effects. |

|

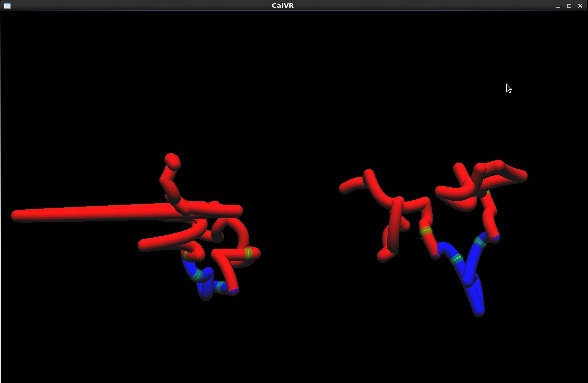

This project allows artists without programming background to render images with CalVR, essentially allowing them to express their artworks and creativity in a much larger scale. |

|

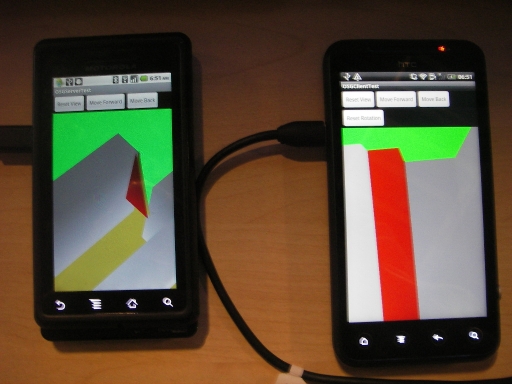

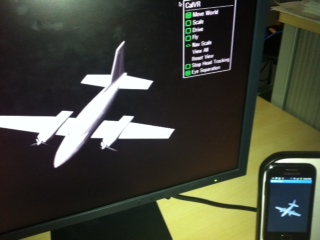

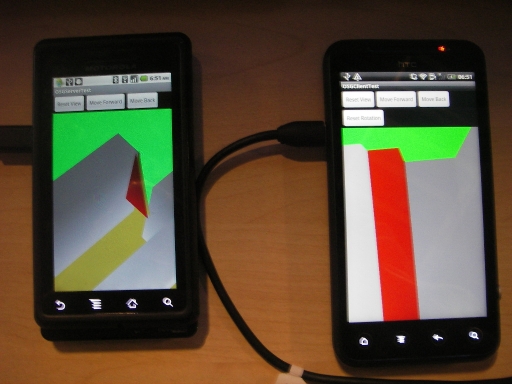

This project is based on android phones. Users can navigate in a virtual world in the phone and see other users in the virtual world. They can interact with the objects (such as opening doors) in the scene and other users can see the actions The phones are connected by wireless network. Currently it only can support 2 phones to connect to each other |

Mobile Old Town Osaka Viewer (Sumin Wang, 2012-)

This project aims to create an Android-based viewer, similar to Google Street View, which allows the user to view a 3D model of old town Osaka, Japan. We collaborate with the Cybermedia Institute of Osaka University on this project.

|

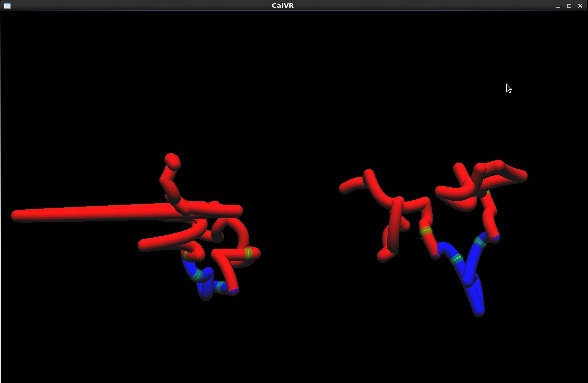

The purpose of this project is to create a three-dimensional chromosome viewer to facilitate the visualization of intra-chromosomal interactions, genomic features and their relationships, and the discovery of new genes. |

CameraFlight (William Seo, 2012-)

This project's goal is to create automatic camera flights from one place to another in osgEarth.
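As an illustration of what an automatic camera flight between two places involves, the following is a minimal sketch that interpolates along the great circle between two locations and raises the camera in the middle of the flight. It does not use the osgEarth API and is not the project's implementation: the function names, the sine-shaped altitude profile, and the `cruise_alt_m` parameter are assumptions made for this example.

```python
import math

def to_unit_vector(lat_deg, lon_deg):
    """Convert geographic coordinates to a unit vector on the sphere."""
    lat, lon = math.radians(lat_deg), math.radians(lon_deg)
    return (math.cos(lat) * math.cos(lon),
            math.cos(lat) * math.sin(lon),
            math.sin(lat))

def to_lat_lon(v):
    """Convert a unit vector back to (latitude, longitude) in degrees."""
    x, y, z = v
    return (math.degrees(math.asin(max(-1.0, min(1.0, z)))),
            math.degrees(math.atan2(y, x)))

def flight_position(start, end, t, cruise_alt_m=100000.0):
    """Camera position at parameter t in [0, 1] along a great-circle flight.

    start and end are (lat_deg, lon_deg, alt_m) tuples. The altitude is
    blended linearly and raised by a sine bump that peaks at t = 0.5
    (a hypothetical profile). Antipodal endpoints are not handled.
    """
    a = to_unit_vector(start[0], start[1])
    b = to_unit_vector(end[0], end[1])
    dot = max(-1.0, min(1.0, sum(p * q for p, q in zip(a, b))))
    omega = math.acos(dot)  # angle between the two points
    if omega < 1e-9:
        lat, lon = start[0], start[1]  # endpoints coincide: no horizontal motion
    else:
        # Spherical linear interpolation (slerp) between the two unit vectors.
        wa = math.sin((1.0 - t) * omega) / math.sin(omega)
        wb = math.sin(t * omega) / math.sin(omega)
        lat, lon = to_lat_lon(tuple(wa * p + wb * q for p, q in zip(a, b)))
    alt = (1.0 - t) * start[2] + t * end[2] + cruise_alt_m * math.sin(math.pi * t)
    return lat, lon, alt
```

Calling `flight_position` once per frame with `t` increasing from 0 to 1 yields a sequence of camera positions; a real flight would also need to interpolate the viewing direction and ease `t` in and out.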

AppGlobe Infrastructure (Chris McFarland, Philip Weber, 2012)

This project's goal is to create an application switcher for osgEarth-based CalVR plugins.

GUI Sketching Tool (Cathy Hughes, Andrew Prudhomme, 2012)

This project's goal is to develop a 3D sketching tool for a VR GUI.

San Diego Wildfires (Philip Weber, Jessica Block, 2011)

The Cedar Fire was a human-caused wildfire that destroyed a large number of buildings and infrastructure in San Diego County in October 2003. This application shows high-resolution aerial data of the areas affected by the fires. The data resolution is extremely high at 0.5 meters for the imagery and 2 meters for elevation. Our demonstration shows this data embedded into an osgEarth-based visualization framework, which allows adding such data to any place on our planet and viewing it in a way similar to Google Earth, but with full support for high-end visualization systems.

PanoView360 (Andrew Prudhomme, Dan Sandin, 2010-)

Researchers at UIC/EVL and UCSD/Calit2 have developed a method to acquire very high-resolution surround and stereo panorama images using dual SLR cameras. This VR application allows viewing these roughly gigabyte-sized images in real time and supports real-time changes of the viewing direction and zooming.

ArtifactVis (Kyle Knabb, Jurgen Schulze, Connor DeFanti, 2008-)

For the past ten years, a joint University of California, San Diego and Department of Antiquities of Jordan research team led by Professor Tom Levy and Dr. Mohammad Najjar has been investigating the role of mining and metallurgy in social evolution from the Neolithic period (ca. 7500 BC) to medieval Islamic times (ca. 12th century AD). Kyle Knabb has been working with the IVL as a master's student under Professor Thomas Levy from the archaeology department. He created a 3D visualization for the StarCAVE which displays several excavation sites in Jordan, along with artifacts found there and radiocarbon dating sites. The data resides in a PostgreSQL database with the PostGIS extension, which the VR application queries at run-time.
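To give a sense of how an application might pull spatial data from a PostGIS database at run-time, here is a minimal sketch that builds a parameterized bounding-box query. The table name `artifacts` and its columns (`id`, `name`, and a point geometry column `geom`) are hypothetical, since the actual ArtifactVis schema is not described here. `ST_MakeEnvelope`, `ST_X`, `ST_Y`, and the `&&` bounding-box operator are standard PostGIS features.

```python
def artifacts_in_bbox_query(min_lon, min_lat, max_lon, max_lat, srid=4326):
    """Build a parameterized PostGIS query for artifacts inside a bounding box.

    The table "artifacts" and its columns are hypothetical; geom is assumed
    to be a point geometry stored in the given SRID.
    """
    sql = (
        "SELECT id, name, ST_X(geom) AS lon, ST_Y(geom) AS lat "
        "FROM artifacts "
        "WHERE geom && ST_MakeEnvelope(%s, %s, %s, %s, %s)"
    )
    return sql, (min_lon, min_lat, max_lon, max_lat, srid)
```

With a DB-API driver that uses `%s` placeholders, such as psycopg2, the returned pair can be passed directly to `cursor.execute(sql, params)`. Keeping the values out of the SQL string avoids quoting problems.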

OpenAL Audio Server (Shreenidhi Chowkwale, Summer 2011)

A Linux-based audio server that uses the OpenAL API to deliver surround sound to virtual visualization environments.

GreenLight BlackBox 2.0 (John Mangan, Alfred Tarng, 2011-)

The SUN Mobile Data Center at UCSD has been equipped with a myriad of sensors within the NSF-funded GreenLight project. This virtual reality application attempts to convey the sensor information to the user while retaining the spatial information inherent in the data through the arrangement of hardware in the data center. The application connects online to a database that collects all sensor information as it becomes available and stores a complete history of it. The VR application can show the current energy consumption and temperatures of the hardware in the container, but it can also be used to query arbitrary time windows in the past.
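Querying an arbitrary time window from a sensor history can be sketched as follows. This is an illustration only: it uses an in-memory SQLite table as a stand-in for the project's database, and the schema (`sensor_id`, a Unix timestamp `ts`, and a measured `value`) and the sensor names are assumptions.

```python
import sqlite3

def readings_in_window(conn, sensor_id, start_ts, end_ts):
    """Return (timestamp, value) rows for one sensor with start_ts <= ts < end_ts."""
    cur = conn.execute(
        "SELECT ts, value FROM readings "
        "WHERE sensor_id = ? AND ts >= ? AND ts < ? ORDER BY ts",
        (sensor_id, start_ts, end_ts),
    )
    return cur.fetchall()

# Stand-in for the project's database: an in-memory SQLite table with a
# hypothetical schema (sensor_id, Unix timestamp, measured value).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE readings (sensor_id TEXT, ts INTEGER, value REAL)")
conn.executemany(
    "INSERT INTO readings VALUES (?, ?, ?)",
    [("rack1_temp", 100, 21.5), ("rack1_temp", 200, 22.0),
     ("rack1_temp", 300, 23.5), ("rack2_temp", 200, 19.0)],
)
window = readings_in_window(conn, "rack1_temp", 150, 350)
```

The half-open interval (`ts >= start` and `ts < end`) means adjacent windows never return the same reading twice, which is convenient when stepping through the history in fixed increments.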

|

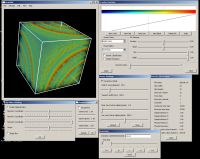

Ongoing development of real-time volume rendering algorithms for interactive display at the desktop (DeskVOX) and in virtual environments (CaveVOX). Virvo is name for the GUI independent, OpenGL based volume rendering library which both DeskVOX and CaveVOX use. |
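For readers unfamiliar with volume rendering, the core idea of blending samples along a viewing ray can be sketched in a few lines. This is a generic front-to-back compositing example with early ray termination, not Virvo's implementation. The function name, the scalar intensity (instead of RGB color), and the `opacity_cutoff` parameter are assumptions made for this illustration.

```python
def composite_ray(samples, opacity_cutoff=0.99):
    """Front-to-back alpha compositing of (intensity, opacity) samples along one ray.

    samples are ordered from the viewer into the volume. Returns the
    accumulated intensity and accumulated opacity.
    """
    color = 0.0
    alpha = 0.0
    for intensity, opacity in samples:
        # Each sample contributes only through what is still transparent.
        weight = (1.0 - alpha) * opacity
        color += weight * intensity
        alpha += weight
        if alpha >= opacity_cutoff:  # early ray termination
            break
    return color, alpha
```

For example, two samples `(1.0, 0.5)` and `(0.5, 0.5)` accumulate to an intensity of 0.625 and an opacity of 0.75: the second sample is weighted by the 50% of light that the first one lets through.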
