Discussion2S16


Overview

Week 2 discussion recap (04/04/16)

Slides: download here

The Problem with Rasterization

For homework 1 part 4, we're going to be rasterizing 3D points manually, as opposed to relying on OpenGL for it. What is rasterization? It's the process of taking a 3D scene description, and converting it into 2D image coordinates so that we can draw it on screen.

Let's say that we have a vertex p in 3D space at (2.5, 1.5, -1). In homogeneous coordinates we append a fourth component w = 1, so p is represented as (2.5, 1.5, -1, 1).
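As a minimal sketch of that conversion (the type aliases and the function name toHomogeneousPoint are made up for illustration and are not part of the homework code):

```cpp
#include <array>

using Vec3 = std::array<float, 3>;
using Vec4 = std::array<float, 4>;

// Hypothetical helper: append w = 1 to turn a 3D position into
// homogeneous coordinates. A 4x4 matrix can then translate it.
Vec4 toHomogeneousPoint(const Vec3& p) {
    return Vec4{{p[0], p[1], p[2], 1.0f}};
}
```

Calling toHomogeneousPoint on (2.5, 1.5, -1) gives (2.5, 1.5, -1, 1). If you use glm, glm::vec4(p, 1.0f) does the same job for a glm::vec3 p.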

Now when we try to find out what the pixel coordinates for this point would be, we immediately run into two issues:

- Our screen is a 2d coordinate system, but our point is in 3d!

- Where on the screen would (2.5, 1.5, -1) even be?

In short, we have a mismatch in coordinate systems!

How to Solve the Problem

The full equation for transforming from the 3D world (technically what we call object space) to 2D pixel coordinates (image space) is described in homework 1 and reprinted below:

Let's break each of the components down here, starting from right to left.

Object Space

The original vertex p starts in object space. This is the coordinate system that is inherent to our object, in this case the vertex.

World Space

World space is the space we are in after our transformations have been applied to the object. This allows multiple objects to coexist and share the same world. The matrix that applies these transformations is usually written as M, for model.

By this point we actually already know how to do world space transformations! We've already done this in the previous parts of homework 1. When we were transforming the cube (translating, scaling, orbiting), we were already changing from object space to world space. If you're not done with the transformation portion of the homework yet, look at the cube code below:

    void Cube::spin(float deg)
    {
        this->angle += deg;
        if (this->angle > 360.0f || this->angle < -360.0f) this->angle = 0.0f;
        // This creates the matrix to rotate the cube
        this->toWorld = glm::rotate(glm::mat4(1.0f), this->angle / 180.0f * glm::pi<float>(), glm::vec3(0.0f, 1.0f, 0.0f));
    }

Notice how the cube's spin method, which you've all seen work, simply sets one member variable, this->toWorld, at the end? The toWorld matrix is what we call our model matrix, and it is what takes us from object space to world space (hence the name toWorld).
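To make "takes us from object space to world space" concrete, here is a rough standalone sketch of multiplying a model matrix by a vertex. It deliberately avoids glm so it compiles on its own; Vec4, Mat4, rotateY and transform are illustrative names, and the matrix is stored row-major as m[row][col] (glm indexes column first, so don't copy the indexing blindly).

```cpp
#include <array>
#include <cmath>

using Vec4 = std::array<float, 4>;
using Mat4 = std::array<Vec4, 4>;  // row-major storage: m[row][col]

// Rotation about the Y axis, the same axis Cube::spin rotates around.
Mat4 rotateY(float radians) {
    Mat4 m{};  // value-initialized: all zeros
    const float c = std::cos(radians);
    const float s = std::sin(radians);
    m[0][0] = c;    m[0][2] = s;
    m[1][1] = 1.0f;
    m[2][0] = -s;   m[2][2] = c;
    m[3][3] = 1.0f;
    return m;
}

// Multiply a 4x4 matrix by a homogeneous column vector: result = m * v.
Vec4 transform(const Mat4& m, const Vec4& v) {
    Vec4 out{};
    for (int r = 0; r < 4; ++r)
        for (int c = 0; c < 4; ++c)
            out[r] += m[r][c] * v[c];
    return out;
}
```

With a toWorld of rotateY(pi / 2), the object-space point (1, 0, 0, 1) lands at roughly (0, 0, -1, 1) in world space. In the homework code the equivalent step would be toWorld * p using glm types.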

However, there is a problem that we've purposefully left in with how the spin method modifies this->toWorld. Did you spot it while working on the parts of homework 1 that come before part 4?

Transformation Order

The order in which we apply our transformations to toWorld matters! Before we dive in, know that unlike English text, we read matrix multiplication order from right to left: the rightmost matrix is the one applied to the vertex first.

Take a look at the following two orders.

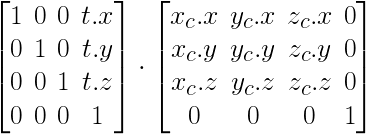

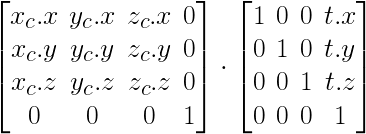

- Translation then Rotation (as written from left to right: T · R)

  - First, note how the rotation matrix is on the right side, so the order in which the transformations are applied is rotation first, then translation second. This is because we read matrix multiplication order from right to left.

  - Note how the resulting matrix looks very much like a simple concatenation of the two: the rotation sits in the upper-left 3x3 block and the translation sits unchanged in the 4th column. Compare this with the next order's resulting matrix.

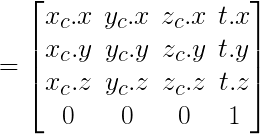

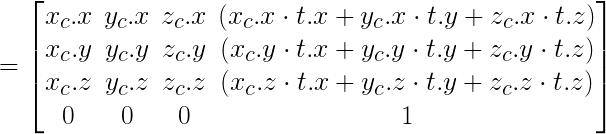

- Rotation then Translation (as written from left to right: R · T)

  - Again, note how the translation matrix is on the right side, so the order in which the transformations are applied is translation first, then rotation second.

  - In the resulting matrix, the translation portion of the matrix (the 4th column) looks much more complicated than in the ordering above. This is because the rotation is applied after the translation, so it rotates the translation offset as well.
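The difference between the two orders can be checked numerically. The sketch below is standalone C++ with hand-rolled helpers (identity, translate, rotateY and multiply are illustrative names, not the homework's API) and row-major m[row][col] storage. It is only meant to show what happens to the 4th column.

```cpp
#include <array>
#include <cmath>

using Vec4 = std::array<float, 4>;
using Mat4 = std::array<Vec4, 4>;  // row-major storage: m[row][col]

Mat4 identity() {
    Mat4 m{};  // all zeros
    for (int i = 0; i < 4; ++i) m[i][i] = 1.0f;
    return m;
}

// Translation matrix: the offset lives in the 4th column.
Mat4 translate(float tx, float ty, float tz) {
    Mat4 m = identity();
    m[0][3] = tx; m[1][3] = ty; m[2][3] = tz;
    return m;
}

// Rotation about the Y axis.
Mat4 rotateY(float radians) {
    Mat4 m = identity();
    const float c = std::cos(radians);
    const float s = std::sin(radians);
    m[0][0] = c;  m[0][2] = s;
    m[2][0] = -s; m[2][2] = c;
    return m;
}

// Matrix product a * b. Applied to a vertex, b acts first, then a.
Mat4 multiply(const Mat4& a, const Mat4& b) {
    Mat4 out{};
    for (int r = 0; r < 4; ++r)
        for (int c = 0; c < 4; ++c)
            for (int k = 0; k < 4; ++k)
                out[r][c] += a[r][k] * b[k][c];
    return out;
}
```

Take T = translate(5, 0, 0) and R = rotateY(pi / 2). In multiply(T, R) the 4th column is still (5, 0, 0): the offset is untouched. In multiply(R, T) the 4th column becomes roughly (0, 0, -5): the offset itself has been rotated.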

So which do you think is the more desirable order of multiplying things?

Camera Space

Camera space describes how the world looks in relation to the camera, or said differently, the world with the camera as the reference point. The camera's matrix is usually written as C, for camera. An important point to note is that we actually require the inverse of C.

Why do we need the inverse? Good question! Think about moving the camera in the positive direction by 20. If we think about that camera as our reference point, to the camera it would seem that the other objects of the world are moving in the negative direction by 20, or in other words, the inverse direction of the camera's translation. Remember that we're applying these transformations to p, our vertex, which is an object of the world. Hence, we need to apply the inverse transformation of the camera in our rasterization equation.
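Here is a small sketch of that argument for the simplest case, a camera that has only been translated (no rotation). I'm assuming the move is along the z axis, and inverseCameraTranslation and transform are hypothetical helper names; storage is row-major m[row][col].

```cpp
#include <array>

using Vec3 = std::array<float, 3>;
using Vec4 = std::array<float, 4>;
using Mat4 = std::array<Vec4, 4>;  // row-major storage: m[row][col]

// For a camera that was only translated to `eye`, the inverse of its
// matrix C is a translation by the negated offset.
Mat4 inverseCameraTranslation(const Vec3& eye) {
    Mat4 m{};  // all zeros
    for (int i = 0; i < 4; ++i) m[i][i] = 1.0f;
    m[0][3] = -eye[0];
    m[1][3] = -eye[1];
    m[2][3] = -eye[2];
    return m;
}

// Multiply a 4x4 matrix by a homogeneous column vector: result = m * v.
Vec4 transform(const Mat4& m, const Vec4& v) {
    Vec4 out{};
    for (int r = 0; r < 4; ++r)
        for (int c = 0; c < 4; ++c)
            out[r] += m[r][c] * v[c];
    return out;
}
```

With the camera moved to (0, 0, 20), the world-space vertex (2.5, 1.5, -1, 1) ends up at (2.5, 1.5, -21, 1) in camera space: it has moved by -20 along z, the opposite of the camera's move.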

So how do we create this matrix? Well, let's first see how we did it in OpenGL.

If you look at Window.cpp's Window::resize_callback you'll find the line:

glTranslatef(0, 0, -20);

This is exactly the example I gave above. glTranslatef moves the objects of the world, so we can imagine that our camera is actually at (0, 0, 20). We call this position e, for eye. The point that the camera is looking at is called d. Usually this will be set to the origin (0, 0, 0). Finally, the camera needs to know which way is up, so that the three basis vectors of camera space can be constructed. In most cases this is a unit vector pointing towards positive Y: (0, 1, 0). With these three vectors, we can use the convenient glm::lookAt function like so:

C_inverse = glm::lookAt(e, d, up);
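If you are curious what a look-at matrix contains, below is a rough standalone sketch of the usual right-handed construction. As far as I know this matches what glm::lookAt computes by default, but treat it as an illustration rather than glm's actual source. lookAtSketch and the small vector helpers are made-up names, and the matrix is stored row-major as m[row][col], unlike glm.

```cpp
#include <array>
#include <cmath>

using Vec3 = std::array<float, 3>;
using Mat4 = std::array<std::array<float, 4>, 4>;  // row-major: m[row][col]

Vec3 sub(const Vec3& a, const Vec3& b) {
    return Vec3{{a[0] - b[0], a[1] - b[1], a[2] - b[2]}};
}
float dot(const Vec3& a, const Vec3& b) {
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2];
}
Vec3 cross(const Vec3& a, const Vec3& b) {
    return Vec3{{a[1] * b[2] - a[2] * b[1],
                 a[2] * b[0] - a[0] * b[2],
                 a[0] * b[1] - a[1] * b[0]}};
}
Vec3 normalize(const Vec3& v) {
    const float len = std::sqrt(dot(v, v));
    return Vec3{{v[0] / len, v[1] / len, v[2] / len}};
}

// Builds C inverse from eye position e, look-at point d and up vector.
// Assumes e != d and that up is not parallel to the viewing direction.
Mat4 lookAtSketch(const Vec3& e, const Vec3& d, const Vec3& up) {
    const Vec3 f = normalize(sub(d, e));     // forward: from eye to target
    const Vec3 s = normalize(cross(f, up));  // camera's right axis
    const Vec3 u = cross(s, f);              // camera's true up axis
    Mat4 m{};                                // all zeros
    for (int i = 0; i < 3; ++i) {
        m[0][i] = s[i];
        m[1][i] = u[i];
        m[2][i] = -f[i];                     // camera looks down -z
    }
    m[0][3] = -dot(s, e);                    // move the eye to the origin
    m[1][3] = -dot(u, e);
    m[2][3] = dot(f, e);
    m[3][3] = 1.0f;
    return m;
}
```

For e = (0, 0, 20), d = (0, 0, 0) and up = (0, 1, 0), the rotation part comes out as the identity and the 4th column is (0, 0, -20), which is the same transformation as glTranslatef(0, 0, -20).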