Discussion2S16


Overview

Week 2 discussion recap (04/04/16)

Slides: download here

The Problem with Rasterization

For homework 1 part 4, we're going to rasterize 3D points manually instead of relying on OpenGL to do it. What is rasterization? It's the process of taking a 3D scene description and converting it into 2D image (pixel) coordinates so that we can draw it on screen.

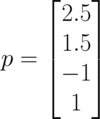

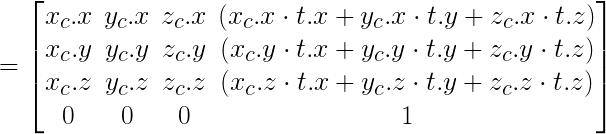

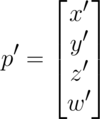

Let's say that we have a vertex p at (2.5, 1.5, -1) in 3D space. In homogeneous coordinates we append a fourth component w = 1, so p is represented as follows:

$$p = \begin{bmatrix} 2.5 \\ 1.5 \\ -1 \\ 1 \end{bmatrix}$$

Now when we try to find out what the pixel coordinates for this point would be, we immediately run into two issues:

- Our screen is a 2D coordinate system, but our point is in 3D!

- Where on the screen would (2.5, 1.5, -1) even be?

In short, we have a mismatch in coordinate systems!

How to Solve the Problem

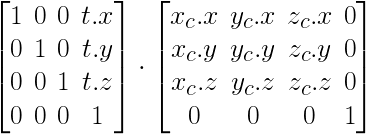

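The full equation for transforming a point from the 3D world (technically what we call object space) to 2D pixel coordinates (image space) is described in homework 1 and reprinted below:

$$p' = D \cdot P \cdot C^{-1} \cdot M \cdot p$$

Here M is the model matrix, C is the camera matrix, P is the projection matrix, and D is the viewport matrix that takes us to image space.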

Let's break each of the components down here, starting from right to left.

Object Space

The original vertex p starts in object space. This is the coordinate system inherent to the object itself, the one in which its vertices (such as p) are defined.

World Space

World space is the space we are in after the object's transformations have been applied. It lets multiple objects coexist and share the same world. The matrix that takes us from object space to world space is usually written M, for model.

By this point we actually already know how to do world space transformations! We did this in the previous parts of homework 1: when we were transforming the cube (translating, scaling, orbiting), we were already changing from object space to world space. If you're not done with the transformation portion of the homework yet, look at the cube code below:

```cpp
void Cube::spin(float deg)
{
    this->angle += deg;
    if (this->angle > 360.0f || this->angle < -360.0f) this->angle = 0.0f;

    // This creates the matrix to rotate the cube
    this->toWorld = glm::rotate(glm::mat4(1.0f), this->angle / 180.0f * glm::pi<float>(), glm::vec3(0.0f, 1.0f, 0.0f));
}
```

Notice how cube's spin, which you've all seen work, simply changes one member variable, this->toWorld, at the end? The toWorld matrix is what we call our model matrix, and it is what takes us from object space to world space (hence the name toWorld).

However, there is a problem with how the spin method modifies this->toWorld here, and we purposefully left it in. Did you spot it while working on the parts before part 4 of homework 1?

Transformation Order

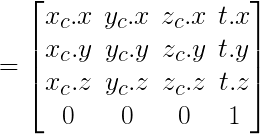

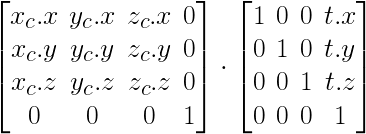

The order in which we apply our transformations to toWorld matters! Before we dive in, note that unlike words, matrix products are read from right to left: the matrix closest to p (the rightmost one) is applied first.

Take a look at the following two orders.

- Translation then Rotation (written T · R)
  - First, note that the rotation matrix is on the right side, so the rotation is applied first and the translation second. This is because we read matrix multiplication order from right to left.
  - The resulting matrix looks very much like a simple concatenation of the rotation and translation matrices: its upper-left 3×3 block is the rotation R and its 4th column is just the translation t. Compare this with the next order's resulting matrix.
- Rotation then Translation (written R · T)
  - Again, the translation matrix is on the right side, so the translation is applied first and the rotation second.
  - In the resulting matrix, the translation portion (the 4th column) looks much more complicated than in the order above: it becomes R t instead of t. This is because the translation is also affected by the rotation that is applied after it.
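To see the difference numerically, here is a minimal sketch that multiplies out both orders for a rotation about the y axis and a translation along x. It avoids glm so that it stays self-contained. Mat4, identity, multiply, translation, rotationY, productTR and productRT are hypothetical helpers written for this example. Mat4 is indexed m[row][col], whereas glm::mat4 is indexed by column first.

```cpp
#include <array>
#include <cassert>
#include <cmath>

// Minimal 4x4 matrix indexed m[row][col] (math notation); glm::mat4 is
// indexed [col][row] instead.
using Mat4 = std::array<std::array<float, 4>, 4>;

Mat4 identity() {
    Mat4 m{};                                   // all zeros
    for (int i = 0; i < 4; ++i) m[i][i] = 1.0f;
    return m;
}

Mat4 multiply(const Mat4 &a, const Mat4 &b) {
    Mat4 r{};
    for (int i = 0; i < 4; ++i)
        for (int j = 0; j < 4; ++j)
            for (int k = 0; k < 4; ++k) r[i][j] += a[i][k] * b[k][j];
    return r;
}

// Translation by (tx, ty, tz): the offset lives in the 4th column.
Mat4 translation(float tx, float ty, float tz) {
    Mat4 m = identity();
    m[0][3] = tx; m[1][3] = ty; m[2][3] = tz;
    return m;
}

// Rotation about the y axis by `radians`.
Mat4 rotationY(float radians) {
    Mat4 m = identity();
    float c = std::cos(radians), s = std::sin(radians);
    m[0][0] = c;  m[0][2] = s;
    m[2][0] = -s; m[2][2] = c;
    return m;
}

// T * R: the rotation (on the right) is applied first, then the translation.
Mat4 productTR(float radians, float tx) {
    return multiply(translation(tx, 0.0f, 0.0f), rotationY(radians));
}

// R * T: the translation (on the right) is applied first, then the rotation,
// so the translation itself gets rotated.
Mat4 productRT(float radians, float tx) {
    return multiply(rotationY(radians), translation(tx, 0.0f, 0.0f));
}
```

Take a 90 degree rotation and tx = 5. productTR keeps the 4th column at (5, 0, 0). productRT moves it to roughly (0, 0, -5), because the rotation swung the translation around the y axis.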

So which do you think is the more desirable order of multiplying things?

Camera Space

Camera space describes how the world looks in relation to the camera; said differently, it is the world with the camera as the reference point. The camera's own transformation (its position and orientation in the world) is usually written C, for camera. An important point to note is that to get into camera space we actually need the inverse of C.

Why do we need the inverse? Good question! Think about moving the camera in the positive direction by 20. If we think about that camera as our reference point, to the camera it would seem that the other objects of the world are moving in the negative direction by 20, or in other words, the inverse direction of the camera's translation. Remember that we're applying these transformations to p, our vertex, which is an object of the world. Hence, we need to apply the inverse transformation of the camera in our rasterization equation.

So how do we create this matrix? Well, let's first see how we did it in OpenGL. If you look at Window::resize_callback in Window.cpp, you'll find:

```cpp
// Move camera back 20 units so that it looks at the origin (or else it's in the origin)
glTranslatef(0, 0, -20);
```

This is exactly the example I gave above. glTranslatef moves the objects of the world, so we can imagine that our camera is actually at (0, 0, 20). We call this position e, for eye. The point the camera is looking at is called d. Note that d is a point, not a direction vector, and here it is the origin (0, 0, 0). Finally, the camera needs to know which way is up, to complete the three basis vectors of camera space. In most cases this is the unit vector pointing along positive Y: (0, 1, 0). Using these three vectors, we can call the convenient glm::lookAt function, which takes the eye position, the point to look at (glm calls it center) and the up vector:

```cpp
glm::mat4 C_inverse = glm::lookAt(e, d, up);
```

Consult the lecture if you want to know what creating this matrix manually looks like. It will be important to know!
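Below is a minimal sketch of building this matrix by hand. It assumes a right-handed camera that looks down its own -z axis, which is the convention glm::lookAt uses by default. Vec3, Mat4, sub, dot, cross, normalize and lookAtInverse are hypothetical names for this example. Mat4 is indexed m[row][col] rather than glm's m[col][row].

```cpp
#include <array>
#include <cassert>
#include <cmath>

using Vec3 = std::array<float, 3>;
using Mat4 = std::array<std::array<float, 4>, 4>;   // m[row][col]

Vec3 sub(const Vec3 &a, const Vec3 &b) { return {a[0] - b[0], a[1] - b[1], a[2] - b[2]}; }
float dot(const Vec3 &a, const Vec3 &b) { return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]; }
Vec3 cross(const Vec3 &a, const Vec3 &b) {
    return {a[1] * b[2] - a[2] * b[1], a[2] * b[0] - a[0] * b[2], a[0] * b[1] - a[1] * b[0]};
}
Vec3 normalize(const Vec3 &v) {
    float len = std::sqrt(dot(v, v));
    return {v[0] / len, v[1] / len, v[2] / len};
}

// Builds C^-1 from eye e, look-at point d and up vector (same idea as glm::lookAt).
Mat4 lookAtInverse(const Vec3 &e, const Vec3 &d, const Vec3 &up) {
    Vec3 z = normalize(sub(e, d));      // camera looks down its own -z axis
    Vec3 x = normalize(cross(up, z));   // camera's right
    Vec3 y = cross(z, x);               // camera's true up
    // C has columns x, y, z, e; its inverse has x, y, z as rows and
    // translation -(x.e, y.e, z.e).
    Mat4 m{};
    for (int i = 0; i < 3; ++i) {
        m[0][i] = x[i];
        m[1][i] = y[i];
        m[2][i] = z[i];
    }
    m[0][3] = -dot(x, e);
    m[1][3] = -dot(y, e);
    m[2][3] = -dot(z, e);
    m[3][3] = 1.0f;
    return m;
}
```

Use e = (0, 0, 20), d = (0, 0, 0) and up = (0, 1, 0). The result is exactly a translation by (0, 0, -20), the same matrix that glTranslatef(0, 0, -20) builds.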

Projection Space

Projection space is what the world looks like after we've applied a projection that warps it into something closer to what our eyes see. There are multiple projections we could use, but we're going to stick with perspective projection for most of the 3D graphics in this class. Perspective projection warps the world so that closer objects look larger and farther objects look smaller.

So how is the perspective transformation constructed? Let's again look at how OpenGL does it. Look at Window.cpp's Window::resize_callback and you'll find the line:

```cpp
// Set the perspective of the projection viewing frustum
gluPerspective(60.0, (double)width / (double)height, 1.0, 1000.0);
```

This sets the vertical FOV (field of view) to 60 degrees, the aspect ratio to width / height, the near plane to 1.0 and the far plane to 1000.0. Much like the inverse camera matrix, glm provides a convenient function to create an equivalent matrix. Note the conversion to radians, which current versions of glm::perspective expect:

```cpp
glm::mat4 P = glm::perspective(glm::radians(60.0f), (float)width / (float)height, 1.0f, 1000.0f);
```

Consult the lecture if you want to know what creating this matrix manually looks like. It will be important to know!
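For reference, here is a minimal sketch of the matrix that gluPerspective documents, written out by hand so you can compare it with the lecture's derivation. It assumes OpenGL's conventions: the camera looks down -z, and depth lands in [-1, 1] after the divide by w. Mat4, Vec4, perspective, apply and ndcDepth are hypothetical names for this example, and Mat4 is indexed m[row][col].

```cpp
#include <array>
#include <cassert>
#include <cmath>

using Mat4 = std::array<std::array<float, 4>, 4>;   // m[row][col]
using Vec4 = std::array<float, 4>;

// Same layout as gluPerspective; fovyDegrees is the vertical field of view.
Mat4 perspective(float fovyDegrees, float aspect, float zNear, float zFar) {
    const float pi = 3.14159265f;
    float f = 1.0f / std::tan(fovyDegrees * pi / 180.0f / 2.0f);   // cot(fovy / 2)
    Mat4 m{};
    m[0][0] = f / aspect;
    m[1][1] = f;
    m[2][2] = (zFar + zNear) / (zNear - zFar);
    m[2][3] = 2.0f * zFar * zNear / (zNear - zFar);
    m[3][2] = -1.0f;   // w' = -z: the distance in front of the camera
    return m;
}

Vec4 apply(const Mat4 &m, const Vec4 &p) {
    Vec4 r{};
    for (int i = 0; i < 4; ++i)
        for (int k = 0; k < 4; ++k) r[i] += m[i][k] * p[k];
    return r;
}

// Depth after the perspective divide for a point on the camera's -z axis.
float ndcDepth(const Mat4 &P, float zCamera) {
    Vec4 clip = apply(P, {0.0f, 0.0f, zCamera, 1.0f});
    return clip[2] / clip[3];
}
```

With the near plane at 1 and the far plane at 1000, a point at z = -1 in camera space ends up at depth -1. A point at z = -1000 ends up at +1.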

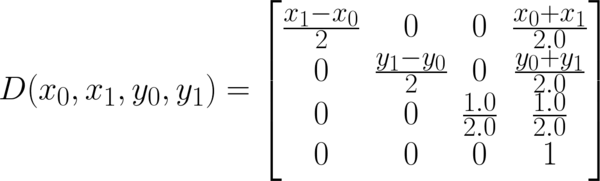

Image Space

Finally, we're at the matrix that takes our projected 3D point and gives us 2D pixel coordinates! Almost there.

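This is the one matrix that glm won't conveniently create for us, so we'll have to create it ourselves. Consider a window that spans x0 to x1 horizontally and y0 to y1 vertically; for us, x0 = y0 = 0, x1 = width and y1 = height. The viewport matrix D for that window is:

$$D = \begin{bmatrix} \frac{x_1 - x_0}{2} & 0 & 0 & \frac{x_0 + x_1}{2} \\ 0 & \frac{y_1 - y_0}{2} & 0 & \frac{y_0 + y_1}{2} \\ 0 & 0 & \frac{1}{2} & \frac{1}{2} \\ 0 & 0 & 0 & 1 \end{bmatrix}$$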

The end result of multiplying all of these matrices with p is the final p' = (x', y', z', w').

However, this is not the end: we still need to divide x' and y' by w' (the perspective divide) to find the actual pixel coordinates. Therefore, our pixel coordinates will be (x'/w', y'/w').
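Putting the pieces together, here is a minimal sketch of the whole pipeline p' = D · P · C^-1 · M · p, followed by the divide by w'. It reuses the camera at (0, 0, 20) and the 60 degree perspective from earlier. It assumes a viewport matrix (called D here) that maps x and y from [-1, 1] to [0, width] and [0, height]; your assignment's conventions may differ. All helpers (Mat4, Vec4, identity, multiply, apply, translation, perspective, viewport, Pixel, toPixel) are hypothetical stand-ins for glm, defined here so that the example is self-contained.

```cpp
#include <array>
#include <cassert>
#include <cmath>

using Mat4 = std::array<std::array<float, 4>, 4>;   // m[row][col], not glm's m[col][row]
using Vec4 = std::array<float, 4>;

Mat4 identity() {
    Mat4 m{};
    for (int i = 0; i < 4; ++i) m[i][i] = 1.0f;
    return m;
}

Mat4 multiply(const Mat4 &a, const Mat4 &b) {
    Mat4 r{};
    for (int i = 0; i < 4; ++i)
        for (int j = 0; j < 4; ++j)
            for (int k = 0; k < 4; ++k) r[i][j] += a[i][k] * b[k][j];
    return r;
}

Vec4 apply(const Mat4 &m, const Vec4 &p) {
    Vec4 r{};
    for (int i = 0; i < 4; ++i)
        for (int k = 0; k < 4; ++k) r[i] += m[i][k] * p[k];
    return r;
}

Mat4 translation(float tx, float ty, float tz) {
    Mat4 m = identity();
    m[0][3] = tx; m[1][3] = ty; m[2][3] = tz;
    return m;
}

// Same layout as gluPerspective; fovy in degrees (fovy * pi / 360 = half the angle in radians).
Mat4 perspective(float fovyDegrees, float aspect, float zNear, float zFar) {
    float f = 1.0f / std::tan(fovyDegrees * 3.14159265f / 360.0f);
    Mat4 m{};
    m[0][0] = f / aspect;
    m[1][1] = f;
    m[2][2] = (zFar + zNear) / (zNear - zFar);
    m[2][3] = 2.0f * zFar * zNear / (zNear - zFar);
    m[3][2] = -1.0f;
    return m;
}

// Viewport matrix D: maps x, y from [-1, 1] to [0, width] and [0, height].
Mat4 viewport(float width, float height) {
    Mat4 d{};
    d[0][0] = width / 2.0f;  d[0][3] = width / 2.0f;
    d[1][1] = height / 2.0f; d[1][3] = height / 2.0f;
    d[2][2] = 0.5f;          d[2][3] = 0.5f;
    d[3][3] = 1.0f;
    return d;
}

struct Pixel { float x, y; };

// p' = D * P * Cinv * M * p, then the perspective divide by w'.
Pixel toPixel(const Mat4 &D, const Mat4 &P, const Mat4 &Cinv, const Mat4 &M, const Vec4 &p) {
    Vec4 q = apply(multiply(D, multiply(P, multiply(Cinv, M))), p);
    return {q[0] / q[3], q[1] / q[3]};
}
```

Because the camera looks straight at the origin, the origin lands in the middle of a 640 × 480 window, at (320, 240). The example vertex (2.5, 1.5, -1) lands up and to the right of it.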

Some Coding Tips

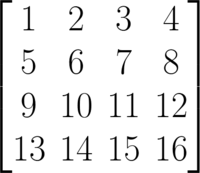

What is Column vs. Row Major?

OpenGL and glm both expect matrices to be in column major form. What does this mean?

When we write out a 4 by 4 matrix on paper, we typically read it row by row, which corresponds to row major form:

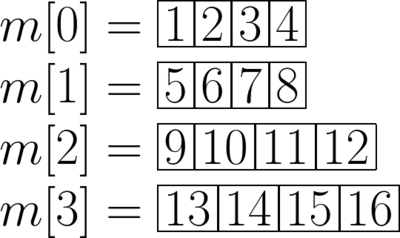

If this matrix were stored in m[4][4] in row major form, the contiguous memory layout would look like this:

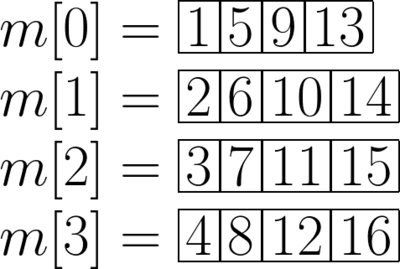

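Now, for legacy reasons, OpenGL (and consequently glm) lays its memory out in column major order: each column is stored contiguously, one after another. Writing m_rc for the element in row r and column c, the memory layout in column major looks like this:

$$\underbrace{m_{00}\,m_{10}\,m_{20}\,m_{30}}_{\text{column 0}}\;\underbrace{m_{01}\,m_{11}\,m_{21}\,m_{31}}_{\text{column 1}}\;\underbrace{m_{02}\,m_{12}\,m_{22}\,m_{32}}_{\text{column 2}}\;\underbrace{m_{03}\,m_{13}\,m_{23}\,m_{33}}_{\text{column 3}}$$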

If you're manually creating matrices for use in OpenGL and glm, beware and make sure you're setting them up in column major order! One nice thing about column major is that in glm, m[3] gives us the last column, which is exactly the translation component of a transformation matrix. This lets us extract the position of an object very quickly.
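Here is a minimal sketch of what column major storage means for a flat array of 16 floats, which is the form OpenGL receives. For a glm::mat4, glm::value_ptr from <glm/gtc/type_ptr.hpp> gives you a pointer of this kind. columnMajorIndex, rowMajorIndex and makeTranslationColumnMajor are hypothetical helpers for this example.

```cpp
#include <cassert>

// Index of the element in row `row`, column `col` of a 4x4 matrix stored as a
// flat array of 16 floats.
int rowMajorIndex(int row, int col) { return row * 4 + col; }     // rows one after another
int columnMajorIndex(int row, int col) { return col * 4 + row; }  // columns one after another (OpenGL, glm)

// Fills a flat column major array with a translation by (tx, ty, tz).
void makeTranslationColumnMajor(float out[16], float tx, float ty, float tz) {
    for (int i = 0; i < 16; ++i) out[i] = 0.0f;
    for (int i = 0; i < 4; ++i) out[columnMajorIndex(i, i)] = 1.0f;  // identity diagonal
    out[columnMajorIndex(0, 3)] = tx;   // the 4th column holds the translation
    out[columnMajorIndex(1, 3)] = ty;
    out[columnMajorIndex(2, 3)] = tz;
}
```

The translation ends up in elements 12, 13 and 14. Together with element 15, these form the last column, which is the same data glm's m[3] gives you.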

Make Utility Functions

The way we'll draw a point on screen is by writing into the pixels array. Manually indexing into this pixels array is tedious and error-prone, so we recommend that you write a utility function that makes it easier.

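```cpp
// Set the pixel at column x, row y of our pixel buffer to the color (r, g, b).
// Assumes the global float array pixels holds width * height RGB triples.
void drawPoint(int x, int y, float r, float g, float b) {
    // Skip points outside the window so we never write past the end of pixels
    if (x < 0 || x >= width || y < 0 || y >= height) return;

    int offset = y*width*3 + x*3;  // 3 floats per pixel, rows stored one after another
    pixels[offset] = r;
    pixels[offset+1] = g;
    pixels[offset+2] = b;
}
```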

Can you find other common operations that are worth wrapping in utility functions like this?
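One natural candidate is clearing the whole buffer to a single color before drawing each frame. Here is a minimal sketch. The globals width, height and pixels below are hypothetical stand-ins (a tiny 4 × 3 buffer) for whatever your Rasterizer.cpp actually defines, and clearBuffer is a name made up for this example.

```cpp
#include <cassert>
#include <vector>

// Hypothetical stand-ins for the assignment's globals: a window size and an
// RGB float buffer laid out like the pixels array above.
const int width = 4, height = 3;
std::vector<float> pixels(width * height * 3);

// Fill the whole buffer with one color, e.g. at the start of every frame.
void clearBuffer(float r, float g, float b) {
    for (int i = 0; i < width * height; ++i) {
        pixels[i * 3]     = r;
        pixels[i * 3 + 1] = g;
        pixels[i * 3 + 2] = b;
    }
}
```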

How Are We Drawing the Pixels After Rasterization?

Good of you to be curious! As long as you fill in the pixels buffer correctly, we take care of the rest. But if you want to know, here's how we send the pixels array off to OpenGL in the displayCallback function of Rasterizer.cpp:

```cpp
// glDrawPixels writes a block of pixels to the framebuffer
glDrawPixels(window_width, window_height, GL_RGB, GL_FLOAT, pixels);
```

The glDrawPixels function takes in the pixels array and asks the GPU to draw it all in one big plop. For those of you who are curious, here's what each of the parameters does:

- window_width, window_height: the width and height of the block of pixels. OpenGL needs these to know how much data to read, so that it doesn't access data outside the bounds of the pixels array.
- GL_RGB: this specifies that the pixels array is in the format rgbrgbrgb... in contiguous memory. If we wanted to use transparency, a mode such as GL_RGBA might be used.
- GL_FLOAT: this specifies that each component in the pixels array is a float, as opposed to, say, an unsigned byte or an int.
- pixels: the pixels array itself!

To New or Not To New

| `Foo bar = Foo();` | `Foo *bar = new Foo();` |
|---|---|

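If you use the left-hand form, be careful with temporaries. For Foo bar = Foo(); itself, compilers normally construct bar directly (C++17 guarantees it). The closely related bar = Foo(); behaves differently: assigning a new Foo to an existing variable builds a temporary Foo, copies it into bar, and then calls the ~Foo destructor on that temporary. If ~Foo frees dynamically allocated memory and Foo doesn't define its own copy operations, bar is left pointing at memory that no longer exists.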
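Here is a minimal sketch that makes the destructor call visible. Foo is a hypothetical class that counts its own destructor calls, and destructorsAfterAssignment and destructorsWithNew are made-up helper names. Foo stores its data in a std::vector rather than in a raw pointer that ~Foo deletes. As a result, the copy made during assignment is a deep copy, and bar stays valid after the temporary dies. Preferring such self-managing containers is one simple way to avoid the problem described above.

```cpp
#include <cassert>
#include <vector>

// Hypothetical class: it owns its data through a std::vector (so copies are
// deep) and counts how many times its destructor has run.
struct Foo {
    static int destroyed;            // number of ~Foo calls so far
    std::vector<float> vertices;
    Foo() : vertices(3, 0.0f) {}
    ~Foo() { ++destroyed; }
};
int Foo::destroyed = 0;

// Assigning a temporary to an existing object.
int destructorsAfterAssignment() {
    Foo::destroyed = 0;
    Foo bar;
    bar = Foo();                     // temporary is built, copied into bar, then destroyed
    int count = Foo::destroyed;      // only the temporary has been destroyed so far
    return count;                    // bar itself is destroyed when the function returns
}

// Allocating with new: nothing is destroyed until we delete it ourselves.
int destructorsWithNew() {
    Foo::destroyed = 0;
    Foo *bar = new Foo();
    int count = Foo::destroyed;      // no destructor has run yet
    delete bar;                      // ~Foo runs here
    return count;
}
```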

Do Not Load Your OBJs every frame

If you load your OBJs every frame, your framerate will suffer when it doesn't need to! Build each OBJObject only once instead of rebuilding it every frame, which is wasteful.
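Here is a minimal sketch of the load-once pattern. It assumes the expensive work (reading and parsing the file) happens in the OBJObject constructor. OBJObject here is a hypothetical stand-in that only counts how often it is constructed, and Scene and displayCallback are made-up names. The models are members built once when the scene is created, and the per-frame function only draws them.

```cpp
#include <cassert>
#include <string>
#include <utility>

// Hypothetical stand-in for the assignment's OBJObject: constructing it is the
// expensive part, so we count how many times that happens.
struct OBJObject {
    static int loads;
    std::string path;
    explicit OBJObject(std::string p) : path(std::move(p)) { ++loads; /* read and parse the file here */ }
    void draw() const { /* draw using the already-parsed data */ }
};
int OBJObject::loads = 0;

struct Scene {
    // Built once, when the Scene is created...
    OBJObject bunny{"bunny.obj"};
    OBJObject dragon{"dragon.obj"};
    // ...and only drawn every frame.
    void displayCallback() const { bunny.draw(); dragon.draw(); }
};
```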

Also think about what other optimizations you could perform for your program.

Avoiding Hairy Ifs

Instead of having multiple if statements everywhere to differentiate between the cube, bunny, bear and dragon, think about having a pure virtual (abstract) Drawable class and a pointer to it (or even an OBJObject * if you're a little lazy) that only needs to be swapped in one function.
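Here is a minimal sketch of that idea, with hypothetical names throughout (Drawable, Cube, Model, selectObject, displayCallback). To keep it checkable without OpenGL, draw() returns the object's name instead of drawing anything.

```cpp
#include <cassert>
#include <string>
#include <utility>

// Hypothetical interface for anything the program can draw.
class Drawable {
public:
    virtual ~Drawable() = default;
    virtual std::string draw() = 0;   // pure virtual: every subclass must implement it
};

// Hypothetical concrete types; real ones would rasterize or issue OpenGL calls.
class Cube : public Drawable {
public:
    std::string draw() override { return "cube"; }
};

class Model : public Drawable {
    std::string name;
public:
    explicit Model(std::string n) : name(std::move(n)) {}
    std::string draw() override { return name; }
};

Cube cube;
Model bunny("bunny"), bear("bear"), dragon("dragon");
Drawable *current = &cube;   // the only thing the rest of the program looks at

// The single place that decides what is shown (e.g. called from a key handler).
void selectObject(int key) {
    switch (key) {
        case 1: current = &cube;   break;
        case 2: current = &bunny;  break;
        case 3: current = &bear;   break;
        case 4: current = &dragon; break;
        default: break;            // unknown key: keep the current object
    }
}

// Display code needs no if-chains: it just calls through the pointer.
std::string displayCallback() { return current->draw(); }
```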