Difference between revisions of "Projects"

From Immersive Visualization Lab Wiki

(→Blood Flow (Yuri Bazilevs, Jurgen Schulze, 2009))

Line 2 → Line 2:

− ===[[TelePresence]] (Seth Rotkin, 2010)===
+ ===[[TelePresence]] (Seth Rotkin, Mabel Zhang, 2010-)===

<table>

<tr>

Line 11 → Line 11:

<hr>

− ===PhotosynthVR (Sasha Koruga, 2009)===
+ ===PhotosynthVR (Sasha Koruga, Haili Wang, Phi Nguyen, Velu Ganapathy; 2009-)===

<table>

<tr>

Line 20 → Line 20:

<hr>

− ===Blood Flow (Yuri Bazilevs, Jurgen Schulze, Alison Marsden, Ming-Chen Hsu, Kenneth Benner, Sasha Koruga 2009)===
+ ===Blood Flow (Yuri Bazilevs, Jurgen Schulze, Alison Marsden, Ming-Chen Hsu, Kenneth Benner, Sasha Koruga; 2009-)===

<table>

<tr>

<td>[[Image:VelocityVectorField-small.png]]</td>

<td>In this project, we are working on visualizing the blood flow in an artery, as simulated by Professor Bazilevs at UCSD. Read the [[Blood Flow Manual]] for usage instructions.<br>Videos and pictures of the 2D visualizations can be found [http://acsweb.ucsd.edu/~skoruga/bloodflow here], and the corresponding iPhone versions of the videos can be downloaded [[Media:iphone_videos.zip|here]].</td>


</tr>

</table>

Line 47 → Line 38:

<hr>

− ===PanoView360 (Andrew Prudhomme, 2008)===
+ ===PanoView360 (Andrew Prudhomme, 2008-)===

<table>

<tr>

Line 56 → Line 47:

<hr>

− ===SUN Blackbox (Mabel Zhang, Andrew Prudhomme, Seth Rotkin, Philip Weber, 2008)===
+ ===SUN Blackbox (Mabel Zhang, Andrew Prudhomme, Seth Rotkin, Philip Weber, 2008-)===

<table>

<tr>

Line 65 → Line 56:

<hr>

− ===
+ ===Khirbat en-Nahas (Kyle Knabb, Jurgen Schulze, 2008)===

<table>

<tr>

− <td>[[Image:
+ <td>[[Image:khirbit.jpg]]</td>

− <td>
+ <td>For the past ten years, a joint University of California, San Diego and Department of Antiquities of Jordan research team led by Professor Tom Levy and Dr. Mohammad Najjar has been investigating the role of mining and metallurgy in social evolution from the Neolithic period (ca. 7500 BC) to medieval Islamic times (ca. 12th century AD). Kyle Knabb has been working with the IVL as a master's student under Professor Thomas Levy of the archaeology department. He created a 3D visualization for the StarCAVE which displays several excavation sites in Jordan, along with the artifacts found there and the radiocarbon dating sites. In a [[Digital Archaeology|related project]] we acquired stereo photography from the excavation site in Jordan.</td>

</tr>

</table>

<hr>

− ===
+ ===Spatialized Sound (Toshiro Yamada, Suketu Kamdar, 2008)===

<table>

<tr>

<td>[[Image:image-missing.jpg]]</td>

− <td>
+ <td>Peter Otto's group created a 3D sound system which we can control from the StarCAVE by positioning a sound source in a 3D environment. In our demonstration, the sound source is represented as a cone and can be placed in the pre-function area on the 1st floor of the virtual Calit2 building.</td>

</tr>

</table>

<hr>

− ===
+ ===Video in Virtual Environments (Han Kim, 2008-)===

<table>

<tr>

− <td>[[Image:
+ <td>[[Image:mipmap-video.jpg]]</td>

− <td>
+ <td>Computer Science graduate student Han Kim has developed an efficient "mip-map" algorithm that "shrinks" high-resolution video content so that it can be played interactively in virtual environments (VEs). He has also developed several optimizations for sustaining a stable video playback frame rate when the video is projected onto non-rectangular VE screens.</td>

</tr>

</table>

<hr>

− ===
+ ===[[Mipmapped_Volume_Rendering|Multi-Volume Rendering]] (Han Kim, 2009)===

<table>

<tr>

− <td>[[Image:
+ <td>[[Image:Volume_rendering.jpg]]</td>

− <td>
+ <td>The goal of multi-volume rendering is to visualize multiple volume data sets. Each volume has three or more channels.</td>

</tr>

</table>

<hr>

− ===
+ ===[[CineGrid]] (Leo Liu, 2007)===

<table>

<tr>

− <td>[[Image:
+ <td>[[Image:fountain-code.jpg]]</td>

− <td>Computer
+ <td>Computer science graduate student Leo Liu has been working on new algorithms for long-distance 4K video streaming, as well as 4K movie data management. He has been working in close collaboration with the iRODS group at the San Diego Supercomputer Center (SDSC) and has helped create iRODS servers for CineGrid content in San Diego, in Amsterdam, and at Keio University in Japan.</td>

</tr>

</table>

<hr>

| − | === | + | ===Neuroscience and Architecture (Daniel Rohrlick, Michael Bajorek, Mabel Zhang, 2007)=== |

<table> | <table> | ||

<tr> | <tr> | ||

| − | <td>[[Image: | + | <td>[[Image:neuroscience.jpg]]</td> |

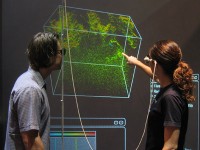

| − | <td> | + | <td>This projects started off as a Calit2 seed funded project to do a pilot study with the Swartz Center for Neuroscience in which a human subject has to find their way to specific locations in the Calit2 building while their brain waves are being scanned by a high resolution EEG. Michael's responsibility was the interface between the StarCAVE and the EEG system, to transfer tracker data and other application parameters to allow for the correlation of EEG data with VR parameters. Daniel created the 3D model of the New Media Arts wing of the building using 3ds Max. Mabel refined the Calit2 building geometry. Recently, HMC was generous enough to fund our future efforts in this project.</td> |

</tr> | </tr> | ||

</table> | </table> | ||

<hr> | <hr> | ||

− ===
+ ===[[OssimPlanet]] (Philip Weber, Jurgen Schulze, 2007)===

<table>

<tr>

− <td>[[Image:
+ <td>[[Image:ossimplanet.jpg]]</td>

− <td>
+ <td>In this project we ported the open source OssimPlanet library to COVISE, so that it can run in our VR environments, including the Varrier tiled display wall and the StarCAVE.</td>

</tr>

</table>

<hr>

− ===[[
+ ===[[Protein Visualization]] (Philip Weber, Andrew Prudhomme, Krishna Subramanian, Sendhil Panchadsaram, 2005)===

<table>

<tr>

− <td>[[Image:
+ <td>[[Image:topsan.jpg]]</td>

− <td>
+ <td>A VR application to view protein structures from UCSD Professor [http://www.sdsc.edu/~bourne/ Philip Bourne's] [http://www.rcsb.org/pdb/ Protein Data Bank (PDB)]. The popular molecular biology toolkit PyMol is used to create the 3D models of the PDB files. Our application also supports protein alignment, an amino acid sequence viewer, integration of TOPSAN annotations, and a variety of visualization modes. Among the users of this application are UC Riverside (Peter Atkinson), UCSD Pharmacy (Zoran Radic), and the Scripps Research Institute (James Fee/Jon Huntoon).</td>

</tr>

</table>

<hr>

− ===
+ ===VOX and Virvo (Jurgen Schulze, 1999)===

<table>

<tr>

− <td>[[Image:
+ <td>[[Image:deskvox.jpg]]</td>

− <td>
+ <td>Ongoing development of real-time volume rendering algorithms for interactive display at the desktop (DeskVOX) and in virtual environments (CaveVOX). Virvo is the name of the GUI-independent, OpenGL-based volume rendering library that both DeskVOX and CaveVOX use.</td>

</tr>

</table>

<hr>

− ===
+ <p>
+
+ =<b>Inactive Projects</b>=
+
+ ===How Much Information (Andrew Prudhomme, 2008-2009)===

<table>

<tr>

− <td>[[Image:
+ <td>[[Image:hmi.jpg]]</td>

− <td>
+ <td>In this project we visualize data from various collaborating companies, which provide us with data stored on hard disks or transferred over networks. In the first stage, Andrew created an application which can display the directory structures of 70,000 hard disk drives of Microsoft employees, sampled over the course of five years. The visualization uses an interactive hyperbolic 3D graph to visualize the directory trees and to compare different users' trees, and it uses various novel data display methods, such as wheel graphs, to display file sizes. More information about this project can be found at [http://hmi.ucsd.edu/].</td>

</tr>

</table>

<hr>

− ===[[
+ ===[[Hotspot Mitigation]] (Jordan Rhee, 2008-2009)===

<table>

<tr>

− <td>[[Image:
+ <td>[[Image:hotspot.png]]</td>

− <td>
+ <td>ECE undergraduate student Jordan Rhee has been working on algorithms for hotspot mitigation in projection-based virtual reality environments such as the StarCAVE. His COVISE plugin is based on a GLSL shader that performs the mitigation in real time, minimizing the performance impact on the application.</td>

</tr>

</table>

<hr>

− ===
+ ===ATLAS in Parallel (Ruth West, Daniel Tenedorio, Todd Margolis, 2008-2009)===

<table>

<tr>

− <td>[[Image:
+ <td>[[Image:image-missing.jpg]]</td>

− <td>
+ <td>UCSD graduate Daniel Tenedorio is working on parallelizing the simulation algorithm of the Atlas in Silico art piece, supported by an NSF-funded SGER grant. Daniel's goal is to support the entire CAMERA data set, which consists of 17 million data points (open reading frames). Daniel plans to use the CUDA architecture of NVIDIA's graphics cards to achieve a considerable performance increase.</td>

</tr>

</table>

<hr>

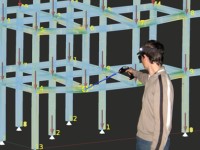

| − | === | + | ===6DOF Tracking with Wii Remotes (Sage Browning, Philip Weber, 2008)=== |

<table> | <table> | ||

<tr> | <tr> | ||

| − | <td>[[Image: | + | <td>[[Image:image-missing.jpg]]</td> |

| − | <td> | + | <td>Past research at Calit2 has developed an affordable means of creating large high-resolution computer displays, the OptiPortals. Contemporary input devices for tiled display walls, which go beyond the capabilities a desktop mouse can offer, are either very expensive, or less than satisfactory. Some controllers can cost $40,000, which defeats the benefits of the relatively inexpensive OptiPortal. Alternately, currently available inexpensive methods leave much to be desired: the same image output on the tiled display wall is also shown on a standard sized control monitor using a standard mouse as input, leaving the user without a means of direct interface with the OptiPortal. In order to create an improved interface for the OptiPortal, research must be done in the area of 3D location tracking that is accurate to within a few millimeters, yet maintains a reasonable cost of production and an intuitive design. The focus of this project is to use multiple Wii Remotes for six degree of freedom tracking. We are going to integrate this new input device scheme into the COVISE software, so that existing software applications can directly benefit from this research.</td> |

</tr> | </tr> | ||

</table> | </table> | ||

<hr> | <hr> | ||

− ===[[
+ ===[[LOOKING|LOOKING/ORION]] (Philip Weber, 2007-2009)===

<table>

<tr>

− <td>[[Image:
+ <td>[[Image:orion.jpg]]</td>

− <td>
+ <td>Under the direction of Matt Arrott, Philip created an interactive 3D exploration tool for simulated ocean currents in Monterey Bay. The software uses OssimPlanet to display terrain and bathymetry of the area to give context to streamlines and iso-surfaces of the vector field representing the ocean currents.</td>

</tr>

</table>

<hr>

− ===
+ ===[[Virtual Calit2 Building]] (Daniel Rohrlick, Mabel Zhang, 2006-2009)===

<table>

<tr>

− <td>[[Image:
+ <td>[[Image:calit2-building.jpg]]</td>

− <td>
+ <td>The goal of the Virtual Calit2 Building is to provide an accurate and accessible virtual replica of the Calit2 building that any researcher or scientist can use to conduct projects or tests that require a detailed architectural layout.</td>

</tr>

</table>

<hr>

− <
+ ===[[Interaction with Multi-Spectral Images]] (Philip Weber, Praveen Subramani, Andrew Prudhomme, 2006-2009)===

−
+ <table>

−
+ <tr>

+ <td>[[Image:adoration.jpg]]</td>

+ <td>In this project, a versatile high-resolution image viewer was created which allows multi-gigabyte image files to be loaded and displayed in the StarCAVE and on tiled display walls. Images can consist of multiple layers, like Maurizio Seracini's multi-spectral painting scans. The "Walking into a DaVinci Masterpiece" demonstration in the auditorium uses this COVISE module. Other images we can display with this technique are the Golden Gate Bridge, a USGS map of La Jolla, NCMIR's mouse cerebellum, the Brain Observatory's monkey brain, and cancer cells from Andy Kummel's lab.</td>

+ </tr>

+ </table>

+ <hr>

===Finite Elements Simulation (Fabian Gerold, 2008-2009)===

Revision as of 00:09, 17 November 2010

Active Projects

TelePresence (Seth Rotkin, Mabel Zhang, 2010-)

PhotosynthVR (Sasha Koruga, Haili Wang, Phi Nguyen, Velu Ganapathy; 2009-)

|

UCSD Sasha Koruga has created a Photosynth-like system with which he can display a number of photographs in the StarCAVE. The images appear and disappear as the user moves around the photographed object. Read the PhotosynthVR Manual for usage instructions. |

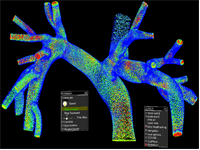

Blood Flow (Yuri Bazilevs, Jurgen Schulze, Alison Marsden, Ming-Chen Hsu, Kenneth Benner, Sasha Koruga; 2009-)

In this project, we are working on visualizing the blood flow in an artery, as simulated by Professor Bazilevs at UCSD. Read the Blood Flow Manual for usage instructions. Videos and pictures of the 2D visualizations can be found here, and the corresponding iPhone versions of the videos can be downloaded here.

Animated Point Clouds (Daniel Tenedorio, Rachel Chu, Sasha Koruga, 2008)

PanoView360 (Andrew Prudhomme, 2008-)

In collaboration with Professor Dan Sandin from EVL, Andrew created a COVISE plugin to display photographer Richard Ainsworth's panoramic stereo images in the StarCAVE and the Varrier.

SUN Blackbox (Mabel Zhang, Andrew Prudhomme, Seth Rotkin, Philip Weber, 2008-)

Mabel Zhang has been working on 3D modeling tasks at IVL. Her first task was to model the Calit2 building, which she completed as a Calit2 summer intern. We then hired her as a student worker to create a 3D model of the SUN Mobile Data Center, which is a core component of the instrument procured by the GreenLight project. Andrew added an online connection to the Blackbox at UCSD to display the output of the power modules. Their project was demonstrated at the Supercomputing Conference 2008 in Austin, Texas.

Khirbat en-Nahas (Kyle Knabb, Jurgen Schulze, 2008)

For the past ten years, a joint University of California, San Diego and Department of Antiquities of Jordan research team led by Professor Tom Levy and Dr. Mohammad Najjar has been investigating the role of mining and metallurgy in social evolution from the Neolithic period (ca. 7500 BC) to medieval Islamic times (ca. 12th century AD). Kyle Knabb has been working with the IVL as a master's student under Professor Thomas Levy of the archaeology department. He created a 3D visualization for the StarCAVE which displays several excavation sites in Jordan, along with the artifacts found there and the radiocarbon dating sites. In a related project we acquired stereo photography from the excavation site in Jordan.

Spatialized Sound (Toshiro Yamada, Suketu Kamdar, 2008)

Video in Virtual Environments (Han Kim, 2008-)

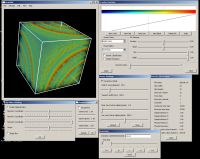

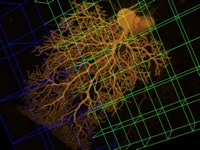

Multi-Volume Rendering (Han Kim, 2009)

The goal of multi-volume rendering is to visualize multiple volume data sets. Each volume has three or more channels.

CineGrid (Leo Liu, 2007)

Neuroscience and Architecture (Daniel Rohrlick, Michael Bajorek, Mabel Zhang, 2007)

OssimPlanet (Philip Weber, Jurgen Schulze, 2007)

In this project we ported the open source OssimPlanet library to COVISE, so that it can run in our VR environments, including the Varrier tiled display wall and the StarCAVE.

Protein Visualization (Philip Weber, Andrew Prudhomme, Krishna Subramanian, Sendhil Panchadsaram, 2005)

A VR application to view protein structures from UCSD Professor Philip Bourne's Protein Data Bank (PDB). The popular molecular biology toolkit PyMol is used to create the 3D models of the PDB files. Our application also supports protein alignment, an amino acid sequence viewer, integration of TOPSAN annotations, and a variety of visualization modes. Among the users of this application are UC Riverside (Peter Atkinson), UCSD Pharmacy (Zoran Radic), and the Scripps Research Institute (James Fee/Jon Huntoon).

VOX and Virvo (Jurgen Schulze, 1999)

Inactive Projects

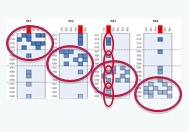

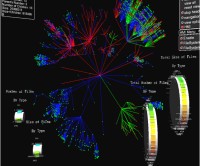

How Much Information (Andrew Prudhomme, 2008-2009)

In this project we visualize data from various collaborating companies, which provide us with data stored on hard disks or transferred over networks. In the first stage, Andrew created an application which can display the directory structures of 70,000 hard disk drives of Microsoft employees, sampled over the course of five years. The visualization uses an interactive hyperbolic 3D graph to visualize the directory trees and to compare different users' trees, and it uses various novel data display methods, such as wheel graphs, to display file sizes. More information about this project can be found at http://hmi.ucsd.edu/.

Hotspot Mitigation (Jordan Rhee, 2008-2009)

ATLAS in Parallel (Ruth West, Daniel Tenedorio, Todd Margolis, 2008-2009)

6DOF Tracking with Wii Remotes (Sage Browning, Philip Weber, 2008)

LOOKING/ORION (Philip Weber, 2007-2009)

Virtual Calit2 Building (Daniel Rohrlick, Mabel Zhang, 2006-2009)

Interaction with Multi-Spectral Images (Philip Weber, Praveen Subramani, Andrew Prudhomme, 2006-2009)

Finite Elements Simulation (Fabian Gerold, 2008-2009)

Palazzo Vecchio (Philip Weber, 2008)

Virtual Architectural Walkthroughs (Edward Kezeli, 2008)

NASA (Andrew Prudhomme, 2008)

Digital Lightbox (Philip Weber, 2007-2008)

Research Intelligence Portal (Alex Zavodny, Andrew Prudhomme, 2007-2008)

New San Francisco Bay Bridge (Andre Barbosa, 2007-2008)

Birch Aquarium (Daniel Rohrlick, 2007-2008)

CAMERA Meta-Data Visualization (Sara Richardson, Andrew Prudhomme, 2007-2008)

Depth of Field (Karen Lin, 2007)

HD Camera Array (Alex Zavodny, Andrew Prudhomme, 2007)

Atlas in Silico for Varrier (Ruth West, Iman Mostafavi, Todd Margolis, 2007)

Screen (Noah Wardrip-Fruin, 2007)

Under the guidance of Noah Wardrip-Fruin and Jurgen Schulze, Ava Pierce, David Coughlan, Jeffrey Kuramoto, and Stephen Boyd are adapting the multimedia art installation Screen from the four-wall CAVE system at Brown University to the StarCAVE. This piece was displayed at SIGGRAPH 2007 and was the first virtual reality application to be demonstrated in the StarCAVE. It was also displayed at the Beall Center at UC Irvine in the fall of 2007; for this purpose, it was ported to a single stereo wall display.

Children's Hospital (Jurgen Schulze, 2007)

From our collaboration with Dr. Peter Newton of San Diego's Children's Hospital, we have a few computed tomography (CT) data sets of children's upper bodies, showing irregularities of their spines.

Super Browser (Vinh Huynh, Andrew Prudhomme, 2006)

Cell Structures (Iman Mostafavi, 2006)

Terashake Volume Visualization (Jurgen Schulze, 2006)

As part of the NSF-funded OptIPuter project, Jurgen visualized part of the 4.5-terabyte TeraShake earthquake data set on the 100-megapixel LambdaVision display at Calit2. For this project, he integrated his volume visualization tool VOX into EVL's SAGE.

Earthquake Visualization (Jurgen Schulze, 2005)

Along with Debi Kilb of the Scripps Institution of Oceanography (SIO), we visualized 3D earthquake locations on a worldwide scale.