Project4S21


CAVE Simulator

In this project you will create a VR application that simulates a virtual reality CAVE.

This project is an individual project (no team work allowed).

In the discussions we're going to go over how to do off-center projection and off-screen rendering with Unity in greater detail.

Resources:

- Off-screen rendering with Unity is explained here.

- Here is a Unity script for off-screen rendering.

- This link provides a great explanation (including source code) of how to create an off-center projection matrix as you will need it in this project. This is the method we use in discussion.

- The original SIGGRAPH paper on the CAVE from 1993 provides an in-depth explanation of CAVE technology, including off-axis projection.

- Here are a few videos that illustrate what a CAVE experience looks like: [1], [2], [3]

Starter Code

We recommend that you start with your code for project 3.

Project Description (100 Points)

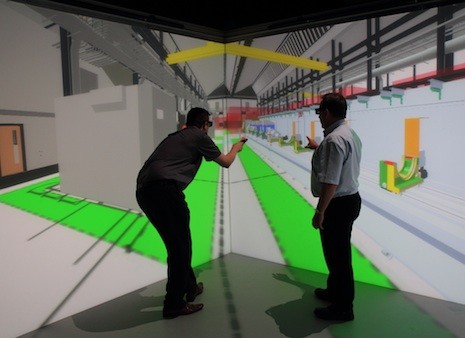

This picture shows what a 3-sided virtual reality CAVE can look like:

Looking closely at the image, you will find that the yellow cross bar at the top does not look straight in the image, although it does to the primary user. In the image it is bent right where the edge between the two vertical screens is. This is typical behavior of VR CAVE systems, as they normally render the images for one head-tracked viewer. All other viewers see a usable image, but it can be distorted.

In this project you are tasked with creating a simulator for a 3-sided VR CAVE. This means that you need to create a virtual CAVE, which you can view with the Oculus Rift. In this CAVE you will need to display a sky box and the cube from project 3. You will need to be able to switch between the view from the user's head and a view from one of the VR controllers.

The virtual CAVE should consist of three display screens. Each screen should be square with a width of 2.4 meters (roughly 8 feet). One of the screens should be on the floor, the other two, left and right, should be vertical and seamlessly attached to the screen on the floor and to each other at right angles, just like in the picture. This application should be used standing, with the viewpoint set to your height so that it appears as if you're standing on the floor screen. The initial user position should be with their feet in the center of the floor screen, facing the edge between the two vertical screens.
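As a rough illustration of the layout described above, the three screens can be written down as corner triples. This is only a sketch under assumed conventions that the assignment does not prescribe: a right-handed, y-up CAVE frame in meters with its origin at the center of the floor screen, and the hypothetical names `SCREENS`, `USER_FEET` and `USER_FORWARD`. Unity itself uses a left-handed frame, so signs may need to be adapted.

```python
import math

SIDE = 2.4        # screen edge length in meters (from the project description)
H = SIDE / 2.0    # half edge length

# Assumed convention: each screen is stored as (lower-left, lower-right,
# upper-left) corners as seen by a viewer standing inside the CAVE.
SCREENS = {
    "left":  ((-H, 0.0,  H), (-H, 0.0, -H), (-H, SIDE,  H)),   # plane x = -H
    "right": ((-H, 0.0, -H), ( H, 0.0, -H), (-H, SIDE, -H)),   # plane z = -H
    "floor": ((-H, 0.0,  H), ( H, 0.0,  H), (-H, 0.0, -H)),    # plane y = 0
}

# Initial pose: feet at the floor center, facing the vertical edge x = z = -H.
USER_FEET = (0.0, 0.0, 0.0)
USER_FORWARD = (-1.0 / math.sqrt(2.0), 0.0, -1.0 / math.sqrt(2.0))


def sub(a, b):
    """Component-wise difference a - b of two 3D points."""
    return tuple(x - y for x, y in zip(a, b))


def dot(a, b):
    """Dot product of two 3D vectors."""
    return sum(x * y for x, y in zip(a, b))


def screen_size(pa, pb, pc):
    """Return (width, height, dot product of the two edges) for one screen."""
    right_edge, up_edge = sub(pb, pa), sub(pc, pa)
    width = math.sqrt(dot(right_edge, right_edge))
    height = math.sqrt(dot(up_edge, up_edge))
    return width, height, dot(right_edge, up_edge)
```

With this layout the left wall's lower-right corner coincides with the right wall's lower-left corner, which is the vertical edge the user initially faces.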

In this position, the user should see the cube from project 3. The sky box should also be visible. Use the stereo sky box from project 3.

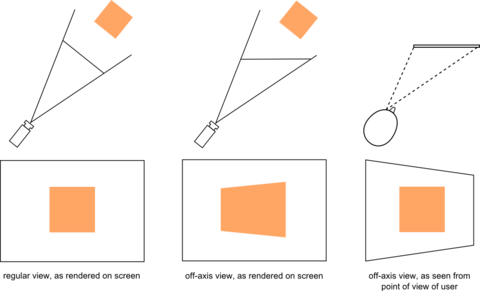

The big difference between this project and project 3 is that you need to render cube and sky box to the simulated CAVE walls, instead of directly to the VR display. You need to do this by rendering the scene six times to off-screen buffers. Six times because there are three displays, and you need to render each separately from each eye position (left and right eye). To render from the different eye positions of a head-tracked user, you are going to need to render with an asymmetrical projection matrix. This picture illustrates the skewed projection pyramids originating from the two eyes:
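One common way to build such an asymmetrical projection takes three corners of a screen and the eye position and derives the frustum extents on the near plane. The sketch below is in plain Python rather than Unity C#, and makes several assumptions that are not from the assignment: a right-handed frame, OpenGL-style clip space, and the hypothetical function names `off_axis_frustum`, `frustum_matrix` and `screen_view_matrix`. It is not necessarily the exact method covered in discussion.

```python
import math


def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def normalize(v):
    length = math.sqrt(dot(v, v))
    return tuple(x / length for x in v)


def screen_basis(pa, pb, pc):
    """Right, up and normal unit vectors of a screen given its lower-left,
    lower-right and upper-left corners (normal points toward the viewer)."""
    vr = normalize(sub(pb, pa))
    vu = normalize(sub(pc, pa))
    return vr, vu, normalize(cross(vr, vu))


def off_axis_frustum(pa, pb, pc, eye, near):
    """Return (left, right, bottom, top) extents on the near plane."""
    vr, vu, vn = screen_basis(pa, pb, pc)
    va, vb, vc = sub(pa, eye), sub(pb, eye), sub(pc, eye)
    dist = -dot(va, vn)            # distance from the eye to the screen plane
    if dist <= 0.0:
        raise ValueError("eye must be in front of the screen")
    scale = near / dist
    return (dot(vr, va) * scale, dot(vr, vb) * scale,
            dot(vu, va) * scale, dot(vu, vc) * scale)


def frustum_matrix(l, r, b, t, n, f):
    """OpenGL-style perspective matrix for an arbitrary frustum (row-major)."""
    return [[2 * n / (r - l), 0.0, (r + l) / (r - l), 0.0],
            [0.0, 2 * n / (t - b), (t + b) / (t - b), 0.0],
            [0.0, 0.0, -(f + n) / (f - n), -2 * f * n / (f - n)],
            [0.0, 0.0, -1.0, 0.0]]


def screen_view_matrix(pa, pb, pc, eye):
    """View matrix that aligns the camera axes with the screen and moves the
    eye to the origin, so that the screen lies at negative z."""
    vr, vu, vn = screen_basis(pa, pb, pc)
    return [[vr[0], vr[1], vr[2], -dot(vr, eye)],
            [vu[0], vu[1], vu[2], -dot(vu, eye)],
            [vn[0], vn[1], vn[2], -dot(vn, eye)],
            [0.0, 0.0, 0.0, 1.0]]
```

An eye centered in front of a screen yields a symmetric frustum (`left == -right`, `bottom == -top`); moving the eye sideways shears it. The same function would be called once per screen and per eye, which gives the six renders mentioned above.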

The pictures below illustrate what an off axis view looks like on the screen and from the user's point of view:

Head-in-Hand Mode: To see the effect of rendering from the point of view of another user, you need to be able to switch the head position to one of the controllers. The switch should happen when the right controller's trigger is pulled, and the viewpoint should remain at the controller and update while the controller is moving, until the trigger is released. When the trigger is released, the viewpoint should switch back to the user's actual head position. Note that because we're rendering in stereo, you'll need to create two camera (=eye) positions at the controller, one offset to the left and one to the right, each by half the average human eye distance. The average eye distance is 65 millimeters, so each eye is offset by 32.5 millimeters. Switching the viewpoint should only affect the viewpoint used for rendering on the CAVE displays. The HMD's viewpoint should always be tracked from its correct position for a view of the space the CAVE is in. Note that rotations of the controller should not rotate the scene, but because the eye/camera positions rotate about a common center point, the stereo effect on the CAVE walls should change: a 180 degree rotation should invert the stereo effect.
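The two eye positions at the controller can be sketched as follows. This is a simplified illustration that assumes a right-handed, y-up frame and considers only rotation about the vertical axis; a real implementation would use the controller's full orientation. The name `controller_eyes` is hypothetical.

```python
import math

EYE_DISTANCE = 0.065  # average human eye distance in meters


def controller_eyes(position, yaw_radians, eye_distance=EYE_DISTANCE):
    """Return (left_eye, right_eye) positions centered on the controller.

    Only yaw (rotation about the world y axis) is modeled here; the local
    right axis at yaw 0 is assumed to be +x.
    """
    right_axis = (math.cos(yaw_radians), 0.0, -math.sin(yaw_radians))
    half = eye_distance / 2.0
    left_eye = tuple(p - half * r for p, r in zip(position, right_axis))
    right_eye = tuple(p + half * r for p, r in zip(position, right_axis))
    return left_eye, right_eye
```

Turning the controller by 180 degrees swaps the two positions, which is why the stereo effect on the CAVE walls inverts.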

Freeze Mode: We also need a way to freeze the viewpoint the CAVE renders from, regardless of whether it's rendering from head or controller location. For this we'll use the 'B' button. When it is pressed, the viewpoint the CAVE renders from should freeze. When the 'B' button is pressed again, the view should unfreeze. In normal mode, when your head position changes, the perspective of the image changes with it, so you always see the stereo image in the correct position. In freeze mode, the images on each wall stay static until you unfreeze, as if they were wallpaper attached to the three CAVE walls.
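The interplay between head-in-hand mode and freeze mode can be sketched as a small per-frame state machine. This is one possible interpretation, with the hypothetical class name `CaveViewpoint`; it assumes the application polls the trigger and 'B' button once per frame, and that freezing keeps the viewpoint from the frame before the button press.

```python
class CaveViewpoint:
    """Selects the position the CAVE walls are rendered from each frame."""

    def __init__(self):
        self.frozen = False
        self._b_was_down = False   # previous frame's 'B' state, for edge detection
        self._viewpoint = None     # last viewpoint handed to the CAVE renderer

    def update(self, head, controller, trigger_down, b_down):
        # Toggle freeze only on the frame where 'B' goes from up to down.
        if b_down and not self._b_was_down:
            self.frozen = not self.frozen
        self._b_was_down = b_down

        # While frozen, keep the stored viewpoint; otherwise follow the
        # controller while the trigger is held and the head when it is not.
        if not self.frozen or self._viewpoint is None:
            self._viewpoint = controller if trigger_down else head
        return self._viewpoint
```

The HMD camera is deliberately not part of this class, since the headset view is always tracked from the real head position.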

Debug Mode: To help with debugging, when you've switched the viewpoint to the controller, you should enable debug mode with the 'A' button (activate debug mode while 'A' is depressed, disable upon release). In debug mode, the user sees not only the images on the virtual CAVE screens, but also all six viewing pyramids (technically they're sheared pyramids). These pyramids start in each of the eye positions and go to the corners of each of the screens. Three screens times two eyes is six pyramids. You can visualize the pyramids in wireframe mode with lines outlining them, or use solid surfaces. In either case, you should draw those pyramids that indicate the view from the left eye in green, those from the right eye should be red. You need to render the debug pyramids in headset coordinates, whereas the cube and the sky box need to be rendered in CAVE coordinates.

The difference between the two coordinate spaces is that what you render in headset space can be rendered directly to your HMD screens, whereas everything in CAVE space needs to be rendered to the off-screen buffers.
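The line segments for the debug pyramids can be generated from the eye positions and screen corners. The sketch below only builds the geometry and leaves drawing to the engine; it assumes each screen is given as lower-left, lower-right and upper-left corners, and the names `pyramid_edges` and `debug_lines` are hypothetical.

```python
GREEN = (0.0, 1.0, 0.0)   # left-eye pyramids
RED = (1.0, 0.0, 0.0)     # right-eye pyramids


def fourth_corner(pa, pb, pc):
    """Upper-right corner of a screen from its other three corners."""
    return tuple(b + c - a for a, b, c in zip(pa, pb, pc))


def pyramid_edges(eye, pa, pb, pc):
    """Eight segments: four from the eye to the corners, four around the screen."""
    corners = [pa, pb, fourth_corner(pa, pb, pc), pc]   # in loop order
    edges = [(eye, corner) for corner in corners]
    edges += [(corners[i], corners[(i + 1) % 4]) for i in range(4)]
    return edges


def debug_lines(screens, left_eye, right_eye):
    """Return (start, end, color) tuples for all pyramids of all screens."""
    lines = []
    for pa, pb, pc in screens:
        for eye, color in ((left_eye, GREEN), (right_eye, RED)):
            lines += [(a, b, color) for a, b in pyramid_edges(eye, pa, pb, pc)]
    return lines
```

For three screens and two eyes this produces 6 pyramids with 8 segments each, half of them green and half red.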

Grading

You'll get points for the following milestones. We recommend working on them in the sequence given below.

- Create CAVE geometry: 3 squares: 5 points

- Render the cube to one of the CAVE walls in mono using an off-screen buffer, from a fixed viewpoint. Place the cube so it is between the user and the CAVE walls: 20 points

- Render the scene with the sky box in mono to the CAVE walls, from a fixed viewpoint: 10 points

- Render scene to one CAVE wall in mono from the user's eyes (need to use head tracking and off-center projection math): 20 points

- Render scene to one CAVE wall in stereo from user's eyes: 10 points

- Render scene to two CAVE walls in stereo from user's eyes: 5 points

- Render scene to all three CAVE screens in stereo from user's eyes: 5 points

- Ability to switch viewpoint to controller with the trigger: 5 points

- Allow freezing/unfreezing the viewpoint with the 'B' button: 5 points

- Add the wire frame debugging functionality to the 'A' button: 15 points

Grading:

- -5 if off-center projection "almost" works: only issue is that images don't align between CAVE walls, but head tracking works

Notes:

- You need to always render stereo images to the HMD. When the above milestone list mentions rendering in mono, it means that the image on the CAVE wall can be monoscopic, but the HMD should still render that image separately from each eye point's perspective.

- It can be helpful to render the sphere from project 1 at each controller position, in CAVE space, to make sure it renders in the right place.

- Many of the milestones are cumulative, for instance, if you show us that you can render a stereo image to a screen you don't also have to show us that you can render mono to get those points.

Extra Credit (10 points max.)

You can choose between the following options for extra credit. If you work on multiple options, their points will add up but will not exceed 10 points in total. For each option, assign a button on one of the controllers to turn the option on or off.

- Assume that the CAVE is driven by two projectors for each wall (one for each eye) using passive polarized stereo. Simulate the failure of one projector (just one eye, not an entire wall): a button press disables a random projector, which means you render an all-black square for that eye on that wall. (3 points)

- Simulate the virtual CAVE more realistically by implementing one or more of the options below.

- Brightness falloff on LCD screens: the brightness of the pixels on an LCD screen depends on the angle from which the user looks at the screen. When directly in front of the screen, the screen is brightest. When looking from a steep angle, it is darkest. Use this simplified model to simulate the brightness falloff for an LCD display based CAVE: calculate the angle between the vector from the viewer (eye point) to the center of each screen, and the normal to the screen. If the angle is zero, render the image unmodified. For angles between 0 and 90 degrees, reduce the brightness of the image linearly with the angle, so that it reaches zero (black) at 90 degrees. Reducing the brightness of the texture can be done by reducing the brightness of the polygon it's mapped to. (5 points) You get an additional 5 points if you do this on a per-pixel basis: calculate the angle from eye to each pixel separately and reduce the pixel's brightness accordingly, in an HLSL shader.

- Vignetting on projected screens: projection screens aren't illuminated homogeneously across their surface, but they are brightest where the projector lamp is and fall off towards the edges. Assume the projectors are on the optical axis of the walls (i.e., centered) at 2.4 meters distance behind the screens. Vignetting is a circular effect. Render the pixels at normal brightness at the spot where the line from eye to projector intersects the screen. Then make the brightness fall off linearly as the angle to the pixels decreases. You'll have to use an HLSL shader like in the extended version of problem 4.1 because the brightness reduction is different for each pixel. (10 points)

- Linear polarization effect in passive stereo CAVE: assume the virtual CAVE was built with linearly polarizing projectors and glasses. Linear polarization filters work best only when they're oriented the same as the filter in the projector. When you turn the glasses, they will switch the polarization axis every 90 degrees, and only partly filter the light in-between. For each combination of screen and eye, calculate the angle the eye is oriented compared to the screen. Left and right eye should have opposite polarization (one has vertical, the other horizontal polarization). As the user's head turns you need to calculate the angle between the head and each screen, and linearly interpolate the blending factors for each image. When rendering the screens you need to blend left and right eye image, each with its blending factor. (10 points)
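The simplified per-screen LCD falloff model from the first option can be sketched as a single function. It assumes the screen normal points into the CAVE (toward the viewer), so the angle is measured between that normal and the direction from the screen center to the eye. The name `lcd_brightness` is hypothetical, and the returned factor would be multiplied into the color of the polygon the texture is mapped to.

```python
import math


def lcd_brightness(eye, screen_center, screen_normal):
    """Brightness factor in [0, 1]: 1 when viewed head-on, 0 at 90 degrees."""
    to_eye = [e - c for e, c in zip(eye, screen_center)]
    eye_len = math.sqrt(sum(v * v for v in to_eye))
    normal_len = math.sqrt(sum(v * v for v in screen_normal))
    if eye_len == 0.0 or normal_len == 0.0:
        raise ValueError("eye must not coincide with the screen center, "
                         "and the normal must be non-zero")
    cos_angle = sum(a * b for a, b in zip(to_eye, screen_normal))
    cos_angle /= eye_len * normal_len
    cos_angle = max(-1.0, min(1.0, cos_angle))   # guard against rounding
    angle_deg = math.degrees(math.acos(cos_angle))
    # Linear falloff with the angle; clamp to black at 90 degrees and beyond.
    return max(0.0, 1.0 - angle_deg / 90.0)
```

The per-pixel variant would evaluate the same expression in a shader with the pixel's world position in place of `screen_center`.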

Submission Instructions

Once you are done implementing the project, record a video demonstrating all the functionality you have implemented, just like for projects 1-3. Below we are repeating those instructions.

The video should be no longer than 5 minutes, and can be substantially shorter. The video format should ideally be MP4, but any other format the graders can view will also work.

While recording the video, record your voice explaining what aspects of the project requirements are covered.

In this project, since some of the components require rendering different images to each eye, you need to use this tool to record video from both of the Oculus Quest's screens: Record Oculus Quest using scrcpy

- On any platform, you should be able to use Zoom to record a video.

- For Windows:

- Windows 10 has a built-in screen recorder

- If that doesn't work, the free OBS Studio is very good.

- On Macs you can use QuickTime.

If you can connect your headset to your computer with a video cable and use a Windows computer, you can use the Compositor Mirror tool, which is located on your computer in C:\Program Files\Oculus\Support\oculus-diagnostics\OculusMirror.exe. It's important that you record both the left and the right eye.

Components of your submission:

- Video: Upload the video at the Assignment link on Canvas. Also add a text comment stating which functionality you have or have not implemented and what extra credit you have implemented. If you couldn't implement something in its entirety, please state which parts you did implement and expect to get points for.

- Example 1: I've done the base project with no issues. No extra credit.

- Example 2: Everything works except an issue with x: I couldn't get y to work properly.

- Example 3: Sections 1, 2 and 4 are fully implemented.

- Example 4: The base project is complete and I did z for extra credit.

- Source code: Create a .zip file of your Unity project and submit it to Canvas. You can reduce the size of this file by hosting your project on GitHub with a .gitignore file for Unity, then downloading your project as a .zip file directly from GitHub.

- Executable: If the .zip file with your Unity project includes the executable of your app, you are done. Otherwise, build your Unity project into an Android .apk, Windows .exe file or the Mac equivalent and upload it to Canvas as a .zip file.