| (246 intermediate revisions by 24 users not shown) |

| Line 1: | Line 1: |

| − | =<b>Active Projects</b>= | + | =<b>Most Recent Projects</b>= |

| | | | |

| − | ===[[3D Reconstruction of Photographs ]] (Matthew Religioso, 2011-)=== | + | ===Immersive Visualization Center (Jurgen Schulze, 2020-2021)=== |

| | <table> | | <table> |

| | <tr> | | <tr> |

| − | <td>[[Image:Wiki.jpg]]</td> | + | <td>[[Image:IVC-Color-Logo-01.png|250px]]</td> |

| − | <td width=20></td> | + | <td>Dr. Schulze founded the [http://ivc.ucsd.edu Immersive Visualization Center (IVC)] to bring together immersive visualization researchers and students with researchers from domains that need immersive visualization using virtual reality or augmented reality technologies. The IVC is located at UCSD's [https://qi.ucsd.edu/ Qualcomm Institute]. |

| − | <td>Reconstruct static objects from photographs into 3D models using the Bundler Algorithm and the Texturing Algorithm developed by prior students. This project's goal is to optimize the Texturing to maximize photorealism and efficiency, and run the resulting application in the StarCAVE. </td>

| + | </td> |

| − | <td width=20></td> | + | |

| | </tr> | | </tr> |

| | </table> | | </table> |

| | <hr> | | <hr> |

| | | | |

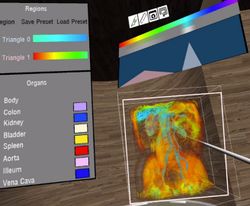

| − | ===[[Real-Time Geometry Scanning System]] (Daniel Tenedorio, 2011-)=== | + | ===3D Medical Imaging Pilot (Larry Smarr, Jurgen Schulze, 2018-2021)=== |

| | <table> | | <table> |

| | <tr> | | <tr> |

| − | <td>[[Image:dtenedor-wiki-icon.png]]</td> | + | <td>[[Image:3dmip.jpg|250px]]</td> |

| − | <td width=20></td> | + | <td>In this [https://ucsdnews.ucsd.edu/feature/helmsley-charitable-trust-grants-uc-san-diego-4.7m-to-study-crohns-disease $1.7M collaborative grant with Dr. Smarr] we have been working on developing software tools to support surgical planning, training and patient education for Crohn's disease.<br> |

| − | <td>This interactive system constructs a 3D model of the environment as a user moves an infrared geometry camera around a room. We display the intermediate representation of the scene in real-time on virtual reality displays ranging from a single computer monitor to immersive, stereoscopic projection systems like the StarCAVE.</td>

| + | '''Publications:''' |

| − | <td width=20></td>

| + | * Lucknavalai, K., Schulze, J.P., [http://web.eng.ucsd.edu/~jschulze/publications/Lucknavalai2020.pdf "Real-Time Contrast Enhancement for 3D Medical Images using Histogram Equalization"], In Proceedings of the International Symposium on Visual Computing (ISVC 2020), San Diego, CA, Oct 5, 2020 |

| | + | * Zhang, M., Schulze, J.P., [http://web.eng.ucsd.edu/~jschulze/publications/Zhang2021.pdf "Server-Aided 3D DICOM Image Stack Viewer for Android Devices"], In Proceedings of IS&T The Engineering Reality of Virtual Reality, San Francisco, CA, January 21, 2021 |

| | + | '''Videos:''' |

| | + | * [https://drive.google.com/file/d/1pi1veISuSlj00y82LfuPEQJ1B8yXWkGi/view?usp=sharing VR Application for Oculus Rift S] |

| | + | * [https://drive.google.com/file/d/1MH2L6yc5Un1mo4t37PRUTXq8ptFIbQf3/view?usp=sharing Android companion app] |

| | + | </td> |

| | </tr> | | </tr> |

| | </table> | | </table> |

| | <hr> | | <hr> |

| | | | |

| − | ===[[Real-Time Meshing of Dynamic Point Clouds]] (Robert Pardridge, James Lue, 2011-)=== | + | ===[https://www.darpa.mil/program/explainable-artificial-intelligence XAI] (Jurgen Schulze, 2017-2021)=== |

| | <table> | | <table> |

| | <tr> | | <tr> |

| − | <td>[[Image:Marchingcubes.png]]</td> | + | <td>[[Image:xai-icon.jpg|250px]]</td> |

| − | <td>This project involves generating a triangle mesh over a point cloud that grows dynamically. The goal is to implement a meshing algorithm that is fast enough to keep up with the streaming input from a scanning device. We are using a CUDA implementation of the Marching Cubes algorithm to triangulate, in real time, a point cloud obtained from the Kinect depth camera. </td> | + | <td>The effectiveness of AI systems is limited by the machine’s current inability to explain its decisions and actions to human users. The Department of Defense (DoD) is facing challenges that demand more intelligent, autonomous, and symbiotic systems. The Explainable AI (XAI) program aims to '''create a suite of machine learning techniques that produce more explainable models''', while maintaining a high level of learning performance (prediction accuracy); and enable human users to understand, appropriately trust, and effectively manage the emerging generation of artificially intelligent partners. This project is a collaboration with the [https://www.sri.com/ Stanford Research Institute (SRI) in Princeton, NJ]. Our responsibility is the development of the web interface, as well as in-person user studies, for which we have recruited over 100 subjects so far.<br> |

| | + | '''Publications:''' |

| | + | * Alipour, K., Schulze, J.P., Yao, Y., Ziskind, A., Burachas, G., [http://web.eng.ucsd.edu/~jschulze/publications/Alipour2020a.pdf "A Study on Multimodal and Interactive Explanations for Visual Question Answering"], In Proceedings of the Workshop on Artificial Intelligence Safety (SafeAI 2020), New York, NY, Feb 7, 2020 |

| | + | * Alipour, K., Ray, A., Lin, X., Schulze, J.P., Yao, Y., Burachas, G.T., [http://web.eng.ucsd.edu/~jschulze/publications/Alipour2020b.pdf "The Impact of Explanations on AI Competency Prediction in VQA"], In Proceedings of the IEEE Conference on Humanized Computing and Communication with Artificial Intelligence (HCCAI 2020), Sept 22, 2020 |

| | + | </td> |

| | </tr> | | </tr> |

| | </table> | | </table> |

| | <hr> | | <hr> |

| | | | |

| − | ===[[LSystems]] (Sarah Larsen 2011-)=== | + | ===DataCube (Jurgen Schulze, Wanze Xie, Nadir Weibel, 2018-2019)=== |

| | <table> | | <table> |

| | <tr> | | <tr> |

| − | <td>[[Image:LSystems2.png]]</td> | + | <td>[[Image:datacube.jpg|250px]]</td> |

| − | <td>Creates an LSystem and displays it with either line or cylinder connections</td> | + | <td>In this project we developed a [https://www.pwc.com/us/en/industries/health-industries/library/doublejump/bodylogical-healthcare-assistant.html Bodylogical]-powered augmented reality tool for the Microsoft HoloLens to analyze the health of a population, such as the employees of a corporation.<br> |

| − | </tr>

| + | '''Publications:''' |

| | + | * Xie, W., Liang, Y., Johnson, J., Mower, A., Burns, S., Chelini, C., D'Alessandro, P., Weibel, N., Schulze, J.P., [http://web.eng.ucsd.edu/~jschulze/publications/Xie2020.pdf "Interactive Multi-User 3D Visual Analytics in Augmented Reality"], In Proceedings of IS&T The Engineering Reality of Virtual Reality, San Francisco, CA, January 30, 2020 |

| | + | '''Videos:''' |

| | + | * [https://drive.google.com/file/d/1Ba6uS9PFiHTh4IKcVwQWPgMGERNr-5Fd/view?usp=sharing Live demonstration] |

| | + | * [https://drive.google.com/file/d/1_DcW8RZJvUhGmYEwIbLgJ2W0lWVdsLw7/view?usp=sharing Feature summary] |

| | + | </td> </tr> |

| | </table> | | </table> |

| | <hr> | | <hr> |

| | | | |

| − | ===[[Android Controller]] (Jeanne Wang 2011-)=== | + | ===[[Catalyst]] (Tom Levy, Jurgen Schulze, 2017-2019)=== |

| | <table> | | <table> |

| | <tr> | | <tr> |

| − | <td>[[Image:androidscreenshot.png]]</td> | + | <td>[[Image:cavekiosk.jpg|250px]]</td> |

| − | <td>An Android-based controller for a visualization system such as the StarCAVE or a multiscreen grid.</td> | + | <td>This collaborative grant with Prof. Levy is a UCOP-funded $1.07M Catalyst project on cyber-archaeology, titled [https://ucsdnews.ucsd.edu/pressrelease/new_3_d_cavekiosk_at_uc_san_diego_brings_cyber_archaeology_to_geisel "3-D Digital Preservation of At-Risk Global Cultural Heritage"]. The goal was the development of software and hardware infrastructure to support the digital preservation and dissemination of 3D cyber-archaeology data, such as point clouds, panoramic images and 3D models.<br> |

| | + | '''Publications:''' |

| | + | * Levy, T.E., Smith, C., Agcaoili, K., Kannan, A., Goren, A., Schulze, J.P., Yago, G., [http://web.eng.ucsd.edu/~jschulze/publications/Levy2020.pdf "At-Risk World Heritage and Virtual Reality Visualization for Cyber-Archaeology: The Mar Saba Test Case"], In Forte, M., Murteira, H. (Eds.): "Digital Cities: Between History and Archaeology", April 2020, pp. 151-171, DOI: 10.1093/oso/9780190498900.003.0008 |

| | + | * Schulze, J.P., Williams, G., Smith, C., Weber, P.P., Levy, T.E., "CAVEkiosk: Cultural Heritage Visualization and Dissemination", a chapter in the book "A Sense of Urgency: Preserving At-Risk Cultural Heritage in the Digital Age", Editors: Lercari, N., Wendrich, W., Porter, B., Burton, M.M., Levy, T.E., accepted by Equinox Publishing for publication in 2021</td> |

| | </tr> | | </tr> |

| | </table> | | </table> |

| | <hr> | | <hr> |

| | | | |

| − | ===[[Object-Oriented Interaction with Large High Resolution Displays]] (Lynn Nguyen 2011-)=== | + | ===[[CalVR]] (Andrew Prudhomme, Philip Weber, Giovanni Aguirre, Jurgen Schulze, since 2010)=== |

| | <table> | | <table> |

| | <tr> | | <tr> |

| − | <td>[[Image:Lynn_selected.jpg]]</td> | + | <td>[[Image:Calvr-logo4-200x75.jpg|250px]]</td> |

| − | <td>Investigate the practicality of using smartphones to interact with large high resolution displays. To accomplish such a | + | <td>CalVR is our virtual reality middleware (a.k.a. VR engine), which we have been developing for our graphics clusters. It runs on anything from a laptop to a large multi-node CAVE, and builds under Linux, Windows and MacOS. More information about how to obtain the code and build it can be found on our [[CalVR | CalVR page for software developers]].<br> |

| − | task, it is not necessary to find the spatial location of the phone relative to the display; rather, we can identify the object a user wants to interact with through image recognition. The interaction with the object itself can be done by using the smartphone as the medium. The feasibility of this concept is investigated by implementing a prototype.</td>

| + | '''Publications:''' |

| | + | * J.P. Schulze, A. Prudhomme, P. Weber, T.A. DeFanti, [http://web.eng.ucsd.edu/~jschulze/publications/Schulze2013.pdf "CalVR: An Advanced Open Source Virtual Reality Software Framework"], In Proceedings of IS&T/SPIE Electronic Imaging, The Engineering Reality of Virtual Reality, San Francisco, CA, February 4, 2013, ISBN 9780819494221</td> |

| | </tr> | | </tr> |

| | </table> | | </table> |

| | <hr> | | <hr> |

| | | | |

| − | ===[[ScreenMultiViewer]] (John Mangan, Phi Hung Nguyen 2010-)===

| + | =<b>[[Past Projects|Older Projects]]</b>= |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:image-missing.jpg]]</td>

| + | |

| − | <td>A display mode within CalVR that allows two users to simultaneously use head trackers within either the StarCAVE or the NexCAVE, with minimal immersion loss.</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | ===[[MatEdit]] (Khanh Luc, 2011)===

| + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:Screenshot_MaterialEditor_small.jpg]]</td>

| + | |

| − | <td>A graphical user interface for programmers to adjust and preview material properties on an object. Once a programmer determines the proper parameters for the look of the material, they can generate code to achieve that look.</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | ===[[Kinect UI for 3D Pacman]] (Tony Lu, 2011)===

| + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:Pacmanscreenshot_small.png]]</td>

| + | |

| − | <td>An experiment with the Kinect to implement a device-free, gesture-controlled user interface in the StarCAVE to run a 3D Pacman game.</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | ===[[TelePresence]] (Seth Rotkin, Mabel Zhang, 2010-)===

| + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:TelePresenceDualView_thumb.png]]</td>

| + | |

| − | <td>A virtual representation of the Cisco TelePresence telecommunications system. The room includes a full 3D reproduction of an actual TelePresence 3000 room, live streaming video footage, pre-recorded stereo videos, a 3D PowerPoint projection zone, and a movable floating 3D model.</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | ===[[CaveCAD]] (Lelin Zhang 2009-)===

| + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:CaveCAD.jpg]]</td>

| + | |

| − | <td>Calit2 researcher Lelin Zhang provides architectural designers with a purely immersive 3D experience in the virtual reality environment of the StarCAVE.</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | ===[[GreenLight Blackbox]] (Mabel Zhang, Andrew Prudhomme, Seth Rotkin, Philip Weber, Grant van Horn, Connor Worley, Quan Le, Hesler Rodriguez, 2008-)===

| + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:BlackboxThumb0001.png|Sun Blackbox rear view]]</td>

| + | |

| − | <td>Mabel Zhang has been working on 3D modeling tasks at IVL. Her first task was to model the Calit2 building, which she completed as a Calit2 summer intern. We then hired her as a student worker to create a 3D model of the SUN Mobile Data Center which is a core component of the instrument procured by the [http://wiki.greenlight.calit2.net GreenLight project]. Andrew added an on-line connection to the Blackbox at UCSD to display the output of the power modules. Their project was demonstrated at the Supercomputing Conference 2008 in Austin, Texas.</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | ===Neuroscience and Architecture (Daniel Rohrlick, Michael Bajorek, Mabel Zhang, Lelin Zhang 2007)===

| + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:neuroscience.jpg]]</td>

| + | |

| − | <td>This project started off as a Calit2 seed-funded project to do a pilot study with the Swartz Center for Neuroscience in which a human subject has to find their way to specific locations in the Calit2 building while their brain waves are being scanned by a high resolution EEG. Michael's responsibility was the interface between the StarCAVE and the EEG system, to transfer tracker data and other application parameters to allow for the correlation of EEG data with VR parameters. Daniel created the 3D model of the New Media Arts wing of the building using 3ds Max. Mabel refined the Calit2 building geometry. This project has been receiving funding from HMC.</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | ===[[Volumetric Blood Flow Rendering]] (Yuri Bazilevs, Jurgen Schulze, Alison Marsden, Greg Long, Han Kim 2011)===

| + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:VolumeBloodFlow-small.png]]</td>

| + | |

| − | <td>An extension of the BloodFlow (2009-2010) Project. We attempt to create more useful visualizations of blood flow in a blood vessel, through volume rendering techniques, and tackle challenges resulting from volumetric rendering of large datasets.</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | =<b>Inactive Projects</b>= | + | |

| − | | + | |

| − | | + | |

| − | ===[[Meshing and Texturing Point Clouds]] (Robert Pardridge, Vikash Nandkeshwar, James Lue, 2011)===

| + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:image-missing.jpg]]</td>

| + | |

| − | <td>These students are using data from the previous PhotosynthVR project to create 3D geometry and textures.</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | | + | |

| − | ===Khirbat en-Nahas (Kyle Knabb, Jurgen Schulze, Connor DeFanti, 2008-2010)===

| + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:khirbit.jpg]]</td>

| + | |

| − | <td>For the past ten years, a joint University of California, San Diego and Department of Antiquities of Jordan research team led by Professor Tom Levy and Dr. Mohammad Najjar has been investigating the role of mining and metallurgy in social evolution from the Neolithic period (ca. 7500 BC) to medieval Islamic times (ca. 12th century AD). Kyle Knabb has been working with the IVL as a master's student under Professor Thomas Levy from the archaeology department. He created a 3D visualization for the StarCAVE which displays several excavation sites in Jordan, along with artifacts found there, and radiocarbon dating sites. In a [[Digital Archaeology|related project]] we acquired stereo photography from the excavation site in Jordan.</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | ===[[BloodFlow]] (Yuri Bazilevs, Jurgen Schulze, Alison Marsden, Ming-Chen Hsu, Kenneth Benner, Sasha Koruga; 2009-2010)===

| + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:VelocityVectorField-small.png]]</td>

| + | |

| − | <td>In this project, we are working on visualizing the blood flow in an artery, as simulated by Professor Bazilevs at UCSD. Read the [[Blood Flow Manual]] for usage instructions.<br>Videos and pictures of the visualizations in 2D can be found [http://acsweb.ucsd.edu/~skoruga/bloodflow here], and the corresponding iPhone versions of the videos can be downloaded [[Media:iphone_videos.zip|here]].</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | ===PanoView360 (Andrew Prudhomme, 2008-2010)===

| + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:image-missing.jpg]]</td>

| + | |

| − | <td>In collaboration with Professor Dan Sandin from EVL, Andrew created a COVISE plugin to display photographer Richard Ainsworth's panoramic stereo images in the StarCAVE and the Varrier.</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | ===PhotosynthVR (Sasha Koruga, Haili Wang, Phi Nguyen, Velu Ganapathy; 2009-)===

| + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:sungod-small.png]]</td>

| + | |

| − | <td>UCSD student Sasha Koruga has created a Photosynth-like system with which he can display a number of photographs in the StarCAVE. The images appear and disappear as the user moves around the photographed object. Read the [[PhotosynthVR Manual]] for usage instructions.</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | ===[[Mipmapped_Volume_Rendering|Multi-Volume Rendering]] (Han Kim, 2009)===

| + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:Volume_rendering.jpg]]</td>

| + | |

| − | <td>The goal of multi-volume rendering is to visualize multiple volume data sets. Each volume has three or more channels.</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | ===How Much Information (Andrew Prudhomme, 2008-2009)===

| + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:hmi.jpg]]</td>

| + | |

| − | <td>In this project we visualize the data from various collaborating companies which provide us with data stored on hard disks or data transferred over networks. In the first stage, Andrew created an application which can display the directory structures of 70,000 hard disk drives of Microsoft employees, sampled over the course of five years. The visualization uses an interactive hyperbolic 3D graph to visualize the directory trees and to compare different users' trees, and it uses various novel data display methods like wheel graphs to display file sizes, etc. More information about this project can be found at [http://hmi.ucsd.edu/].</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | ===[[Hotspot Mitigation]] (Jordan Rhee, 2008-2009)===

| + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:hotspot.png]]</td>

| + | |

| − | <td>ECE Undergraduate student Jordan Rhee has been working on algorithms for hotspot mitigation in projected virtual reality environments such as the StarCAVE. His COVISE plugin is based on a GLSL shader which performs the mitigation in real-time, minimizing the performance impact on the application.</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | ===ATLAS in Parallel (Ruth West, Daniel Tenedorio, Todd Margolis, 2008-2009)===

| + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:image-missing.jpg]]</td>

| + | |

| − | <td>UCSD graduate Daniel Tenedorio is working on parallelizing the simulation algorithm of the Atlas in Silico art piece, supported by an NSF-funded SGER grant. Daniel's goal is to be able to support the entire CAMERA data set, consisting of 17 million data points (open reading frames). Daniel is going to use the CUDA architecture of NVIDIA's graphics cards to achieve a considerable performance increase.</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | ===Animated Point Clouds (Daniel Tenedorio, Rachel Chu, Sasha Koruga, 2008)===

| + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:rachel.jpg]]</td>

| + | |

| − | <td>UCSD students Daniel Tenedorio and Rachel Chu collaborated as PRIME students between NCHC in Taiwan and Osaka University. They worked on a 3D teleconferencing project, for which they used data from a 3D video scanner at Osaka University and streamed it across the network between NCHC and Osaka. Their VR application now runs in the StarCAVE at UCSD. Sasha Koruga has been continuing this project in the winter quarter 2009.</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | ===6DOF Tracking with Wii Remotes (Sage Browning, Philip Weber, 2008)===

| + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:image-missing.jpg]]</td>

| + | |

| − | <td>Past research at Calit2 has developed an affordable means of creating large high-resolution computer displays, the OptiPortals. Contemporary input devices for tiled display walls, which go beyond the capabilities a desktop mouse can offer, are either very expensive or less than satisfactory. Some controllers can cost $40,000, which defeats the benefits of the relatively inexpensive OptiPortal. Alternatively, currently available inexpensive methods leave much to be desired: the same image output on the tiled display wall is also shown on a standard-sized control monitor using a standard mouse as input, leaving the user without a means of interacting directly with the OptiPortal. In order to create an improved interface for the OptiPortal, research must be done in the area of 3D location tracking that is accurate to within a few millimeters, yet maintains a reasonable cost of production and an intuitive design. The focus of this project is to use multiple Wii Remotes for six-degree-of-freedom tracking. We are going to integrate this new input device scheme into the COVISE software, so that existing software applications can directly benefit from this research.</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | ===Spatialized Sound (Toshiro Yamada, Suketu Kamdar, 2008)===

| + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:image-missing.jpg]]</td>

| + | |

| − | <td>Peter Otto's group created a 3D sound system which we can control from the StarCAVE by positioning a sound source in a 3D environment. In our demonstration, the sound source is represented as a cone and can be placed in the pre-function area on the 1st floor of the virtual Calit2 building.</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | ===Video in Virtual Environments (Han Kim, 2008-2010)===

| + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:mipmap-video.jpg]]</td>

| + | |

| − | <td>Computer Science graduate student Han Kim has developed an efficient "mip-map" algorithm that "shrinks" high-resolution video content so that it can be played interactively in VEs. He has also created several optimization solutions for sustaining a stable video playback frame rate when the video is projected onto non-rectangular VE screens.</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | | + | |

| − | ===[[LOOKING|LOOKING/ORION]] (Philip Weber, 2007-2009)===

| + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:orion.jpg]]</td>

| + | |

| − | <td>Under the direction of Matt Arrott, Philip created an interactive 3D exploration tool for simulated ocean currents in Monterey Bay. The software uses OssimPlanet to display terrain and bathymetry of the area to give context to streamlines and iso-surfaces of the vector field representing the ocean currents.</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | ===[[OssimPlanet]] (Philip Weber, Jurgen Schulze, 2007)=== | + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:ossimplanet.jpg]]</td>

| + | |

| − | <td>In this project we ported the open source OssimPlanet library to COVISE, so that it can run in our VR environments, including the Varrier tiled display wall and the StarCAVE.</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | ===[[CineGrid]] (Leo Liu, 2007)===

| + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:fountain-code.jpg]]</td>

| + | |

| − | <td>Computer science graduate student Leo Liu has been working on new algorithms for long distance 4k video streaming, as well as 4k movie data management. He has been working in close collaboration with the iRODS group at the San Diego Supercomputer Center (SDSC) and has helped create iRODS servers for CineGrid content in San Diego, Amsterdam, and Keio University in Japan.</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | ===[[Virtual Calit2 Building]] (Daniel Rohrlick, Mabel Zhang, 2006-2009)===

| + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:calit2-building.jpg]]</td>

| + | |

| − | <td>The goal of the Virtual Calit2 Building is to provide an accurate and accessible virtual replica of the Calit2 building that can be used by any researcher or scientist to conduct projects or tests that require a detailed architectural layout.</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | ===[[Interaction with Multi-Spectral Images]] (Philip Weber, Praveen Subramani, Andrew Prudhomme, 2006-2009)===

| + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:adoration.jpg]]</td>

| + | |

| − | <td>In this project, a versatile high resolution image viewer was created which allows loading multi-gigabyte size image files to display in the StarCAVE and on tiled display walls. Images can consist of multiple layers, like Maurizio Seracini's multi-spectral painting scans. The "Walking into a DaVinci Masterpiece" demonstration in the auditorium uses this COVISE module. Other images we can display with this technique are the Golden Gate Bridge, a USGS map of La Jolla, NCMIR's mouse cerebellum, the Brain Observatory's monkey brain, and cancer cells from Andy Kummel's lab.</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | ===Finite Elements Simulation (Fabian Gerold, 2008-2009)===

| + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:fem-vis.jpg]]</td>

| + | |

| − | <td>In this project, Fabian created a simulation module with attached visualization capability. The simulation calculates the stress on a 3D structure which the user can design directly in the StarCAVE. Then the user can run a pre-recorded earthquake on the structure to see where and how strong the forces are on the various elements of the structure.</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | ===Palazzo Vecchio (Philip Weber, 2008)===

| + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:vecchio.jpg]]</td>

| + | |

| − | <td>Philip Weber created the PointModel viewer, which renders LIDAR point data sets like Palazzo Vecchio in the StarCAVE. Philip implemented a hierarchical rendering algorithm which allows rendering two million points in real-time. This application also supports other point data sets like UCSD's shake table at the Englekirk site.</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | ===Virtual Architectural Walkthroughs (Edward Kezeli, 2008)===

| + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:image-missing.jpg]]</td>

| + | |

| − | <td>We have 3D models of several buildings on and off UCSD's campus which we can bring up in the StarCAVE to view them life size. We got the Structural Engineering and Visual Arts building from Professor Kuester. The architectural firm HMC made available to us the following CAD models: the Rady School of Management at UCSD, HMC's offices in Los Angeles, and a section of the library at San Francisco State University. In collaboration with HMC, Edward created an art piece showing a gigantic Moebius torus floating over Los Angeles.</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | ===NASA (Andrew Prudhomme, 2008)===

| + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:image-missing.jpg]]</td>

| + | |

| − | <td>In collaboration with scientists from NASA, we have created several data sets which can be viewed in the StarCAVE. One is a 3D model of a site that the Mars rover Spirit photographed a short time after its right front wheel had jammed. Other demonstrations are a 3D model of the International Space Station, a 3D model of a Mars rover, as well as several 2D and 3D surround image panoramas of sites on Mars.</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | ===Digital Lightbox (Philip Weber, 2007-2008)===

| + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:digital-lightbox.jpg]]</td>

| + | |

| − | <td>In collaboration with Professor Jacopo Annese's Brain Observatory, Philip created an application for Jacopo's 5x3 tiled display wall which allows displaying up to 90 different cross sections of monkey brains at once. Selected scans exist at super high resolution of hundreds of millions of pixels and can be magnified across the whole wall.</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | ===Research Intelligence Portal (Alex Zavodny, Andrew Prudhomme, 2007-2008)===

| + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:image-missing.jpg]]</td>

| + | |

| − | <td>Alex and Andrew created the Research Intelligence kiosk application under a Calit2 grant. They use a visualization technique which is based on smooth transitions between different states of the displayed 2D and 3D geometry. This demonstration runs on a 2x2 tiled display wall on the 5th floor.</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | ===New San Francisco Bay Bridge (Andre Barbosa, 2007-2008)===

| + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:baybridge.jpg]]</td>

| + | |

| − | <td>Structural engineering graduate student Andre Barbosa developed a virtual reality application for the StarCAVE. The software allows the user to view and fly through a 3D model of a large part of the new San Francisco Bay Bridge. His application uses a layered approach to reduce the number of concurrently displayed polygons in order to achieve real-time frame rates. The original data set was created by Caltrans with Bentley's MicroStation CAD software. One of Andre's accomplishments was to convert the original, highly detailed CAD data set to a 3D model that could be rendered at interactive frame rates in the StarCAVE.</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | ===Birch Aquarium (Daniel Rohrlick, 2007-2008)===

| + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:image-missing.jpg]]</td>

| + | |

| − | <td>For a project funded by the Birch Aquarium, Daniel created a 3D underwater scene with a remote-controllable submarine, showing the ocean floor around a hydrothermal vent. This project incorporates sound effects, created in collaboration with Peter Otto's group.</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | ===[[CAMERA Meta-Data Visualization]] (Sara Richardson, Andrew Prudhomme, 2007-2008)===

| + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:camera-metadata.png]]</td>

| + | |

| − | <td>This project started with Sara's Calit2 Undergraduate Scholarship and was later continued by Andrew. The visualization tool they created can display the meta-data of the CAMERA sample sites, and real-time usage data from the CAMERA project's BLAST server on a world map.</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | ===Depth of Field (Karen Lin, 2007)===

| + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:karen.jpg]]</td>

| + | |

| − | <td>Karen Lin wrote her computer science master's thesis under the direction of Professor Matthias Zwicker and Jurgen Schulze. She created a software application which can introduce depth of field into a scene rendered using an image-based technique.</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | ===[[Rincon|HD Camera Array]] (Alex Zavodny, Andrew Prudhomme, 2007)===

| + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:hd-camera-array.jpg]]</td>

| + | |

| − | <td>In this Rincon-funded project, we stream video from the two Ethernet HD cameras and display the warped videos simultaneously on the receiving end. The user can then interactively change the bitrate of the stream, change the focal depth of the virtual camera, and manually align the images. The tiled display wall is accessed through a SAGE module.</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | ===[http://www.atlasinsilico.net Atlas in Silico for Varrier] (Ruth West, Iman Mostafavi, Todd Margolis, 2007)===

| + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:atlas-in-silico.jpg]]</td>

| + | |

| − | <td>As genomics digitizes life, the organism and self are initially lost to data, but ultimately a broader meaning is re-attained. ATLAS in silico blends art, science, dynamic media and emerging technologies to reflect upon humanity's long-standing quest for an understanding of the nature, origins, and unity of life. This multimedia art installation was displayed at SIGGRAPH 2007.</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | ===[http://www.noahwf.com/screen/ Screen] (Noah Wardrip-Fruin, 2007)===

| + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:screen.jpg]]</td>

| + | |

| − | <td>Under the guidance of [http://communication.ucsd.edu/people/f_wardrip_fruin.html Noah Wardrip-Fruin] and [http://www.calit2.net/~jschulze/ Jurgen Schulze], Ava Pierce, David Coughlan, Jeffrey Kuramoto, and Stephen Boyd are adapting the multimedia art installation [http://www.noahwf.com/screen/index.html Screen] from the four-wall cave system at Brown University to the StarCAVE. This piece was displayed at SIGGRAPH 2007 and was the first virtual reality application to be demoed in the StarCAVE. It was also displayed at the Beall Center at UC Irvine in the fall of 2007. For this purpose, it was ported to a single stereo wall display.</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | ===Children's Hospital (Jurgen Schulze, 2007)===

| + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:newton.jpg]]</td>

| + | |

| − | <td>From our collaboration with Dr. Peter Newton from San Diego's Children's Hospital, we have a few computed tomography (CT) data sets of children's upper bodies, showing irregularities of their spines.</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | ===[[Super Browser]] (Vinh Huynh, Andrew Prudhomme, 2006)===

| + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:image-missing.jpg]]</td>

| + | |

| − | <td>In this project, a gnuhtml based web browser was developed, which can display various types of HTML tags, as well as very large images on web pages. There is no limit to the spatial extent of the web page, because all content is displayed with vector graphics, rather than on a pixel grid.</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | ===Cell Structures (Iman Mostafavi, 2006)===

| + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:mitochondria.jpg]]</td>

| + | |

| − | <td>NCMIR-funded graduate student Iman Mostafavi created an interactive tool for visualizing mitochondria. The user can click on the various components of a mitochondrion and take them apart to understand what it is composed of.</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | ===Terashake Volume Visualization (Jurgen Schulze, 2006)===

| + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:sage-vox-thumb.jpg]]</td>

| + | |

| − | <td>As part of the NSF-funded [http://www.optiputer.net/ Optiputer] project, Jurgen visualized part of the 4.5 terabyte [http://visservices.sdsc.edu/projects/scec/terashake/ TeraShake] earthquake data set on the 100 megapixel LambdaVision display at Calit2. For this project, he integrated his volume visualization tool VOX into [http://www.evl.uic.edu/ EVL]'s [http://www.evl.uic.edu/cavern/sage/ SAGE].</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | ===[[Protein Visualization]] (Philip Weber, Andrew Prudhomme, Krishna Subramanian, Sendhil Panchadsaram, 2005-2009)===

| + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:topsan.jpg]]</td>

| + | |

| − | <td>A VR application to view protein structures from UCSD Professor [http://www.sdsc.edu/~bourne/ Philip Bourne's] [http://www.rcsb.org/pdb/ Protein Data Bank (PDB)]. The popular molecular biology toolkit PyMOL is used to create the 3D models from the PDB files. Our application also supports protein alignment, an amino acid sequence viewer, integration of TOPSAN annotations, as well as a variety of visualization modes. Users of this application include UC Riverside (Peter Atkinson), UCSD Pharmacy (Zoran Radic), and the Scripps Research Institute (James Fee/Jon Huntoon).</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | ===Earthquake Visualization (Jurgen Schulze, 2005)===

| + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:earthquakes-thumb.jpg]]</td>

| + | |

| − | <td>Along with Debi Kilb from the [http://siovizcenter.ucsd.edu/ Scripps Institution of Oceanography (SIO)] we visualized 3D earthquake locations on a world-wide scale.</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | ===Geoscience (Jurgen Schulze, 2005)===

| + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:geoscience-thumb.jpg]]</td>

| + | |

| − | <td>In collaboration with SIO research scientist Graham Kent, we created a 3D reconstruction of an area of the floor of the Pacific Ocean. Sonar scans allow us to see the rock formations under the sea floor. The data we used in this project is typical of the data used by oil and gas companies looking for underground oil reservoirs.</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |

| − | | + | |

| − | ===[http://www.calit2.net/~jschulze/projects/vox/ VOX and Virvo (Jurgen Schulze, 1999)]===

| + | |

| − | <table>

| + | |

| − | <tr>

| + | |

| − | <td>[[Image:deskvox.jpg]]</td>

| + | |

| − | <td>Ongoing development of real-time volume rendering algorithms for interactive display at the desktop (DeskVOX) and in virtual environments (CaveVOX). Virvo is the name of the GUI-independent, OpenGL-based volume rendering library which both DeskVOX and CaveVOX use.</td>

| + | |

| − | </tr>

| + | |

| − | </table>

| + | |

| − | <hr>

| + | |