Difference between revisions of "Project3W21"

| Line 20: | Line 20: | ||

The goal of this assignment is to create a 3D application which can help with the design of a VR classroom such as UCSD's in the CSE building. | The goal of this assignment is to create a 3D application which can help with the design of a VR classroom such as UCSD's in the CSE building. | ||

| − | This project requires the use of a VR headset with two 3D-tracked controllers, such as the Oculus Quest 2, Rift (S), Vive, etc. | + | This project requires the use of a VR headset with two 3D-tracked controllers, such as the Oculus Quest 2, Rift (S), Vive, etc. Your software is not allowed to use keyboard or mouse input. |

We will give an introduction to this project in discussion on Monday, February 8th at 4pm. | We will give an introduction to this project in discussion on Monday, February 8th at 4pm. | ||

| Line 26: | Line 26: | ||

==Getting Unity ready for VR== | ==Getting Unity ready for VR== | ||

| − | To enable Oculus VR support in Unity, use the [https://assetstore.unity.com/packages/tools/integration/oculus-integration-82022 Oculus Integration package] from the Unity Asset Store. For SteamVR-compatible devices, there is a [https://assetstore.unity.com/packages/tools/integration/steamvr-plugin-32647 separate Unity asset]. For other VR systems there should also be assets available. | + | To enable Oculus VR support in Unity, use the [https://assetstore.unity.com/packages/tools/integration/oculus-integration-82022 Oculus Integration package] from the Unity Asset Store. For SteamVR-compatible devices, there is a [https://assetstore.unity.com/packages/tools/integration/steamvr-plugin-32647 separate Unity asset]. For other VR systems there should also be assets available. Contact us if you can't make your headset work. |

| − | + | ||

| − | + | ||

==Selection and Manipulation (100 Points)== | ==Selection and Manipulation (100 Points)== | ||

| − | + | Write a 3D application which puts the user in a 3D model of the VR lab at 1:1 scale (i.e., life size). [[Media:Vrlab-fbx.zip | This ZIP file]] contains the room, as well as the furniture needed for this project. | |

| − | + | ||

| − | + | ||

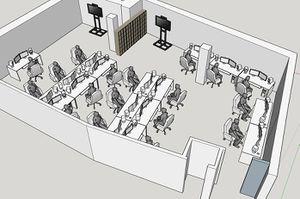

[[Image:vr-lab.jpg | 300px]] | [[Image:vr-lab.jpg | 300px]] | ||

| − | + | * Initially, place the user roughly in the center of the empty lab room (without any of the furniture in it). | |

| + | |||

| + | * Enable collision detection and physics in Unity and give the furniture as well as the room proper physics properties as well as colliders. | ||

| + | |||

| + | * Implement the following two 3D selection and manipulation methods: ray-casting and virtual hand interaction. | ||

| + | |||

| + | * Each method needs to allow the user to create a piece of furniture, place it in the desired location, and rotate it to the desired orientation. | ||

| + | |||

| + | Those are the high-level goals. In detail you will need to deal with the following: | ||

| − | + | * For ray-casting: | |

| + | ** Display a line starting at your dominant hand's controller. The ray should point forward from the controller, much like a laser pointer would. The ray should be long enough to reach all of the walls of the lab. | ||

| + | ** To select one of the desks or chairs in the room: find out which piece of furniture is intersected by the ray and highlight it. Update the highlight when the ray intersects a different piece of furniture. If the ray intersects multiple objects, highlight the object that is closest to the controller. Make sure you ignore the user's avatar for the intersection test. You can choose any highlighting method you would like, such as a wireframe box around the collider, a halo, change the object's color, make it pulsate, etc. | ||

| + | ** Allow manipulation of the highlighted object when the user pulls the trigger button of the dominant hand's controller: move the object with the ray until the trigger button is released. The motion should resemble that of a marshmallow that you hold on a stick over a campfire. When the trigger button is released, the physics engine should take over and make the object fall down just like when initially spawned. | ||

| − | + | * Allow the user to spawn desks and chairs: when the user presses the A (or X) button on the dominant hand's controller, a chair is created at about 2 meters from the user's hand on the ray. When the user presses B (or Y), a desk gets created. While the user holds down the spawn button, the desk/chair remains on the ray so that it can be positioned and oriented as desired. Once the button is released, the desk/chair falls down pulled by gravity and comes to rest on the floor or on another piece of furniture. | |

| − | |||

| − | |||

| − | |||

==Extra Credit (10 Points)== | ==Extra Credit (10 Points)== | ||

| Line 53: | Line 57: | ||

There are two options for extra credit. | There are two options for extra credit. | ||

| − | 1. Improve the spawning method: create a menu that shows up when you push and hold the trigger button on your non-dominant hand's controller. Show not just the chair and desk as options but also the other pieces of furniture in the ZIP file. Allow spawning a piece of furniture of your choice by clicking on it with the trigger button on your dominant hand's controller while the ray intersects the object you want to spawn. | + | 1. Improve the spawning method: create a menu that shows up when you push and hold the trigger button on your non-dominant hand's controller. Show not just the chair and desk as options but also the other pieces of furniture in the ZIP file. Allow spawning a piece of furniture of your choice by clicking on it with the trigger button on your dominant hand's controller while the ray intersects the object you want to spawn. |

| − | 2. Add an interaction mode for two-handed scaling of the entire world around you. By clicking and holding down both | + | 2. Add an interaction mode for two-handed scaling of the entire world around you. By clicking and holding down both grab buttons, the user can gradually scale up or down the entire room with all the furniture to work on a global scale (by scaling down) or to work more accurately (by scaling up). The room should change its scale proportionally to the distance between the controllers while the grab buttons are held. Add a function to reset to the initial 1:1 scale by pulling both grab buttons simultaneously for less than about half a second. (On Oculus controllers the grab buttons are those under the middle fingers, on Vive controllers they are the ones on the sides of the controllers.) |

Revision as of 19:12, 7 February 2021

UNDER CONSTRUCTION


Homework Assignment 3: VR Classroom Design Tool

For this assignment you can obtain 100 points, plus up to 10 points of extra credit.

The goal of this assignment is to create a 3D application which can help with the design of a VR classroom such as UCSD's in the CSE building.

This project requires the use of a VR headset with two 3D-tracked controllers, such as the Oculus Quest 2, Rift (S), Vive, etc. Your software is not allowed to use keyboard or mouse input.

We will give an introduction to this project in discussion on Monday, February 8th at 4pm.

Getting Unity ready for VR

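To enable Oculus VR support in Unity, use the Oculus Integration package (https://assetstore.unity.com/packages/tools/integration/oculus-integration-82022) from the Unity Asset Store. For SteamVR-compatible devices, there is a separate Unity asset (https://assetstore.unity.com/packages/tools/integration/steamvr-plugin-32647). For other VR systems there should also be assets available. Contact us if you can't make your headset work.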

Selection and Manipulation (100 Points)

Write a 3D application which puts the user in a 3D model of the VR lab at 1:1 scale (i.e., life size). This ZIP file (Vrlab-fbx.zip) contains the room as well as the furniture needed for this project.

- Initially, place the user roughly in the center of the empty lab room (without any of the furniture in it).

- Enable collision detection and physics in Unity, and give both the furniture and the room appropriate physics properties and colliders.

- Implement the following two 3D selection and manipulation methods: ray-casting and virtual hand interaction.

- Each method needs to allow the user to create a piece of furniture, place it in the desired location, and rotate it to the desired orientation.

Those are the high-level goals. In detail you will need to deal with the following:

- For ray-casting:

  - Display a line starting at your dominant hand's controller. The ray should point forward from the controller, much like a laser pointer, and be long enough to reach all of the walls of the lab.

  - To select one of the desks or chairs in the room, find out which piece of furniture the ray intersects and highlight it. Update the highlight when the ray intersects a different piece of furniture. If the ray intersects multiple objects, highlight the one closest to the controller. Make sure you ignore the user's avatar in the intersection test. You can choose any highlighting method you like, such as a wireframe box around the collider, a halo, a change of the object's color, or a pulsating effect.

  - Allow manipulation of the highlighted object when the user pulls the trigger button of the dominant hand's controller: move the object with the ray until the trigger button is released. The motion should resemble that of a marshmallow held on a stick over a campfire. When the trigger button is released, the physics engine should take over and make the object fall down, just as when it was initially spawned.

- Allow the user to spawn desks and chairs: when the user presses the A (or X) button on the dominant hand's controller, a chair is created on the ray, about 2 meters from the user's hand. When the user presses B (or Y), a desk is created. While the user holds down the spawn button, the desk/chair remains on the ray so that it can be positioned and oriented as desired. Once the button is released, the desk/chair falls under gravity and comes to rest on the floor or on another piece of furniture.
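
The selection and spawning rules above boil down to two small pieces of geometry: picking the closest object hit by the ray while skipping the avatar, and computing a point at a fixed distance along the ray. The following is a minimal, language-neutral sketch in Python, not Unity code. It assumes axis-aligned bounding boxes as stand-ins for colliders and a normalized ray direction; the names `Box`, `ray_box_distance`, `closest_hit`, and `point_on_ray` are hypothetical and chosen only for illustration. In Unity, the built-in physics raycast combined with a layer mask would typically take the place of the intersection test.

```python
import math
from dataclasses import dataclass


@dataclass
class Box:
    """Hypothetical stand-in for a collider: an axis-aligned bounding box."""
    name: str
    lo: tuple  # (x, y, z) minimum corner
    hi: tuple  # (x, y, z) maximum corner


def ray_box_distance(origin, direction, box):
    """Slab test: distance along the ray to the box, or None on a miss.

    Assumes `direction` is normalized, so the result is in meters.
    """
    t_near, t_far = 0.0, math.inf
    for o, d, lo, hi in zip(origin, direction, box.lo, box.hi):
        if abs(d) < 1e-9:
            # Ray is parallel to this pair of planes: must start between them.
            if o < lo or o > hi:
                return None
            continue
        t1, t2 = (lo - o) / d, (hi - o) / d
        if t1 > t2:
            t1, t2 = t2, t1
        t_near = max(t_near, t1)
        t_far = min(t_far, t2)
        if t_near > t_far:
            return None
    return t_near


def closest_hit(origin, direction, boxes, ignore=frozenset()):
    """Return (distance, box) for the nearest intersected box, or None.

    Boxes whose name is in `ignore` (e.g. the user's avatar) are skipped.
    """
    best = None
    for box in boxes:
        if box.name in ignore:
            continue
        t = ray_box_distance(origin, direction, box)
        if t is not None and (best is None or t < best[0]):
            best = (t, box)
    return best


def point_on_ray(origin, direction, distance):
    """Point at `distance` along the ray, e.g. a spawn point 2 m ahead."""
    return tuple(o + d * distance for o, d in zip(origin, direction))
```

While the trigger or spawn button is held, re-evaluating `point_on_ray` every frame with the controller's current pose and a fixed distance keeps the object at the end of the "stick"; on release, the object is handed back to the physics engine.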

Extra Credit (10 Points)

There are two options for extra credit.

1. Improve the spawning method: create a menu that shows up when you push and hold the trigger button on your non-dominant hand's controller. Show not just the chair and desk as options but also the other pieces of furniture in the ZIP file. Allow spawning a piece of furniture of your choice by clicking on it with the trigger button on your dominant hand's controller while the ray intersects the object you want to spawn.

2. Add an interaction mode for two-handed scaling of the entire world around you. By clicking and holding down both grab buttons, the user can gradually scale the entire room with all the furniture up or down, to work on a global scale (by scaling down) or to work more accurately (by scaling up). The room should change its scale proportionally to the distance between the controllers while the grab buttons are held. Add a function to reset to the initial 1:1 scale by pulling both grab buttons simultaneously for less than about half a second. (On Oculus controllers the grab buttons are those under the middle fingers; on Vive controllers they are the ones on the sides of the controllers.)
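
One possible reading of the two-handed scaling option is sketched below in Python as plain state-machine logic, not Unity code. It assumes the caller supplies the controller positions, whether both grab buttons are held, and the current time once per frame. The class name `TwoHandScaler`, its `update` method, and the exact 0.5-second threshold are illustrative assumptions based on the description above.

```python
import math


class TwoHandScaler:
    """Tracks a world scale driven by the distance between two controllers."""

    RESET_TAP_SECONDS = 0.5  # "less than about half a second" (assumed exact)

    def __init__(self):
        self.scale = 1.0
        self._start_dist = None
        self._start_scale = 1.0
        self._press_time = None

    def update(self, both_held, left_pos, right_pos, now):
        """Call once per frame; returns the current world scale."""
        dist = math.dist(left_pos, right_pos)
        if both_held:
            if self._press_time is None:
                # Both grab buttons just went down: remember the reference.
                self._press_time = now
                self._start_dist = max(dist, 1e-6)  # avoid division by zero
                self._start_scale = self.scale
            else:
                # Scale proportionally to the controller distance.
                self.scale = self._start_scale * dist / self._start_dist
        elif self._press_time is not None:
            # Buttons just released: a short tap resets to 1:1.
            if now - self._press_time < self.RESET_TAP_SECONDS:
                self.scale = 1.0
            self._press_time = None
        return self.scale
```

In this sketch, moving the hands apart to twice their initial separation doubles the scale, and a quick squeeze-and-release of both grab buttons restores life size. Applying the returned value to the room's root transform each frame is left to the application.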