Project2S17


Levels of Immersion

In this project we are going to explore the different levels of immersion we can create for the Oculus Rift. As in project 1, we're going to use the Oculus SDK, OpenGL, and C++.

Starter Code

We recommend starting either with your project 1 code, or going back to the Minimal Example you started project 1 with. It is very compact, yet it uses most of the API functions you will need.

Here is the minimal example .cpp file with comments on where the hooks are for the various functions required for this homework project. Note that this file has a main() function, so that it can be built as a console application, and will start up with a console window into which debug messages can be printed. In Visual Studio, you need to enable console mode in the project's properties window with Configuration Properties -> Linker -> System -> SubSystem -> Console.

Project Description (100 Points)

You need to do the following things:

- Modify the minimal example (in which many cubes are rendered) to render a single cube with this calibration image on all its faces, across the entire width and height of the respective face. The image needs to be right side up on the vertical faces. On top and bottom there also needs to be an image but the orientation is your choice - as long as the image isn't mirrored. The cube should be 20cm wide and its closest face should be about 30cm in front of the user. Here is source code to load the PPM file into memory so that you can apply it as a texture. Sample code for texture loading can be found in the OpenVR OpenGL example's function SetupTexturemaps(). (20 points)

- Cycle between the following four modes with the 'A' button: 3D stereo, mono (both eyes see identical view), left eye only (right eye dark), right eye only (left eye dark). Cycling means that repeated pressing of the 'A' button will change viewing modes from 3D stereo to mono to left only to right only and back to 3D stereo. Regardless of which viewing mode is active, head tracking should keep working according to the currently selected tracking mode (see the next item). (15 points)

- Cycle between head tracking modes with the 'B' button: no tracking, orientation only, position only, both. When you turn off one or both of the tracking types, freeze the last measured values (rather than defaulting to hard coded values) so that the image won't jump when you freeze tracking. (15 points)

- Gradually vary the interocular distance (IOD) with the right thumb stick left/right. For this you have to learn about how the Oculus SDK specifies the IOD. They don't just use one number for the separation distance, but each eye has a 3D offset from a central point instead. Find out what these offsets are in the default case, and modify only their horizontal offsets to change the IOD. Support an IOD range from zero to 100cm. (15 points)

- Gradually vary the physical size of the cube with the left thumb stick left/right. This means changing the width of the cube from its initial 20cm to smaller or larger values. Support a range from 1cm to 100cm. Compare the effect with changing the IOD (observe and tell us about it during grading). (15 points)

- Render this stereo panorama image around the user as a sky box. The trick here is to render different textures on the sky box for each eye, which is necessary to achieve a 3D effect. Cycle between calibration cube, stereo panorama, and both displayed simultaneously with the 'X' button. (20 points)
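The button cycling and the "freeze the last measured values" behavior described in the list above can be prototyped independently of the Oculus SDK. The following is a minimal sketch, not the required implementation. All names in it (`ViewMode`, `TrackingMode`, `nextMode`, `ButtonEdge`, `TrackingFilter`, `Pose`) are hypothetical, and `Pose` is only a stand-in for whatever pose type your SDK version returns.

```cpp
#include <cassert>

// Hypothetical types for illustration; none of these come from the Oculus SDK.
enum class ViewMode { Stereo, Mono, LeftOnly, RightOnly };
enum class TrackingMode { None, OrientationOnly, PositionOnly, Both };

struct Vec3 { float x, y, z; };
struct Quat { float x, y, z, w; };
struct Pose { Quat orientation; Vec3 position; };

// Advance to the next mode and wrap around after the last one.
inline ViewMode nextMode(ViewMode m) {
    return static_cast<ViewMode>((static_cast<int>(m) + 1) % 4);
}
inline TrackingMode nextMode(TrackingMode m) {
    return static_cast<TrackingMode>((static_cast<int>(m) + 1) % 4);
}

// Reports a press only on the frame where the button changes from
// released to pressed, so holding the button does not cycle every frame.
struct ButtonEdge {
    bool wasDown = false;
    bool pressed(bool isDown) {
        const bool edge = isDown && !wasDown;
        wasDown = isDown;
        return edge;
    }
};

// Remembers the last pose components that were allowed to update.
// A frozen component keeps its last measured value, so the image
// does not jump when tracking is switched off.
struct TrackingFilter {
    Pose held{{0.0f, 0.0f, 0.0f, 1.0f}, {0.0f, 0.0f, 0.0f}};

    Pose apply(const Pose& measured, TrackingMode mode) {
        const bool useOrientation = mode == TrackingMode::OrientationOnly ||
                                    mode == TrackingMode::Both;
        const bool usePosition = mode == TrackingMode::PositionOnly ||
                                 mode == TrackingMode::Both;
        if (useOrientation) held.orientation = measured.orientation;
        if (usePosition) held.position = measured.position;
        return held;
    }
};
```

In a render loop you would feed the current state of the 'A' and 'B' buttons into one `ButtonEdge` each, call `nextMode` when `pressed` returns true, and pass every measured head pose through `TrackingFilter::apply` before building the view matrices. Which mode the application starts in is left open here.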

Notes on Panorama Rendering

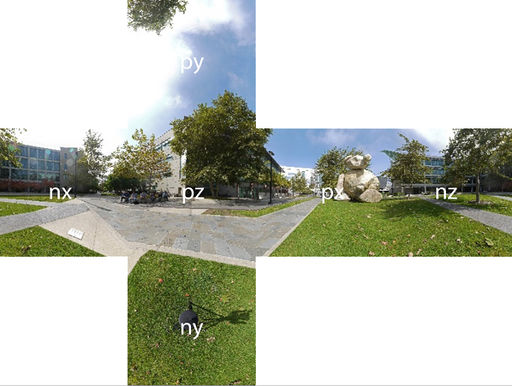

There are six cube map images in PPM format in the ZIP file. Each is 2k x 2k pixels in size. The files are named nx, ny and nz for the negative x, y and z axis images. The positive axis files are named px, py and pz. Here is a downsized picture of the panorama image:

And this is how the cube map faces are labeled:

The panorama was shot with camera lenses parallel to one another, so the resulting cube maps will need to be separated by a human eye distance when rendered, i.e., their physical placement needs to be horizontally offset from each other for each eye. The sky box must also support all of the functions you implemented for the calibration cube (A, B and X buttons, both thumb sticks).
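The per-eye horizontal offset, which is also the quantity the right thumb stick changes in the IOD task, could be computed along the following lines. This is a sketch under stated assumptions: `Vec3`, `EyeOffsets`, `updateIod` and `withIod` are hypothetical names rather than SDK types, the left eye is assumed to sit at the smaller x value, and the adjustment speed of 5cm per second is arbitrary. Read the actual default offsets from the SDK, as the assignment asks, and copy them into a structure like this one.

```cpp
#include <algorithm>
#include <cassert>

// Hypothetical stand-ins for the per-eye 3D offsets reported by the SDK.
struct Vec3 { float x, y, z; };
struct EyeOffsets { Vec3 left, right; };

constexpr float kMinIod = 0.0f;  // meters
constexpr float kMaxIod = 1.0f;  // meters (100cm, the upper limit in the task)

// Integrate the thumb stick deflection (-1..1) over the frame time and
// clamp the result to the supported IOD range.
inline float updateIod(float iod, float stickX, float dtSeconds,
                       float metersPerSecond = 0.05f) {
    assert(dtSeconds >= 0.0f);
    const float next = iod + stickX * metersPerSecond * dtSeconds;
    return std::min(kMaxIod, std::max(kMinIod, next));
}

// Keep the default y and z components and the default horizontal midpoint,
// and replace only the horizontal separation with the requested IOD.
inline EyeOffsets withIod(const EyeOffsets& defaults, float iod) {
    const float centerX = 0.5f * (defaults.left.x + defaults.right.x);
    EyeOffsets out = defaults;
    out.left.x = centerX - 0.5f * iod;   // assumption: left eye at smaller x
    out.right.x = centerX + 0.5f * iod;
    return out;
}
```

With an IOD of zero both eyes end up at the same point, which should look the same as the mono viewing mode. Keeping the default offsets unmodified and deriving the current offsets from them each frame avoids accumulating rounding error.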

Make the sky box very large - at least 20 meters wide, and centered around the user's head tracking location.

Extra Credit (up to 10 points)

There are four options for extra credit. Note that the options below aren't cumulative: you can get points for only one of them.

- Take two photos at an eye distance apart (about 65mm), and show each of them in one eye of the Rift without tracking. You should see a stereo image. Make it an alternate option to the Bear image when the 'X' button is pressed. (2 points)

- Create your own monoscopic sky box: borrow the Samsung Gear 360 camera from the media lab, or use your cell phone's panorama function to capture a 360 degree panorama picture. Process it into cube maps - this on-line conversion tool can do that for you. Texture the sky box with this texture. Make it an alternate option to the Bear image when the 'X' button is pressed. (5 points)

- Monoscopic panoramic video: Download one of the 360 degree movies from https://www.videoblocks.com/videos/footage/360-files. Display it as a video texture in the Rift. For that you'll need to extract the image frames from the video, for instance with FFmpeg (www.ffmpeg.com). Then you need to create a spherical triangle mesh with texture coordinates. Then you render each frame onto the inside of the sphere. Finally, you need to play back the video at the frame rate it was shot at, regardless of the frame rate the Rift renders at (usually 90 fps). Auto-repeat the movie once it ends. (8 points)

- Stereoscopic panoramic video: Similar to Option 3, but now in stereo. Download one of the movies from http://www.rmit3dv.com. There will be a file for the left and one for the right eye. Using the same approach as in Option 3, but for each eye separately, process the video and play it back in the Rift with head tracking so that you can look around the video as it's playing. (10 points)
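For the two video options, playing the movie at its own frame rate regardless of the Rift's render rate comes down to choosing the frame from elapsed wall-clock time instead of counting rendered frames. A minimal sketch follows. The function name `videoFrameIndex` and its parameters are hypothetical, and it assumes the extracted frames are numbered from 0 to `frameCount - 1`.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>

// Map elapsed playback time to the index of the video frame to display.
// The modulo makes the movie repeat automatically once it ends.
inline std::size_t videoFrameIndex(double elapsedSeconds, double videoFps,
                                   std::size_t frameCount) {
    assert(videoFps > 0.0 && frameCount > 0);
    if (elapsedSeconds < 0.0) elapsedSeconds = 0.0;
    const auto absoluteFrame =
        static_cast<unsigned long long>(std::floor(elapsedSeconds * videoFps));
    return static_cast<std::size_t>(absoluteFrame % frameCount);
}
```

Called once per rendered frame with the time since playback started, this returns the same index for several consecutive render frames when the video is slower than the display (for example a 30 fps video on a 90 fps display), so the texture only needs to be re-uploaded when the index changes. For the stereoscopic option the same index would be used for both the left and the right eye's frame sequence.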