Discussion2F16


Overview

For those who couldn't make it to discussion today, here's a recap of what went on.

The discussion slides for today can be viewed here

The Rasterizer

For part 4 of the first homework assignment, you will need to do what OpenGL normally does for you - convert all of your objects' local 3D coordinates into window coordinates, so that you can produce a 2D image that can be viewed.

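In order to achieve this, you will need to use the following formula:

$$p' = D \cdot P \cdot C^{-1} \cdot M \cdot p$$

Here p is a vertex in object space, M is the model matrix, C^{-1} is the inverse camera matrix, P is the projection matrix, and D (the name used here for the image-space matrix) maps the result to pixel coordinates.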

Let's break each of the components down here, starting from right to left.

Object Space

The original vertex p starts in object space. This is the coordinate system that is inherent to our object, in this case the vertex.

World Space

World space is the space we are in after our transformations have been applied to the object. This allows multiple objects to coexist and share the same world. The matrix that performs this transformation is usually written as M, for model.

By this point we actually already know how to do world space transformations! We've already done this in the previous parts of homework 1. When we were transforming—translating, scaling, orbiting—the cube, we were already changing from object space to world space. If you're not done with the transformation portion of the homework yet, look at the cube code below:

void Cube::spin(float deg)
{
    this->angle += deg;
    if (this->angle > 360.0f || this->angle < -360.0f) this->angle = 0.0f;
    // This creates the matrix to rotate the cube
    this->toWorld = glm::rotate(glm::mat4(1.0f), this->angle / 180.0f * glm::pi<float>(), glm::vec3(0.0f, 1.0f, 0.0f));
}

Notice how the cube's spin method, which you've all seen work, simply changes one member variable, this->toWorld, at the end? The toWorld matrix is what we call our model matrix, and it is what takes us from object space to world space (hence the name toWorld).

However, there is a problem with how the spin method modifies this->toWorld here, one that we've purposefully left in. Did you spot it while working on the parts before part 4 of homework 1?

Camera Space

Camera space describes how the world looks in relation to the camera, or, said differently, the world with the camera as the reference point. The camera matrix is usually written as C, for camera. An important point to note is that we actually require the inverse of C.

Why do we need the inverse? Good question! Think about moving the camera in the positive direction by 20. If we think about that camera as our reference point, to the camera it would seem that the other objects of the world are moving in the negative direction by 20, or in other words, the inverse direction of the camera's translation. Remember that we're applying these transformations to p, our vertex, which is an object of the world. Hence, we need to apply the inverse transformation of the camera in our rasterization equation.

So how do we create this matrix? Well, let's first see how we did it in OpenGL. If you look at Window.cpp's Window::resize_callback you'll find the line:

// Move camera back 20 units so that it looks at the origin (or else it's in the origin)
glTranslatef(0, 0, -20);

This is exactly the example I gave above. glTranslatef moves the objects of the world, so we can imagine that our camera is actually at (0, 0, 20). We call this position e, for eye. The point that the camera is looking at is called d. Usually this will be set to the origin (0, 0, 0). Finally, the camera needs to know which way is up, so that the three basis vectors of camera space can be completed. In most cases this is a unit vector pointing towards positive Y: (0, 1, 0). Using these three vectors, we can call the convenient glm::lookAt function like so:

glm::mat4 C_inverse = glm::lookAt(e, d, up);

Consult the lecture if you want to know what creating this matrix manually looks like. It will be important to know!

Projection Space

Projection space is what the world looks like after we've applied a projection that warps the world into something closer to what our human visual system sees. There are multiple projections we could use, but we're going to stick with perspective projection for most of the 3D graphics in this class. Perspective projection warps our world such that closer objects seem larger and farther objects seem smaller.

So how is the perspective transformation constructed? Let's again look at how OpenGL does it. Look at Window.cpp's Window::resize_callback and you'll find the line:

// Set the perspective of the projection viewing frustum
gluPerspective(60.0, (double)width / (double)height, 1.0, 1000.0);

This sets the FOV (field of view) to 60.0 degrees, the aspect ratio to width / height, the near plane to 1.0, and the far plane to 1000.0. Much like the inverse camera matrix, glm provides a convenient function for us to create an equivalent matrix:

glm::mat4 P = glm::perspective(glm::radians(60.0f), (float) width / (float) height, 1.0f, 1000.0f);

Consult the lecture if you want to know what creating this matrix manually looks like. It will be important to know!

Image Space

Finally, we're at the matrix that takes a 3D point and gives us 2D pixel coordinates! Almost there.

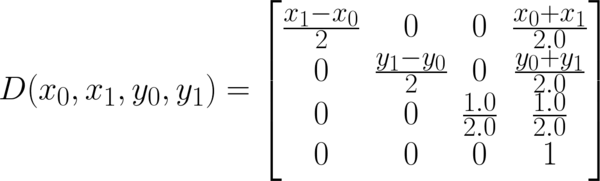

This is the one matrix that glm won't conveniently create for us, so we'll have to create it ourselves using the following formula:

Multiplying everything in the rasterization equation together gives the final p'.

However, this is not the end: p' is still in homogeneous coordinates, so we need to divide x' and y' by w' to find the actual pixel coordinates. Therefore, our pixel coordinates will be (x'/w', y'/w').

General announcements

Please take note that the project must be demoed in person on Friday 10/07 between 2-4PM in either B260 or B270. You must also submit a zipped package containing your source code (*.cpp and *.h files only) onto TritonEd before 2PM that same day.

If you are unable to make it to the labs during grading time, please send the professor an email as soon as possible to explain why you cannot attend. Once approved, you can be graded during any of the course staff's office hours the following week.

During the late grading session, if you demo using unmodified code pulled from TritonEd that was submitted prior to the deadline, then you will not get a late grading penalty. If you use code that was modified or submitted after the deadline, then you will get a late grading penalty.