Difference between revisions of "Projects"

From Immersive Visualization Lab Wiki

| (128 intermediate revisions by 7 users not shown) | |||

| Line 1: | Line 1: | ||

| − | =<b> | + | =<b>Most Recent Projects</b>= |

| − | = | + | ===Immersive Visualization Center (Jurgen Schulze, 2020-2021)=== |

| − | + | ||

| − | = | + | |

<table> | <table> | ||

<tr> | <tr> | ||

| − | <td>[[Image: | + | <td>[[Image:IVC-Color-Logo-01.png|250px]]</td> |

| − | <td></td> | + | <td>Dr. Schulze founded the [http://ivc.ucsd.edu Immersive Visualization Center (IVC)] to bring together immersive visualization researchers and students with researchers from domains that need immersive visualization using virtual reality or augmented reality technologies. The IVC is located at UCSD's [https://qi.ucsd.edu/ Qualcomm Institute]. |

| + | </td> | ||

</tr> | </tr> | ||

</table> | </table> | ||

<hr> | <hr> | ||

| − | === | + | ===3D Medical Imaging Pilot (Larry Smarr, Jurgen Schulze, 2018-2021)=== |

<table> | <table> | ||

<tr> | <tr> | ||

| − | <td>[[Image: | + | <td>[[Image:3dmip.jpg|250px]]</td> |

| − | <td></td> | + | <td>In this [https://ucsdnews.ucsd.edu/feature/helmsley-charitable-trust-grants-uc-san-diego-4.7m-to-study-crohns-disease $1.7M collaborative grant with Dr. Smarr], we have been developing software tools to support surgical planning, training, and patient education for Crohn's disease.<br> |

| + | '''Publications:''' | ||

| + | * Lucknavalai, K., Schulze, J.P., [http://web.eng.ucsd.edu/~jschulze/publications/Lucknavalai2020.pdf "Real-Time Contrast Enhancement for 3D Medical Images using Histogram Equalization"], In Proceedings of the International Symposium on Visual Computing (ISVC 2020), San Diego, CA, Oct 5, 2020 | ||

| + | * Zhang, M., Schulze, J.P., [http://web.eng.ucsd.edu/~jschulze/publications/Zhang2021.pdf "Server-Aided 3D DICOM Image Stack Viewer for Android Devices"], In Proceedings of IS&T The Engineering Reality of Virtual Reality, San Francisco, CA, January 21, 2021 | ||

| + | '''Videos:''' | ||

| + | * [https://drive.google.com/file/d/1pi1veISuSlj00y82LfuPEQJ1B8yXWkGi/view?usp=sharing VR Application for Oculus Rift S] | ||

| + | * [https://drive.google.com/file/d/1MH2L6yc5Un1mo4t37PRUTXq8ptFIbQf3/view?usp=sharing Android companion app] | ||

| + | </td> | ||

</tr> | </tr> | ||

</table> | </table> | ||

<hr> | <hr> | ||

| − | ===[ | + | ===[https://www.darpa.mil/program/explainable-artificial-intelligence XAI] (Jurgen Schulze, 2017-2021)=== |

<table> | <table> | ||

<tr> | <tr> | ||

| − | <td>[[Image: | + | <td>[[Image:xai-icon.jpg|250px]]</td> |

| − | <td> | + | <td>The effectiveness of AI systems is limited by machines' current inability to explain their decisions and actions to human users. The Department of Defense (DoD) is facing challenges that demand more intelligent, autonomous, and symbiotic systems. The Explainable AI (XAI) program aims to '''create a suite of machine learning techniques that produce more explainable models''' while maintaining a high level of learning performance (prediction accuracy), and to enable human users to understand, appropriately trust, and effectively manage the emerging generation of artificially intelligent partners. This project is a collaboration with the [https://www.sri.com/ Stanford Research Institute (SRI) in Princeton, NJ]. Our responsibility is the development of the web interface, as well as in-person user studies, for which we have recruited over 100 subjects so far.<br> |

| + | '''Publications:''' | ||

| + | * Alipour, K., Schulze, J.P., Yao, Y., Ziskind, A., Burachas, G., [http://web.eng.ucsd.edu/~jschulze/publications/Alipour2020a.pdf "A Study on Multimodal and Interactive Explanations for Visual Question Answering"], In Proceedings of the Workshop on Artificial Intelligence Safety (SafeAI 2020), New York, NY, Feb 7, 2020 | ||

| + | * Alipour, K., Ray, A., Lin, X., Schulze, J.P., Yao, Y., Burachas, G.T., [http://web.eng.ucsd.edu/~jschulze/publications/Alipour2020b.pdf "The Impact of Explanations on AI Competency Prediction in VQA"], In Proceedings of the IEEE conference on Humanized Computing and Communication with Artificial Intelligence (HCCAI 2020), Sept 22, 2020 | ||

| + | </td> | ||

</tr> | </tr> | ||

</table> | </table> | ||

<hr> | <hr> | ||

| − | === | + | ===DataCube (Jurgen Schulze, Wanze Xie, Nadir Weibel, 2018-2019)=== |

<table> | <table> | ||

<tr> | <tr> | ||

| − | <td>[[Image: | + | <td>[[Image:datacube.jpg|250px]]</td> |

| − | <td> | + | <td>In this project we developed a [https://www.pwc.com/us/en/industries/health-industries/library/doublejump/bodylogical-healthcare-assistant.html Bodylogical]-powered augmented reality tool for the Microsoft HoloLens to analyze the health of a population, such as the employees of a corporation.<br> |

| − | + | '''Publications:''' | |

| + | * Xie, W., Liang, Y., Johnson, J., Mower, A., Burns, S., Chelini, C., D'Alessandro, P., Weibel, N., Schulze, J.P., [http://web.eng.ucsd.edu/~jschulze/publications/Xie2020.pdf "Interactive Multi-User 3D Visual Analytics in Augmented Reality"], In Proceedings of IS&T The Engineering Reality of Virtual Reality, San Francisco, CA, January 30, 2020 | ||

| + | '''Videos:''' | ||

| + | * [https://drive.google.com/file/d/1Ba6uS9PFiHTh4IKcVwQWPgMGERNr-5Fd/view?usp=sharing Live demonstration] | ||

| + | * [https://drive.google.com/file/d/1_DcW8RZJvUhGmYEwIbLgJ2W0lWVdsLw7/view?usp=sharing Feature summary] | ||

| + | </td> </tr> | ||

</table> | </table> | ||

<hr> | <hr> | ||

| − | ===[[ | + | ===[[Catalyst]] (Tom Levy, Jurgen Schulze, 2017-2019)=== |

<table> | <table> | ||

<tr> | <tr> | ||

| − | <td>[[Image: | + | <td>[[Image:cavekiosk.jpg|250px]]</td> |

| − | <td>The goal of | + | <td>This collaborative grant with Prof. Levy is a UCOP-funded $1.07M Catalyst project on cyber-archaeology, titled [https://ucsdnews.ucsd.edu/pressrelease/new_3_d_cavekiosk_at_uc_san_diego_brings_cyber_archaeology_to_geisel "3-D Digital Preservation of At-Risk Global Cultural Heritage"]. The goal was to develop software and hardware infrastructure to support the digital preservation and dissemination of 3D cyber-archaeology data, such as point clouds, panoramic images, and 3D models.<br> |

| + | '''Publications:''' | ||

| + | * Levy, T.E., Smith, C., Agcaoili, K., Kannan, A., Goren, A., Schulze, J.P., Yago, G., [http://web.eng.ucsd.edu/~jschulze/publications/Levy2020.pdf "At-Risk World Heritage and Virtual Reality Visualization for Cyber-Archaeology: The Mar Saba Test Case"], In Forte, M., Murteira, H. (Eds.): "Digital Cities: Between History and Archaeology", April 2020, pp. 151-171, DOI: 10.1093/oso/9780190498900.003.0008 | ||

| + | * Schulze, J.P., Williams, G., Smith, C., Weber, P.P., Levy, T.E., "CAVEkiosk: Cultural Heritage Visualization and Dissemination", a chapter in the book "A Sense of Urgency: Preserving At-Risk Cultural Heritage in the Digital Age", Editors: Lercari, N., Wendrich, W., Porter, B., Burton, M.M., Levy, T.E., accepted by Equinox Publishing for publication in 2021</td> | ||

</tr> | </tr> | ||

</table> | </table> | ||

<hr> | <hr> | ||

| − | ===[[ | + | ===[[CalVR]] (Andrew Prudhomme, Philip Weber, Giovanni Aguirre, Jurgen Schulze, since 2010)=== |

<table> | <table> | ||

<tr> | <tr> | ||

| − | <td>[[Image: | + | <td>[[Image:Calvr-logo4-200x75.jpg|250px]]</td> |

| − | <td> | + | <td>CalVR is our virtual reality middleware (a.k.a. VR engine), which we have been developing for our graphics clusters. It runs on anything from a laptop to a large multi-node CAVE, and builds under Linux, Windows, and macOS. More information about how to obtain and build the code can be found on our [[CalVR | CalVR page for software developers]].<br> |

| + | '''Publications:''' | ||

| + | * J.P. Schulze, A. Prudhomme, P. Weber, T.A. DeFanti, [http://web.eng.ucsd.edu/~jschulze/publications/Schulze2013.pdf "CalVR: An Advanced Open Source Virtual Reality Software Framework"], In Proceedings of IS&T/SPIE Electronic Imaging, The Engineering Reality of Virtual Reality, San Francisco, CA, February 4, 2013, ISBN 9780819494221</td> | ||

</tr> | </tr> | ||

</table> | </table> | ||

<hr> | <hr> | ||

| − | = | + | =<b>[[Past Projects|Older Projects]]</b>= |

Latest revision as of 21:10, 9 September 2021

Most Recent Projects

Immersive Visualization Center (Jurgen Schulze, 2020-2021)

Dr. Schulze founded the Immersive Visualization Center (IVC) to bring together immersive visualization researchers and students with researchers from domains that need immersive visualization using virtual reality or augmented reality technologies. The IVC is located at UCSD's Qualcomm Institute.

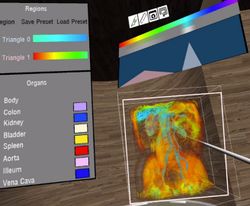

3D Medical Imaging Pilot (Larry Smarr, Jurgen Schulze, 2018-2021)

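In this $1.7M collaborative grant with Dr. Smarr, we have been developing software tools to support surgical planning, training, and patient education for Crohn's disease.

Publications:

* Lucknavalai, K., Schulze, J.P., "Real-Time Contrast Enhancement for 3D Medical Images using Histogram Equalization", In Proceedings of the International Symposium on Visual Computing (ISVC 2020), San Diego, CA, Oct 5, 2020
* Zhang, M., Schulze, J.P., "Server-Aided 3D DICOM Image Stack Viewer for Android Devices", In Proceedings of IS&T The Engineering Reality of Virtual Reality, San Francisco, CA, January 21, 2021

Videos:

* VR Application for Oculus Rift S
* Android companion app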

XAI (Jurgen Schulze, 2017-2021)

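The effectiveness of AI systems is limited by machines' current inability to explain their decisions and actions to human users. The Department of Defense (DoD) is facing challenges that demand more intelligent, autonomous, and symbiotic systems. The Explainable AI (XAI) program aims to create a suite of machine learning techniques that produce more explainable models while maintaining a high level of learning performance (prediction accuracy), and to enable human users to understand, appropriately trust, and effectively manage the emerging generation of artificially intelligent partners. This project is a collaboration with the Stanford Research Institute (SRI) in Princeton, NJ. Our responsibility is the development of the web interface, as well as in-person user studies, for which we have recruited over 100 subjects so far.

Publications:

* Alipour, K., Schulze, J.P., Yao, Y., Ziskind, A., Burachas, G., "A Study on Multimodal and Interactive Explanations for Visual Question Answering", In Proceedings of the Workshop on Artificial Intelligence Safety (SafeAI 2020), New York, NY, Feb 7, 2020
* Alipour, K., Ray, A., Lin, X., Schulze, J.P., Yao, Y., Burachas, G.T., "The Impact of Explanations on AI Competency Prediction in VQA", In Proceedings of the IEEE conference on Humanized Computing and Communication with Artificial Intelligence (HCCAI 2020), Sept 22, 2020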

DataCube (Jurgen Schulze, Wanze Xie, Nadir Weibel, 2018-2019)

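In this project we developed a Bodylogical-powered augmented reality tool for the Microsoft HoloLens to analyze the health of a population, such as the employees of a corporation.

Publications:

* Xie, W., Liang, Y., Johnson, J., Mower, A., Burns, S., Chelini, C., D'Alessandro, P., Weibel, N., Schulze, J.P., "Interactive Multi-User 3D Visual Analytics in Augmented Reality", In Proceedings of IS&T The Engineering Reality of Virtual Reality, San Francisco, CA, January 30, 2020

Videos:

* Live demonstration
* Feature summary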

Catalyst (Tom Levy, Jurgen Schulze, 2017-2019)

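This collaborative grant with Prof. Levy is a UCOP-funded $1.07M Catalyst project on cyber-archaeology, titled "3-D Digital Preservation of At-Risk Global Cultural Heritage". The goal was to develop software and hardware infrastructure to support the digital preservation and dissemination of 3D cyber-archaeology data, such as point clouds, panoramic images, and 3D models.

Publications:

* Levy, T.E., Smith, C., Agcaoili, K., Kannan, A., Goren, A., Schulze, J.P., Yago, G., "At-Risk World Heritage and Virtual Reality Visualization for Cyber-Archaeology: The Mar Saba Test Case", In Forte, M., Murteira, H. (Eds.): "Digital Cities: Between History and Archaeology", April 2020, pp. 151-171, DOI: 10.1093/oso/9780190498900.003.0008
* Schulze, J.P., Williams, G., Smith, C., Weber, P.P., Levy, T.E., "CAVEkiosk: Cultural Heritage Visualization and Dissemination", a chapter in the book "A Sense of Urgency: Preserving At-Risk Cultural Heritage in the Digital Age", Editors: Lercari, N., Wendrich, W., Porter, B., Burton, M.M., Levy, T.E., accepted by Equinox Publishing for publication in 2021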

CalVR (Andrew Prudhomme, Philip Weber, Giovanni Aguirre, Jurgen Schulze, since 2010)

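CalVR is our virtual reality middleware (a.k.a. VR engine), which we have been developing for our graphics clusters. It runs on anything from a laptop to a large multi-node CAVE, and builds under Linux, Windows, and macOS. More information about how to obtain and build the code can be found on our CalVR page for software developers.

Publications:

* J.P. Schulze, A. Prudhomme, P. Weber, T.A. DeFanti, "CalVR: An Advanced Open Source Virtual Reality Software Framework", In Proceedings of IS&T/SPIE Electronic Imaging, The Engineering Reality of Virtual Reality, San Francisco, CA, February 4, 2013, ISBN 9780819494221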