Difference between revisions of "ArtifactVis with Android Device"

(→Screen Shots) |

(→Developers) |

||

| (54 intermediate revisions by one user not shown) | |||

| Line 1: | Line 1: | ||

===Project Overview=== | ===Project Overview=== | ||

| + | |||

| + | This project allow users to use the android device (as a client) to connect with CalVR (as a server) by the TCP protocol, then they can move any object and interact with it in the virtual world by using the android device. | ||

| + | It allow users to move objects on the CalVR by using any kind of mode such as Move World, Scale, Drive and Fly, and then see the object movement changes on the android device. Also, they can use the android device (client side) to move the camera around the object to see the object from all sides. Finally, they can use the android device (client side) for picking objects to receive pictures about this object. They can use all these techniques with Artifact Archeology project to interact with the historical objects in the real world. Also they can use this plug-in with other projects. | ||

| Line 14: | Line 17: | ||

** IP Address: Use the same IP address on the server side and put it on the code | ** IP Address: Use the same IP address on the server side and put it on the code | ||

** Use this PORT '''28888''' | ** Use this PORT '''28888''' | ||

| − | ** Use the same object that used in server side and put the object on the android memory card and wrtie this path on the code '''/mnt/sdcard/create_any_file name/object_name''' | + | ** Use the same object that is used in server side and put the object on the android memory card and wrtie this path on the code '''/mnt/sdcard/create_any_file name/object_name''' |

'''To compile and run the code do the following:''' | '''To compile and run the code do the following:''' | ||

| Line 27: | Line 30: | ||

'''CalVR''' | '''CalVR''' | ||

| − | After that Copy the client code to the eclips workspase and do compile to the Android ndk (C++) by using the following: | + | After that, Copy the client code to the eclips workspase and do compile to the Android ndk (C++) by using the following: |

*Go to this path on the terminal '''/home/saseeri/workspace/OSGTest1-AndroidClient''' | *Go to this path on the terminal '''/home/saseeri/workspace/OSGTest1-AndroidClient''' | ||

| Line 33: | Line 36: | ||

'''ndk-build''' | '''ndk-build''' | ||

Then go to the eclipse right click on the project then run it after you connect the android device | Then go to the eclipse right click on the project then run it after you connect the android device | ||

| + | |||

| + | *Click on Start Client | ||

| + | *Then Connect Server | ||

| + | |||

| + | |||

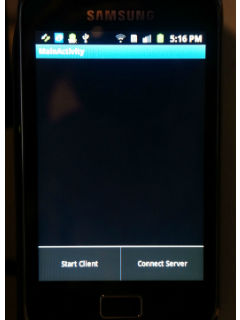

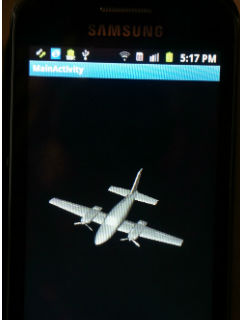

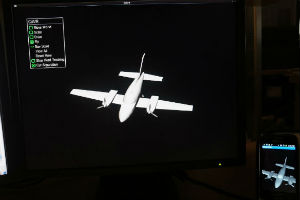

| + | [[Image:client.jpg]] [[Image:plane.jpg]] | ||

| Line 45: | Line 54: | ||

'''On Phone Device (Client):''' | '''On Phone Device (Client):''' | ||

| − | * | + | * Eclipse |

* Linux-sdk | * Linux-sdk | ||

* Android-ndk | * Android-ndk | ||

| Line 52: | Line 61: | ||

'''Equipment''' | '''Equipment''' | ||

*Android Device (I used Galaxy Phone Samsung) | *Android Device (I used Galaxy Phone Samsung) | ||

| + | |||

===Installing OSG for Android on Linux OS=== | ===Installing OSG for Android on Linux OS=== | ||

| − | This is the path to find | + | This is the path to find installation of OSG for Android on Linux OS |

'''/home/saseeri/CVRPlugins/calit2/MagicLens/Installing OSG for Android on Linux OS.pdf''' | '''/home/saseeri/CVRPlugins/calit2/MagicLens/Installing OSG for Android on Linux OS.pdf''' | ||

| − | |||

| − | |||

| − | |||

| − | |||

| − | |||

| − | |||

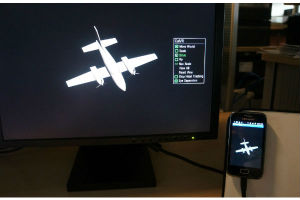

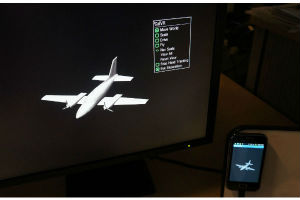

===Screen Shots=== | ===Screen Shots=== | ||

| − | + | [[Image:1.jpg]] [[Image:2.jpg]] [[Image:3.jpg]] | |

| − | + | [[Image:4.jpg]] [[Image:5.jpg]] [[Image:6.jpg]] | |

| − | |||

| − | |||

| − | + | We can translate the object from the android device by touch screen | |

| − | + | ||

| − | + | [[Image:translat11.jpg]] [[Image:translat22.jpg]] [[Image:translat33.jpg]] | |

| − | |||

| − | + | Also, we can rotate the object from the android device | |

| − | |||

| − | + | [[Image:rotate1.jpg]] [[Image:rotate2.jpg]] | |

| − | |||

| − | + | ===What is done so far ...=== | |

| + | * load model into Android Device and CalVR with osg | ||

| + | * Connect the Android Device and CalVR over wifi (by using TCP protocol) | ||

| + | * Move objects on the CalVR by using any kind of mode such as Move World, Scale, Drive and Fly, and then see the object movement changes on the android device | ||

| − | |||

| + | ===Future Work=== | ||

| + | * Move the camera around the object to see the object from all sides | ||

| + | * Picking objects to receive pictures about this object | ||

| − | + | ===Developers=== | |

| − | + | '''Software Developer''' | |

| + | * Sahar Aseeri | ||

| − | + | '''Project Advisor''' | |

| − | + | *Jurgen schulze | |

| − | + | ||

| − | + | ||

| − | ''' | + | |

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | * | + | |

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

Latest revision as of 18:08, 27 August 2012


Project Overview

This project allows users to connect an Android device (as a client) to CalVR (as a server) over TCP, and then move and interact with any object in the virtual world from the Android device. Users can move objects in CalVR using any navigation mode, such as Move World, Scale, Drive, and Fly, and see the resulting object movement on the Android device. The Android device (client side) is also intended to let users move the camera around an object to view it from all sides, and to pick objects in order to receive pictures of the picked object. All of these techniques can be used with the Artifact Archeology project to interact with historical objects from the real world, and the plug-in can also be used with other projects.

Usage Instructions

- Server

  - The server code is located at /home/saseeri/CVRPlugins/calit2/MagicLens

  - IP address: run ifconfig on the command line to find the server's IP address

  - Port: use 28888

  - Model objects can be found in the /home/saseeri/Development/OpenSceneGraph-Data folder

- Client

  - The client code is located at /home/saseeri/CVRPlugins/calit2/MagicLens, in the folder named OSGTest1-AndroidClient

  - IP address: use the server's IP address and set it in the client code

  - Port: use 28888

  - Use the same object as on the server side: copy it to the Android memory card and write its path in the client code as /mnt/sdcard/create_any_file name/object_name
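Before building the client, it can help to confirm that the server's IP address and port are reachable from the network the phone is on. The following is a minimal sketch in Python, not part of the plug-in itself; the function name can_connect and the example address 192.168.1.10 are hypothetical, and only the port number 28888 comes from the instructions above.

```python
import socket

CALVR_PORT = 28888  # port given in the usage instructions


def can_connect(host: str, port: int = CALVR_PORT, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to (host, port) can be opened."""
    try:
        # create_connection resolves the host and performs the TCP handshake.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False


if __name__ == "__main__":
    # Hypothetical address: replace it with the server IP reported by ifconfig.
    print(can_connect("192.168.1.10"))
```

If this check fails while the CalVR server is running, the usual suspects are a wrong IP address, a firewall, or the two machines being on different networks.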

To compile and run the code, do the following:

Start with the server:

- In a terminal, go to /home/saseeri/CVRPlugins/calit2/MagicLens

Then type in the terminal:

make ... CalVR

After that, copy the client code to the Eclipse workspace and compile it with the Android NDK (C++) as follows:

- In a terminal, go to /home/saseeri/workspace/OSGTest1-AndroidClient

Then type in the terminal:

ndk-build

Then connect the Android device, right-click the project in Eclipse, and run it. In the app:

- Click Start Client

- Then click Connect Server

Software Tools and Equipment

Software Tools

On PC (Server):

- Linux Operating System

- OpenSceneGraph 3.1

- CalVR

On Phone Device (Client):

- Eclipse

- Linux-sdk

- Android-ndk

- OpenSceneGraph 3.1 Android

Equipment

- Android device (I used a Samsung Galaxy phone)

Installing OSG for Android on Linux OS

The installation guide for OSG for Android on Linux can be found at this path:

/home/saseeri/CVRPlugins/calit2/MagicLens/Installing OSG for Android on Linux OS.pdf

Screen Shots

The object can be translated from the Android device using the touch screen.

The object can also be rotated from the Android device.

What is done so far ...

- Load a model into the Android device and CalVR with OSG

- Connect the Android device and CalVR over Wi-Fi (using the TCP protocol)

- Move objects in CalVR using any navigation mode, such as Move World, Scale, Drive, and Fly, and see the resulting object movement on the Android device
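One way to mirror object movement from the server to the client is to send the object's 4x4 transform over the TCP connection each time it changes. The sketch below is an illustration in Python and not the plug-in's actual wire format, which this page does not describe. The frame layout (16 little-endian doubles, row-major) and all function names are assumptions; the translation is placed in the last row, following the OSG matrix convention.

```python
import socket
import struct

# Assumed frame layout: one 4x4 matrix as 16 little-endian doubles, row-major.
MATRIX_FORMAT = "<16d"
MATRIX_SIZE = struct.calcsize(MATRIX_FORMAT)  # 128 bytes


def encode_matrix(matrix):
    """Flatten a 4x4 nested list and pack it into a fixed-size frame."""
    flat = [value for row in matrix for value in row]
    if len(flat) != 16:
        raise ValueError("expected a 4x4 matrix")
    return struct.pack(MATRIX_FORMAT, *flat)


def decode_matrix(payload):
    """Unpack a fixed-size frame back into a 4x4 nested list."""
    flat = struct.unpack(MATRIX_FORMAT, payload)
    return [list(flat[i:i + 4]) for i in range(0, 16, 4)]


def recv_exact(sock, size):
    """Read exactly `size` bytes; TCP may deliver a frame in several pieces."""
    data = bytearray()
    while len(data) < size:
        chunk = sock.recv(size - len(data))
        if not chunk:
            raise ConnectionError("socket closed before a full matrix arrived")
        data.extend(chunk)
    return bytes(data)


def translation(x, y, z):
    """Build a translation matrix with the offset in the last row."""
    return [
        [1.0, 0.0, 0.0, 0.0],
        [0.0, 1.0, 0.0, 0.0],
        [0.0, 0.0, 1.0, 0.0],
        [float(x), float(y), float(z), 1.0],
    ]


if __name__ == "__main__":
    # A connected socket pair stands in for the server and client ends.
    server_end, client_end = socket.socketpair()
    server_end.sendall(encode_matrix(translation(1, 2, 3)))
    print(decode_matrix(recv_exact(client_end, MATRIX_SIZE)))
    server_end.close()
    client_end.close()
```

A fixed-size frame keeps the receiver simple, since it always knows how many bytes to wait for; a real protocol with several message types would likely add a small header.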

Future Work

- Move the camera around an object to view it from all sides

- Pick objects to receive pictures of the picked object

Developers

Software Developer

- Sahar Aseeri

Project Advisor

- Jurgen Schulze