Difference between revisions of "Project3W21"

Revision as of 11:11, 18 February 2021

Homework Assignment 3: Classroom Design Tool

Prerequisites:

- Windows or Mac PC

- Unity

- GitHub Repo (accept from GitHub Classroom link)

- VR headset with two 3D-tracked controllers, such as the Oculus Quest 2, Rift (S), Vive, etc.

Learning objectives:

- Physics in Unity

- Selection and manipulation with virtual hand and ray-casting.

- Travel with the Grabbing the Air technique

For this assignment you can obtain 100 points, plus up to 10 points of extra credit.

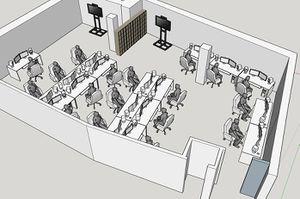

The goal of this assignment is to create a 3D application which can help with the design of a classroom such as UCSD's VR lab in the CSE building.

All interaction (unless otherwise noted) has to be done with the VR system (headset and controllers). The user is not allowed to use the keyboard or mouse.

We will give an introduction to this project in discussion on Monday, February 8th at 4pm.

Getting Unity ready for VR

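To enable Oculus VR support in Unity, use the Oculus Integration package (https://assetstore.unity.com/packages/tools/integration/oculus-integration-82022) from the Unity Asset Store. For SteamVR-compatible devices, there is a separate Unity asset (https://assetstore.unity.com/packages/tools/integration/steamvr-plugin-32647). For other VR systems there should also be assets available. Contact us if you can't make your headset work.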

Selection and Manipulation (80 Points)

Write a 3D application which puts the user in a 3D model of the VR lab at 1:1 scale (i.e., life size). This ZIP file (Vrlab-fbx.zip) contains the room, as well as the furniture needed for this project.

- Initially, place the user roughly in the center of the empty lab room (without any of the furniture in it).

- Enable collision detection and physics in Unity, and give both the furniture and the room colliders and appropriate physics properties.

- Implement the following two 3D selection and manipulation methods: ray-casting and virtual hand interaction. Switch between them with the X button on your controllers.

- Each method needs to allow the user to create a piece of furniture, place it in the desired location, and rotate it to the desired orientation.

Those are the high-level goals. In detail you will need to deal with the following:

Set up the Room

- Load the VR lab model

- Place the user roughly in the center of the VR lab

- Make sure you can look around the room with your headset

Spawning of Furniture

When the user presses the A button on the controller, a chair is created at about 2 meters from the user's hand on the ray. When the user presses B, a desk is created. While the user holds down the spawn button, the desk/chair remains on the ray so that it can be positioned and oriented as desired. Once the button is released, the desk/chair falls down pulled by gravity and comes to rest on the floor or on another piece of furniture. Repeated pushes on A or B should create more pieces of furniture of that type.
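The spawn position described above is just a point on the ray at a fixed distance from the hand. The following is a minimal, engine-agnostic sketch of that arithmetic in Python, not Unity code; the function names `normalize` and `point_on_ray` are hypothetical, and positions are plain `(x, y, z)` tuples in meters.

```python
import math

def normalize(v):
    """Return v scaled to unit length."""
    length = math.sqrt(sum(c * c for c in v))
    if length == 0.0:
        raise ValueError("direction must be non-zero")
    return tuple(c / length for c in v)

def point_on_ray(origin, direction, distance=2.0):
    """Point at `distance` meters from `origin` along `direction`."""
    d = normalize(direction)
    return tuple(o + distance * c for o, c in zip(origin, d))

# Assumed example values: hand 1.2 m above the floor, pointing along +z.
hand_position = (0.0, 1.2, 0.0)
hand_forward = (0.0, 0.0, 1.0)
spawn_position = point_on_ray(hand_position, hand_forward)
```

While the spawn button is held, the same function can be re-evaluated every frame with the current hand pose so that the new piece of furniture stays on the ray.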

Ray-Casting

- Display a line starting at your dominant hand's controller. The ray should point forward from the controller, much like a laser pointer would. The ray should be long enough to reach all of the walls of the lab.

- To select one of the desks or chairs in the room, find out which piece of furniture is intersected by the ray and highlight it. Update the highlight when the ray intersects a different piece of furniture. If the ray intersects multiple objects, highlight the object that is closest to the controller. Make sure you ignore the user's avatar in the intersection test. You can choose any highlighting method you like, such as a wireframe box around the collider, a halo, a change of the object's color, or a pulsating effect.

- Allow manipulation of the highlighted object when the user pulls the trigger button of the dominant hand's controller: move the object with the ray until the trigger button is released. The motion should resemble that of a marshmallow that you hold on a stick over a campfire. When the trigger button is released, the physics engine should take over and make the object fall down just like when initially spawned.
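The selection rule above (closest intersected object, avatar ignored) can be sketched without any engine. This is only an illustration in Python under a simplifying assumption: every object is approximated by a bounding sphere, whereas your Unity colliders will typically be boxes or meshes and the engine's own ray-cast would do this work. The names `ray_sphere_distance` and `pick_closest` are hypothetical.

```python
import math

def ray_sphere_distance(origin, direction, center, radius):
    """Distance along a unit-length ray to the first hit, or None on a miss."""
    oc = tuple(c - o for o, c in zip(origin, center))  # origin -> center
    t_closest = sum(a * b for a, b in zip(oc, direction))
    d2 = sum(a * a for a in oc) - t_closest * t_closest  # squared miss distance
    if d2 > radius * radius:
        return None
    half_chord = math.sqrt(radius * radius - d2)
    t = t_closest - half_chord
    if t < 0.0:
        t = t_closest + half_chord  # ray starts inside the sphere
    return t if t >= 0.0 else None

def pick_closest(origin, direction, objects, ignore=()):
    """Name of the nearest object hit by the ray, skipping names in `ignore`."""
    best_name, best_t = None, math.inf
    for name, (center, radius) in objects.items():
        if name in ignore:
            continue
        t = ray_sphere_distance(origin, direction, center, radius)
        if t is not None and t < best_t:
            best_name, best_t = name, t
    return best_name

# Assumed scene: name -> (center, bounding radius), all in meters.
scene = {
    "avatar": ((0.0, 0.0, 0.1), 0.5),
    "chair": ((0.0, 0.0, 3.0), 0.5),
    "desk": ((0.0, 0.0, 6.0), 1.0),
}
highlighted = pick_closest((0.0, 0.0, 0.0), (0.0, 0.0, 1.0), scene, ignore={"avatar"})
```

Running the test every frame and comparing the result with the previously highlighted object is one way to decide when the highlight needs to be updated.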

Virtual Hand

- Display a sphere by your dominant hand's controller. The sphere should be about 0.1 meters wide. Add a collider to the sphere.

- When the sphere collides with a piece of furniture, highlight it. When the user pulls the trigger on the controller, start moving the piece of furniture with the hand. This motion is very similar to ray-casting and should act much like ray-casting with a very short ray.
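Both manipulation methods keep the grabbed object rigidly attached to the controller, like the marshmallow on its stick; for the virtual hand the "stick" is simply very short. One possible way to express this, sketched here in plain Python with 3x3 rotation matrices rather than Unity's own types, is to store the object's pose relative to the controller when the trigger is pulled and re-apply it every frame. All function names are hypothetical.

```python
import math

def mat_mul(a, b):
    """Product of two 3x3 matrices given as tuples of rows."""
    return tuple(tuple(sum(a[i][k] * b[k][j] for k in range(3))
                       for j in range(3)) for i in range(3))

def mat_vec(m, v):
    """Apply a 3x3 matrix to a 3-vector."""
    return tuple(sum(m[i][k] * v[k] for k in range(3)) for i in range(3))

def transpose(m):
    """Transpose, which is also the inverse for a rotation matrix."""
    return tuple(zip(*m))

def rot_y(angle):
    """Rotation about the vertical (y) axis by `angle` radians."""
    c, s = math.cos(angle), math.sin(angle)
    return ((c, 0.0, s), (0.0, 1.0, 0.0), (-s, 0.0, c))

def begin_grab(ctrl_pos, ctrl_rot, obj_pos, obj_rot):
    """On trigger press: express the object's pose in the controller's frame."""
    inv = transpose(ctrl_rot)
    offset = mat_vec(inv, tuple(o - c for o, c in zip(obj_pos, ctrl_pos)))
    return offset, mat_mul(inv, obj_rot)

def update_grab(ctrl_pos, ctrl_rot, local_offset, local_rot):
    """Each frame while held: rebuild the object's world pose."""
    world_offset = mat_vec(ctrl_rot, local_offset)
    pos = tuple(c + w for c, w in zip(ctrl_pos, world_offset))
    return pos, mat_mul(ctrl_rot, local_rot)

# Assumed example: object 2 m in front of the controller when grabbed.
offset, local_rot = begin_grab((0.0, 0.0, 0.0), rot_y(0.0),
                               (0.0, 0.0, 2.0), rot_y(0.0))
# The controller then moves 1 m along x and turns 90 degrees about y.
new_pos, new_rot = update_grab((1.0, 0.0, 0.0), rot_y(math.pi / 2),
                               offset, local_rot)
```

In this example the object swings around with the controller and ends up roughly at (3, 0, 0), rotated by the same 90 degrees. When the trigger is released, the object is handed back to the physics engine so that it falls as described above.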

Travel

Implement the Grabbing the Air Technique for the user to move themselves through the classroom. Don't check for collisions but allow the user to go through furniture and walls.

Use the grab buttons for this functionality: on Oculus controllers they are at the middle fingers, on Vive or Microsoft XR controllers they are called grip buttons.
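One way to think about Grabbing the Air is that the point in the world where the grab button was pressed stays pinned under the hand, so the user moves in the opposite direction of the hand motion. The sketch below illustrates this in Python under two assumptions that may not hold in your project: the controller position is given in the tracking space of the camera rig (not in world space), and the rig is neither rotated nor scaled. The class name `GrabAirTravel` is hypothetical.

```python
class GrabAirTravel:
    """Moves the camera rig so the grabbed world point stays under the hand."""

    def __init__(self, rig_position):
        self.rig_position = tuple(rig_position)
        self._anchor = None  # world-space point pinned while the grip is held

    def update(self, grip_held, ctrl_local):
        """Call once per frame with the controller position in rig space."""
        if not grip_held:
            self._anchor = None
            return self.rig_position
        if self._anchor is None:
            # Grip just pressed: remember where the hand is in the world.
            self._anchor = tuple(r + c for r, c in zip(self.rig_position, ctrl_local))
        # Place the rig so that rig + hand offset equals the anchor again.
        self.rig_position = tuple(a - c for a, c in zip(self._anchor, ctrl_local))
        return self.rig_position

# Assumed example: the hand is pulled 0.3 m toward the body along z.
travel = GrabAirTravel((0.0, 0.0, 0.0))
travel.update(True, (0.2, 1.0, 0.3))           # grip pressed, nothing moves yet
rig_after_pull = travel.update(True, (0.2, 1.0, 0.0))
```

In the example the rig moves 0.3 m forward along z, as if the user had pulled themselves along a rope. Using the tracking-space position matters because the controller's world position changes whenever the rig itself is moved.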

Extra Credit (10 Points)

You can choose between the following options for a maximum of 10 points of extra credit.

- Add an interaction mode for two-handed scaling of the entire world around you. By clicking and holding down both trigger buttons, the user can gradually scale up or down the entire room with all the furniture to work on a global scale (by scaling down) or to work more accurately (by scaling up). The room should change its scale proportionally to the distance between the controllers while the buttons are held. Add a function to reset to the initial 1:1 scale by pulling both trigger buttons simultaneously for less than about half a second. (4 points)

- Implement the Go-Go Hand technique and replace the regular virtual hand with it. In your demo video you do not need to show regular virtual hand interaction. (4 points)

- Allow saving and loading of the furniture configuration. You can use keyboard keys (such as 's' for save and 'l' for load). You need to save to a file and be able to load from the file after quitting the app and restarting it. (4 points)

- Create a 3D mini map of the room to interact with the furniture. Also add teleporting to wherever the user points in the mini map. (6 points)
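Two of the options above reduce to short formulas. The Python sketch below shows one possible form of each: a world scale that is proportional to the distance between the controllers, and the commonly used Go-Go mapping in which the virtual hand follows the real hand up to a threshold distance and extends quadratically beyond it. The function names and the default values for `threshold` and `gain` are assumptions chosen for illustration, not values prescribed by this assignment.

```python
import math

def world_scale(start_scale, start_dist, current_dist, min_dist=1e-3):
    """Scale proportional to the controller distance since both triggers were pressed."""
    if start_dist < min_dist:
        return start_scale  # avoid dividing by a near-zero distance
    return start_scale * current_dist / start_dist

def gogo_reach(real_dist, threshold=0.4, gain=6.0):
    """Virtual hand distance from the body for a given real hand distance (meters)."""
    if real_dist < threshold:
        return real_dist  # close to the body: one-to-one mapping
    return real_dist + gain * (real_dist - threshold) ** 2

# Assumed example: controllers start 0.5 m apart and are pulled to 1.0 m apart.
left, right = (-0.5, 1.0, 0.3), (0.5, 1.0, 0.3)
scale = world_scale(1.0, 0.5, math.dist(left, right))
reach = gogo_reach(0.9)
```

In this example the room doubles in size, and a real hand 0.9 m from the body maps to a virtual hand about 2.4 m away. Resetting to 1:1 scale then amounts to restoring the start scale when both triggers are released within about half a second.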

Submission Instructions

Once you are done implementing the project, record a video demonstrating all the functionality you have implemented by creating various chairs and desks and placing them around the room.

The video should be no longer than 5 minutes, and can be substantially shorter. The video format should ideally be MP4, but any other format the graders can view will also work.

While recording the video, record your voice explaining what aspects of the project requirements are covered. Record the video off the screen while you are wearing the VR headset.

To create the video you don't need to use video editing software.

- On any platform, you should be able to use Zoom to record a video.

- For Windows:

  - Windows 10 has a built-in screen recorder.

  - If that doesn't work, the free OBS Studio is very good.

- On Macs you can use Quicktime.

Components of your submission:

- Video: Upload the video at the Assignment link on Canvas. Also add a text comment stating which functionality you have or have not implemented and what extra credit you have implemented. If you couldn't implement something in its entirety, please state which parts you did implement and expect to get points for.

  - Example 1: I've done the base project with no issues. No extra credit.

  - Example 2: Everything works except an issue with x: I couldn't get y to work properly.

  - Example 3: Sections 1, 2 and 4 are fully implemented.

  - Example 4: The base project is complete and I did z for extra credit.

- Executable: Build your Unity project into an Android .apk, a Windows .exe file or the Mac equivalent, and upload it to Canvas as a zip file.

- Source code: Upload your Unity project to GitHub: either use the Unity repository initialized from GitHub Classroom or any GitHub repository that you set up on your own. Make sure you use the .gitignore file for Unity that is included in the repo so that only project sources are uploaded (the .gitignore file goes in the root folder of your project). Then submit the GitHub link to Gradescope by using 'Submission Method: GitHub', along with your repository link and the respective GitHub branch. (Note: GitHub Classroom is only for starter code distribution, not for grading. Since we don't have any starter code for HW1, you can also set up your own repo with the Unity .gitignore file we provided and submit that to Gradescope.)

In summary, submit to Canvas (1) the video and (2) the zipped executable files (.exe, .dll, .apk, etc.), and submit to Gradescope (3) the source code.