Project2S20


Latest revision as of 11:15, 11 May 2020


Levels of Immersion

Due Date: Sun, May 10 @ 11:59 PM PST

In this project we are going to explore different levels of immersion with your VR headset. As in Project 1, we are using Unity with C#.

We recommend starting with your code from Project 1 and adding the relevant sections for the cubes and the sky box.

Milestones

- Week 1: Students should have changed the sky box to 3D, added a custom object, and built the menu in the scene.

- Week 2: Students should have implemented the menu functionality as well as the stereo mode rendering.

- Week 3: Students should have the remaining functionality implemented.

Project Description (100 Points)

You need to add the following features to your project and make it work on your VR headset (smartphone-based, or a PC VR headset such as an Oculus or Vive).

Starter Files: Download (P2Resources.zip)

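- 3D Sky box (15 Points) The sky box in Unity is monoscopic by default, which makes it look flat, like a poster on a wall. This is fine for far-away objects such as mountains and trees, but it doesn't look right for a closer environment. Create a stereoscopic sky box using these skybox textures (bear-stereo-cubemaps-1k.zip) and the custom shader provided here. (Alternatively, you can use these [higher resolution skybox textures](https://drive.google.com/file/d/1rj40Uo-bJC_Ch9uJ_nXn0TTyt7F1o-FA/view?usp=sharing).) Here is a [great tutorial on 3D skyboxes in Unity](https://medium.com/@mheavers/implementing-a-stereo-skybox-into-unity-for-virtual-reality-e427cf338b06).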

- Custom Object (5 Points) Create or add a custom asset to use in your project. You can simply create a cube and apply a texture, or create your own model. You will need to use a custom shader for this project, though, so you will need to convert the asset's materials to work with this shader. You can place the object wherever you wish in the scene.

- Gaze Interaction Menu (10 Points) Create a menu with buttons that you can interact with using the gaze system. The menu provides the controls for the options below. A texture is provided that you can apply to a plane if you'd rather not create your own or add text. You can place the menu wherever you wish in the scene.

- Stereo Modes (15 Points) Implement the functionality to switch the rendering mode between Stereo, Mono, Left Eye Only, and Right Eye Only. You will need the provided class and material shaders to implement this. The scene should render in exactly one of these modes at a time, and all other functionality should keep working in every mode.

- Rendering Scale (15 Points) Implement the functionality to change the rendering scale of your custom object. Support at least the range from half size (0.5x scale) to double size (2.0x scale), as well as a reset button (1.0x scale). Make sure the object doesn't move: its position and rotation must stay the same, and only its size changes.

- Head Tracking (10 Points) Implement the functionality to disable and enable head tracking (position and rotation).

- Variable IOD (15 Points) Implement the functionality to change the default interocular distance (IOD). Each eye is offset from the central position; support at least the range from -0.1 m to +0.3 m, plus a reset button. Unity does not support this operation by default, so it's okay if things don't look exactly right or if some other aspects break (such as the reticle or gaze system).

- Rendering Lag (15 Points) Implement the functionality to simulate rendering lag. This is what it would feel like if a frame took longer than the allotted time to render and the same image was shown for more than one frame. Support 1/2 of the frame rate (usually 30 FPS), 1/4 (usually 15 FPS), and 1/10 (usually 6 FPS), as well as a reset button.
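The menu-driven features above (stereo modes, rendering scale, variable IOD, and rendering lag) mostly come down to a little state plus some clamping and frame counting. The sketch below is plain Python rather than Unity C#, so the arithmetic can be checked on its own. The `ImmersionSettings` class, its method names, and the 0.064 m default IOD are hypothetical; it also assumes that "Left Eye Only" means the other eye is rendered black, that "Mono" means one image rendered from the head center is shown to both eyes, and that the -0.1 m to +0.3 m range applies to the total IOD. In Unity, the same logic would sit in a C# MonoBehaviour that applies these values to the object's transform, the eye cameras, and the rendering loop.

```python
from dataclasses import dataclass
from enum import Enum

# Hypothetical default; check your own headset's IOD.
DEFAULT_IOD = 0.064  # meters


class StereoMode(Enum):
    STEREO = 1
    MONO = 2
    LEFT_ONLY = 3
    RIGHT_ONLY = 4


@dataclass
class ImmersionSettings:
    """State that the gaze-menu buttons would modify (hypothetical class)."""
    stereo_mode: StereoMode = StereoMode.STEREO
    object_scale: float = 1.0    # multiplier on the custom object's original scale
    iod: float = DEFAULT_IOD     # interocular distance in meters
    lag_divisor: int = 1         # 1 = no lag; 2, 4, 10 = 1/2, 1/4, 1/10 of the frame rate
    head_tracking: bool = True

    def scale_by(self, factor: float) -> None:
        # Clamp to the required 0.5x .. 2.0x range. Only the size changes;
        # position and rotation are not touched.
        self.object_scale = min(2.0, max(0.5, self.object_scale * factor))

    def reset_scale(self) -> None:
        self.object_scale = 1.0

    def set_iod(self, iod: float) -> None:
        # Clamp to the required -0.1 m .. +0.3 m range (assumed to be the total IOD).
        self.iod = min(0.3, max(-0.1, iod))

    def reset_iod(self) -> None:
        self.iod = DEFAULT_IOD

    def eye_offsets(self) -> tuple:
        """Offsets of the (left, right) eye along the head's right axis, in meters.

        A negative IOD puts the left eye on the right side and vice versa.
        """
        half = self.iod / 2.0
        return (-half, half)

    def eye_images(self) -> tuple:
        """What the (left, right) displays show; None means 'render black'."""
        if self.stereo_mode is StereoMode.STEREO:
            return ("left", "right")
        if self.stereo_mode is StereoMode.MONO:
            return ("center", "center")  # one image from the head center, shown to both eyes
        if self.stereo_mode is StereoMode.LEFT_ONLY:
            return ("left", None)
        return (None, "right")  # RIGHT_ONLY

    def should_render(self, frame_index: int) -> bool:
        # Render a fresh image only every `lag_divisor` display frames;
        # on the other frames the previous image is shown again.
        return frame_index % self.lag_divisor == 0
```

For example, with `lag_divisor = 4` on a 60 Hz display, `should_render` is true for frames 0, 4, 8, and so on, which gives an effective 15 FPS. Keeping the divisor as an integer (rather than a target FPS) makes the reset button simply `lag_divisor = 1`.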

Tips for Rendering the Skybox

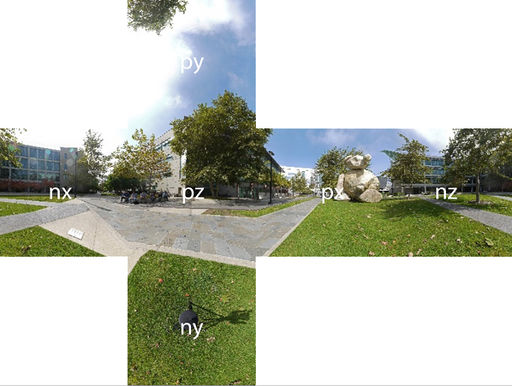

There are six cube map images in JPG format in the ZIP file. Each is 1k x 1k pixels in size. The files are named nx, ny and nz for the negative x, y and z axis images. The positive axis files are named px, py and pz. Here is a downsized picture of the panorama image:

And this is how the cube map faces are labeled:

The panorama was shot with camera lenses parallel to one another, so the left-eye and right-eye cube maps need to be separated by a human eye distance when rendered; i.e., their physical placement must be horizontally offset from each other, one for each eye.
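To make the horizontal offset concrete, here is a small plain-Python sketch of where each eye's sky box cube would be centered. It assumes each eye gets its own textured cube that only that eye's camera can see (for example, by putting the two cubes on separate layers and setting each camera's culling mask accordingly). The function names and the 0.064 m default eye distance are hypothetical, and the yaw helper assumes Unity's convention of y up, yaw 0 looking along +z, and positive yaw turning right.

```python
import math


def normalize(v):
    """Return v scaled to unit length."""
    length = math.sqrt(sum(c * c for c in v))
    if length == 0.0:
        raise ValueError("direction must be non-zero")
    return tuple(c / length for c in v)


def right_from_yaw(yaw_degrees):
    """Horizontal 'right' direction for a head yaw (Unity-style axes assumed)."""
    yaw = math.radians(yaw_degrees)
    return (math.cos(yaw), 0.0, -math.sin(yaw))


def eye_cube_centers(head_pos, right_dir, eye_distance=0.064):
    """Centers of the left-eye and right-eye sky box cubes.

    Each cube is shifted half the eye distance from the head position along
    the head's right axis, so the two cubes end up eye_distance apart.
    """
    r = normalize(right_dir)
    half = eye_distance / 2.0
    left = tuple(p - half * c for p, c in zip(head_pos, r))
    right = tuple(p + half * c for p, c in zip(head_pos, r))
    return left, right
```

With the head at (0, 1.6, 0) facing +z, `eye_cube_centers((0.0, 1.6, 0.0), (1.0, 0.0, 0.0))` places the cubes at x = -0.032 and x = +0.032, i.e. one eye distance apart along the head's right axis.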

Submission Instructions

Finally, record a video demonstrating all the functionality you have implemented.

- 3D Skybox - You can just look around to show that the sky box is in 3D.

- Custom Object - Make sure it's in the video.

- Gaze Interaction Menu - Make sure it's in the video.

- Stereo Modes - Make sure you record your video in stereo (both eyes) to show the effects.

- Rendering Scale - Look at your object before and after interacting with the functionality.

- Head Tracking - If you are recording on a phone or headset, stay on this option for a while to show that the HMD is no longer being tracked. If recording in Unity, show your mouse pointer trying to move the scene around.

- Variable IOD - Make sure you record your video in stereo to show the effects.

- Rendering Lag - After selecting one of the options, look around the scene to show that duplicate frames are being shown. (You will have to record your video at the same frame rate as your display's refresh rate.)

Upload this video to Canvas, and add a comment stating which functionality you have and have not implemented, and which extra credit you have implemented. If you couldn't implement something in its entirety, please describe the issues you ran into.

- Example 1: I've done the base project with no issues. No extra credit.

- Example 2: Everything works except for an issue with x: I couldn't get y to work properly.

- Example 3: Sections 1-3, 5-6, and 8 work.

- Example 4: The base project is complete and I did z for extra credit.

You don't need video editing software to create the video, but you should use screen capture software to record your screen to a video file.

- If you're using a PC-linked VR headset: [OBS Studio](https://obsproject.com/) is available free of charge.

- On iPhones, screen recording is easy with [these instructions](https://support.apple.com/en-us/HT207935).

- Newer Samsung Galaxy phones with the Game Tools feature have a built-in screen recording mode that is disabled by default. Find out [here](https://www.androidcentral.com/how-use-game-tools-samsung-galaxy-s7) how to enable and use it.

- The [Mobizen Screen Recorder app](https://play.google.com/store/apps/details?id=com.rsupport.mvagent) should work on any Android phone. The free version adds a watermark, which is acceptable.

Extra Credit (up to 10 points)

There are four options for extra credit, each worth 5 points, for a total of 10 points maximum.

- Viewmaster Simulator: Take two regular, non-panoramic photos an eye distance apart (about 65 mm) with a regular camera, such as the one in your cell phone. Set your camera to its widest angle, as close to a 90 degree field of view as you can get. Crop the edges to make the images square and exactly the same size. Use your custom images as the textures on a rectangle for each eye. You may have to shift one of the images left or right to see a correct stereo image that doesn't hurt your eyes. (5 points)

- Custom Sky Box: Create your own (monoscopic) sky box: use your cell phone's panorama function to capture a 360 degree panorama picture (or use Google's StreetView app, which is free for Android and iPhone). Process it into cube maps; [this online conversion tool](https://jaxry.github.io/panorama-to-cubemap/) can do this for you. Texture the sky box with the resulting textures. Note that you'll have to download each of the six textures separately. Make it an alternative option to the Bear image. (5 points)

- Super-Rotation: Modify the regular orientation tracking so that it exaggerates horizontal head rotations by a factor of two. This means that, starting when the user's head faces straight forward, any rotation to the left or right (heading) is multiplied by two, and this new head orientation is used to render the image. Do not modify pitch or roll. In this mode the user will be able to look behind them by just rotating their head by 90 degrees to either side. Get this mode to work with your skybox and calibration cubes, tracking fully on, and correct stereo rendering. [This publication](https://ieeexplore.ieee.org/document/7547900) gives more information about this technique. (5 points)

- Inverted Stereo Rendering: Extend your stereo modes to include an inverted stereo mode, in which the image that is usually rendered for the left eye is displayed on the right eye and vice versa. (5 points)
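Two of the extra-credit items involve small pieces of arithmetic that can be tried out independently of Unity. The plain-Python sketch below shows one way to compute the super-rotated heading (wrapping angles so that a 90 degree head turn to either side maps to looking straight behind) and matching square crops for the Viewmaster photos. All function names are hypothetical. In Unity, one possible approach is to read the head rotation's Euler angles, replace only the yaw with the super-rotated value, and rebuild the rotation with `Quaternion.Euler`; keep in mind that Unity reports `eulerAngles.y` in the 0 to 360 range, and that Euler decomposition becomes unreliable when looking almost straight up or down.

```python
def wrap_degrees(angle):
    """Wrap an angle into the range (-180, 180]."""
    a = (angle + 180.0) % 360.0 - 180.0
    return 180.0 if a == -180.0 else a


def super_rotated_yaw(head_yaw, forward_yaw=0.0, gain=2.0):
    """Super-rotation: amplify heading relative to the calibrated forward direction.

    Only yaw (heading) is changed; pitch and roll should be passed through untouched.
    """
    relative = wrap_degrees(head_yaw - forward_yaw)
    return wrap_degrees(forward_yaw + gain * relative)


def matching_square_crops(size_a, size_b):
    """Centered square crop boxes of identical size for two photos.

    Sizes are (width, height) in pixels; boxes are (left, top, right, bottom),
    the same convention Pillow's Image.crop uses.
    """
    side = min(size_a[0], size_a[1], size_b[0], size_b[1])

    def box(size):
        width, height = size
        left = (width - side) // 2
        top = (height - side) // 2
        return (left, top, left + side, top + side)

    return box(size_a), box(size_b)
```

The `forward_yaw` parameter stands in for the heading recorded when the user faces straight forward, so the factor of two applies relative to that direction: `super_rotated_yaw(90.0)` and `super_rotated_yaw(-90.0)` both return 180.0, i.e. looking straight behind.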